OminiControl: Minimal and Universal Control for Diffusion Transformer

Zhenxiong Tan, Songhua Liu, Xingyi Yang, Qiaochu Xue, Xinchao Wang

2024-11-25

Summary

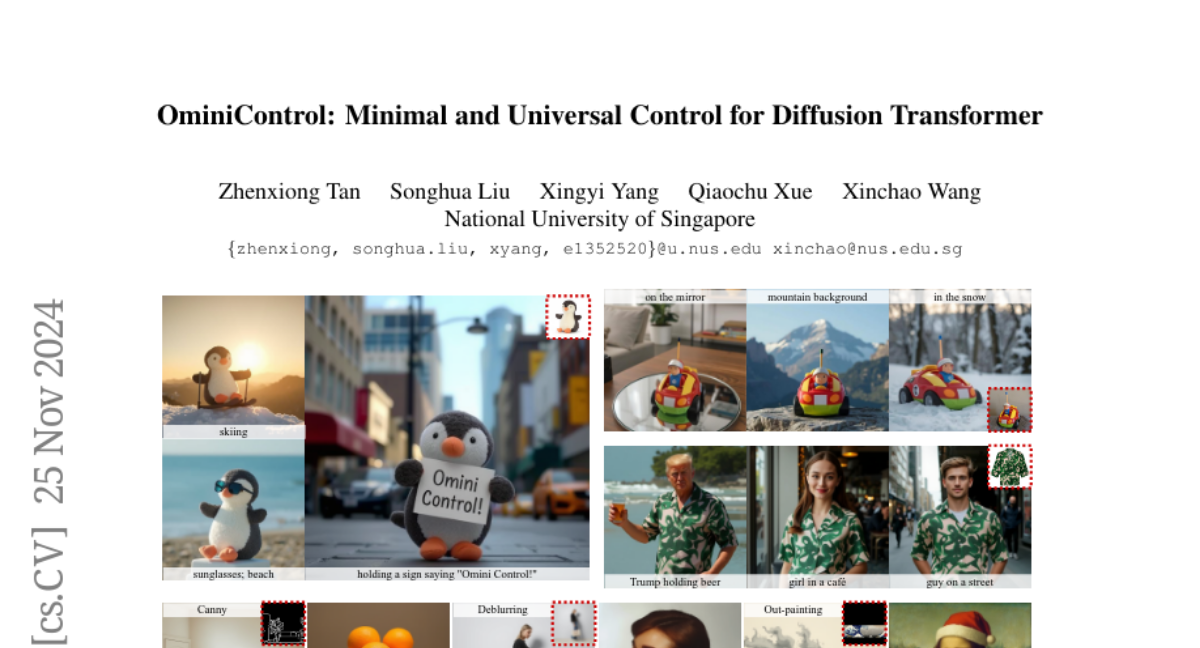

This paper introduces OminiControl, a new framework that enhances the capabilities of Diffusion Transformer (DiT) models by efficiently integrating image conditions for generating images with specific features.

What's the problem?

Current methods for controlling image generation in Diffusion Transformers often require complex additional components, which can be inefficient and cumbersome. These methods struggle to incorporate various image conditions, such as edges or depth, effectively while maintaining high performance without adding too many extra parameters.

What's the solution?

OminiControl solves this problem by using a parameter reuse mechanism that allows the DiT to integrate image conditions directly with minimal additional parameters (only about 0.1%). This framework enables the model to handle a wide range of tasks, such as generating images based on specific subjects or aligning them with spatial features. OminiControl achieves this by training on images generated by the DiT itself, which helps it learn how to create images that reflect specific styles or characteristics more accurately.

Why it matters?

This research is significant because it makes it easier and more efficient to generate high-quality images tailored to specific conditions without needing complicated setups. By simplifying the process and reducing the need for extra components, OminiControl can help artists and developers create more personalized and varied visual content, advancing the field of image generation technology.

Abstract

In this paper, we introduce OminiControl, a highly versatile and parameter-efficient framework that integrates image conditions into pre-trained Diffusion Transformer (DiT) models. At its core, OminiControl leverages a parameter reuse mechanism, enabling the DiT to encode image conditions using itself as a powerful backbone and process them with its flexible multi-modal attention processors. Unlike existing methods, which rely heavily on additional encoder modules with complex architectures, OminiControl (1) effectively and efficiently incorporates injected image conditions with only ~0.1% additional parameters, and (2) addresses a wide range of image conditioning tasks in a unified manner, including subject-driven generation and spatially-aligned conditions such as edges, depth, and more. Remarkably, these capabilities are achieved by training on images generated by the DiT itself, which is particularly beneficial for subject-driven generation. Extensive evaluations demonstrate that OminiControl outperforms existing UNet-based and DiT-adapted models in both subject-driven and spatially-aligned conditional generation. Additionally, we release our training dataset, Subjects200K, a diverse collection of over 200,000 identity-consistent images, along with an efficient data synthesis pipeline to advance research in subject-consistent generation.