OmniConsistency: Learning Style-Agnostic Consistency from Paired Stylization Data

Yiren Song, Cheng Liu, Mike Zheng Shou

2025-05-28

Summary

This paper introduces OmniConsistency, a method that helps image-stylization models change the style of an image while keeping its important content the same, regardless of which style is applied.

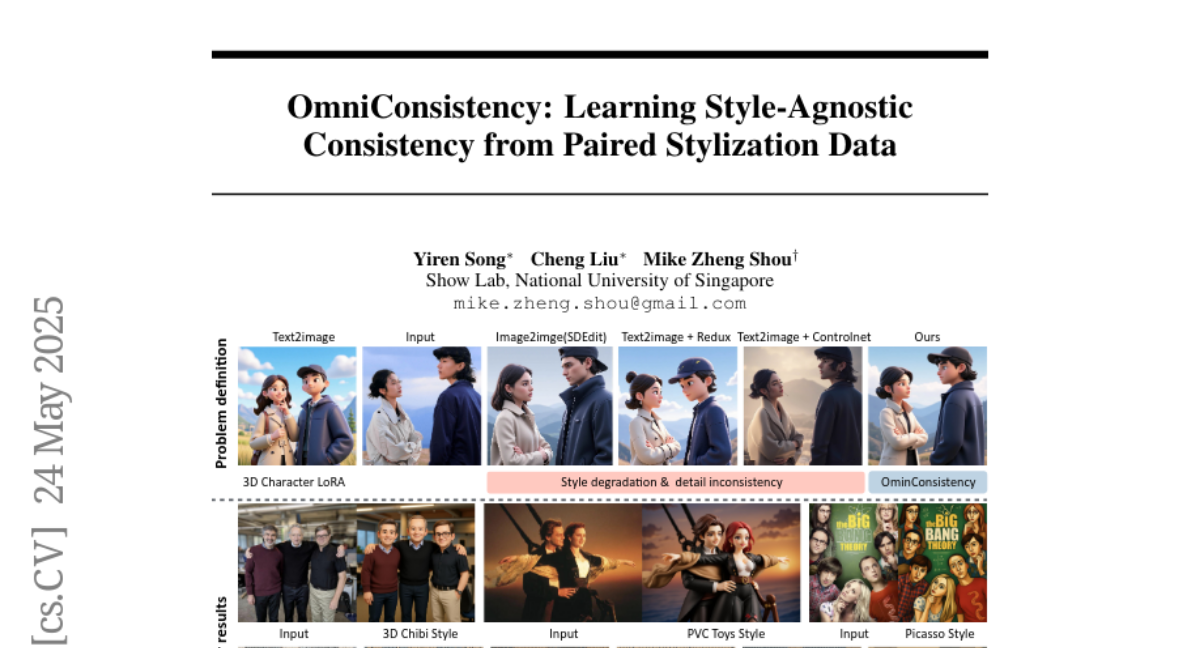

What's the problem?

When a model restyles an image, for example making a photo look like a painting, it often loses fine details or distorts the content, and how well it preserves that content can vary from one style to the next. Keeping the main content consistent across many different styles is hard.

What's the solution?

The researchers built on Diffusion Transformers, a family of large image-generation models, and trained them on many pairs of images: an original image and a stylized version of it. From these pairs, the model learns which parts of an image must stay consistent when the style changes, so it can preserve content for any style without degrading the quality of the style itself.
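
To make "training on paired images" concrete, here is a minimal sketch of one training step on a single (source, stylized) pair. It is not the authors' implementation. It assumes two things the summary does not state: a flow-matching objective (noisy sample x_t = (1 - t) * x0 + t * noise, with target velocity noise - x0) and in-context conditioning, where the clean source image's tokens are placed in the same sequence as the noisy stylized tokens so the transformer can attend to the original content. The names `patchify`, `make_toy_attention` and `paired_training_loss` are hypothetical, and a single untrained attention layer stands in for a real Diffusion Transformer.

```python
import numpy as np

def patchify(img: np.ndarray, patch: int) -> np.ndarray:
    """Split an (H, W, C) image into a (num_patches, patch*patch*C) token matrix."""
    h, w, c = img.shape
    assert h % patch == 0 and w % patch == 0, "image size must be divisible by patch"
    tokens = img.reshape(h // patch, patch, w // patch, patch, c)
    tokens = tokens.transpose(0, 2, 1, 3, 4)  # group pixels by patch
    return tokens.reshape(-1, patch * patch * c)

def make_toy_attention(dim: int, rng: np.random.Generator):
    """Hypothetical stand-in for a Diffusion Transformer: one self-attention layer."""
    wq, wk, wv = (rng.standard_normal((dim, dim)) / np.sqrt(dim) for _ in range(3))

    def model(seq: np.ndarray, t: float) -> np.ndarray:
        # The timestep t is ignored in this toy; a real DiT would embed it.
        q, k, v = seq @ wq, seq @ wk, seq @ wv
        scores = q @ k.T / np.sqrt(dim)
        scores -= scores.max(axis=1, keepdims=True)  # numerically stable softmax
        attn = np.exp(scores)
        attn /= attn.sum(axis=1, keepdims=True)
        return attn @ v  # every token, including target tokens, can read the source tokens

    return model

def paired_training_loss(source, stylized, model, rng, patch=4) -> float:
    """Assumed flow-matching loss for one (source, stylized) training pair."""
    cond = patchify(source, patch)    # clean tokens of the original image
    x0 = patchify(stylized, patch)    # clean tokens of the stylized target
    noise = rng.standard_normal(x0.shape)
    t = rng.uniform()
    x_t = (1.0 - t) * x0 + t * noise  # point on the straight path from data to noise
    target_velocity = noise - x0      # derivative of x_t with respect to t
    seq = np.concatenate([cond, x_t], axis=0)  # in-context sequence: [condition | target]
    pred = model(seq, t)[cond.shape[0]:]       # keep predictions for target tokens only
    return float(np.mean((pred - target_velocity) ** 2))

rng = np.random.default_rng(0)
source = rng.uniform(size=(16, 16, 3))    # placeholder for an original photo
stylized = rng.uniform(size=(16, 16, 3))  # placeholder for its stylized version
model = make_toy_attention(4 * 4 * 3, rng)
loss = paired_training_loss(source, stylized, model, rng)
print(f"loss = {loss:.4f}")
```

Only the target tokens contribute to the loss, so the source tokens act purely as context: the model is rewarded for producing the stylized image while it has the original content in view, which is one plausible way pairs can teach content consistency.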

Why does it matter?

This matters because it lets image tools apply many different styles without ruining the original content. That is useful for art, design, and other creative work, and it makes stylization tools more reliable and flexible for everyone.

Abstract

OmniConsistency uses large-scale Diffusion Transformers to improve stylization consistency and generalization in image-to-image pipelines without degrading the style.