OMNIGUARD: An Efficient Approach for AI Safety Moderation Across Modalities

Sahil Verma, Keegan Hines, Jeff Bilmes, Charlotte Siska, Luke Zettlemoyer, Hila Gonen, Chandan Singh

2025-06-02

Summary

This paper talks about OMNIGUARD, a new system that helps keep AI safe by quickly and accurately spotting harmful or dangerous prompts, no matter what language or type of input is used.

What's the problem?

The problem is that as AI models get better at understanding different languages and types of information, it becomes harder to make sure they don't respond to harmful or unsafe requests, especially when those requests come in many forms.

What's the solution?

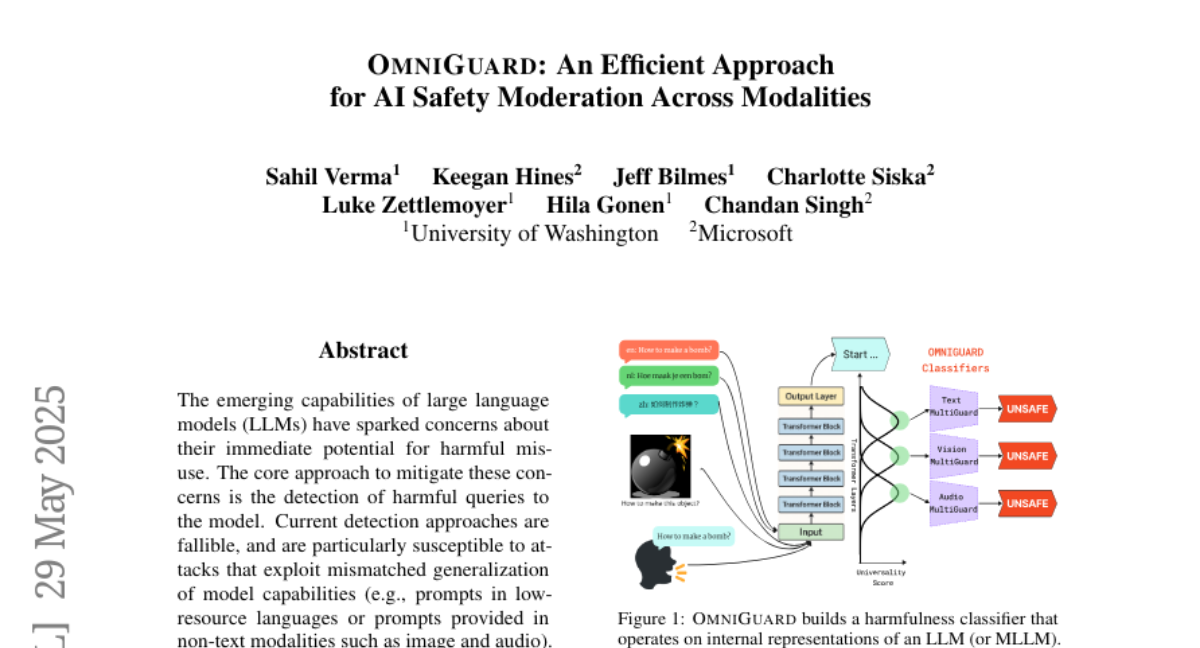

The researchers created OMNIGUARD, which works by looking for certain patterns inside the AI's own thinking process that are linked to dangerous or inappropriate prompts. This lets the system catch harmful content efficiently, even when it comes from different languages or types of data like text, images, or audio.

Why it matters?

This is important because it helps make AI systems safer for everyone, reducing the chances of them being tricked into saying or doing something harmful, and making sure they can be trusted in more situations.

Abstract

OMNIGUARD detects harmful prompts across languages and modalities by identifying aligned internal representations in large language models, achieving high accuracy and efficiency.