On Large Multimodal Models as Open-World Image Classifiers

Alessandro Conti, Massimiliano Mancini, Enrico Fini, Yiming Wang, Paolo Rota, Elisa Ricci

2025-03-31

Summary

This paper evaluates how well Large Multimodal Models (LMMs) classify images when they are not given a predefined list of candidate categories and must name the class in free-form natural language.

What's the problem?

Most studies of LMM image classification assume a closed-world setting, checking only whether the model can pick the right answer from a predefined list of categories. Real-world use rarely comes with such a list.

What's the solution?

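The researchers formalize classification in an 'open world' setting, where the model answers without any category list, and introduce an evaluation protocol with several metrics that measure how well each predicted class aligns with the ground truth. They use it to evaluate 13 models across 10 benchmarks covering prototypical, non-prototypical, fine-grained, and very fine-grained classes.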

Why does it matter?

This work matters because it shows where LMMs still struggle when no category list is given, in particular with choosing the right level of granularity and with fine-grained distinctions, and it shows that tailored prompting and reasoning can reduce these errors.

Abstract

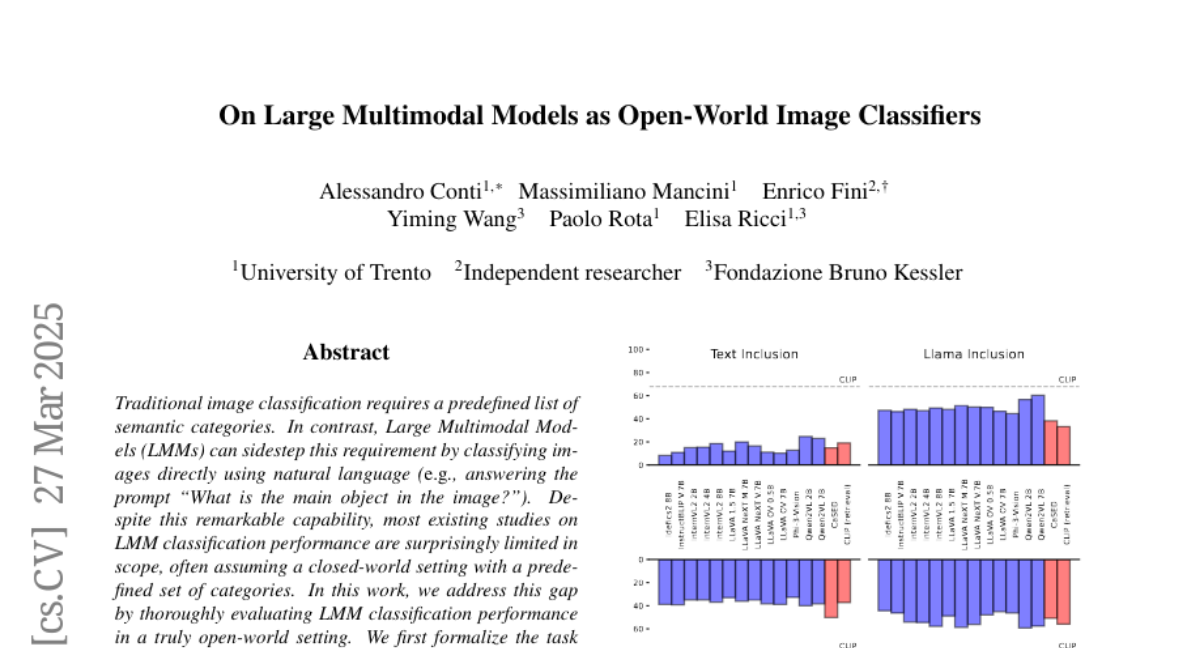

Traditional image classification requires a predefined list of semantic categories. In contrast, Large Multimodal Models (LMMs) can sidestep this requirement by classifying images directly using natural language (e.g., answering the prompt "What is the main object in the image?"). Despite this remarkable capability, most existing studies on LMM classification performance are surprisingly limited in scope, often assuming a closed-world setting with a predefined set of categories. In this work, we address this gap by thoroughly evaluating LMM classification performance in a truly open-world setting. We first formalize the task and introduce an evaluation protocol, defining various metrics to assess the alignment between predicted and ground truth classes. We then evaluate 13 models across 10 benchmarks, encompassing prototypical, non-prototypical, fine-grained, and very fine-grained classes, demonstrating the challenges LMMs face in this task. Further analyses based on the proposed metrics reveal the types of errors LMMs make, highlighting challenges related to granularity and fine-grained capabilities, showing how tailored prompting and reasoning can alleviate them.
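To make the idea of "alignment between predicted and ground truth classes" concrete, here is a minimal sketch of one possible alignment measure: checking whether the ground-truth class name appears in the model's free-form answer. The abstract does not specify the paper's metrics, so this is an illustrative assumption rather than the authors' protocol, and the function names `normalize`, `text_inclusion`, and `inclusion_rate` are hypothetical.

```python
import re


def normalize(text: str) -> str:
    """Lowercase, turn every non-alphanumeric run into a space, collapse spaces."""
    return " ".join(re.sub(r"[^a-z0-9]+", " ", text.lower()).split())


def text_inclusion(prediction: str, ground_truth: str) -> bool:
    """Return True if the ground-truth label occurs in the prediction.

    Hypothetical metric (an assumption, not taken from the paper): the label
    must match as a whole-word span, so "cat" does not match "catalog".
    """
    label = normalize(ground_truth)
    if not label:
        return False
    # Pad with spaces so that containment only succeeds on word boundaries.
    return f" {label} " in f" {normalize(prediction)} "


def inclusion_rate(predictions, ground_truths) -> float:
    """Fraction of predictions that contain their ground-truth label."""
    if len(predictions) != len(ground_truths):
        raise ValueError("predictions and ground_truths must have equal length")
    if not predictions:
        return 0.0
    hits = sum(text_inclusion(p, g) for p, g in zip(predictions, ground_truths))
    return hits / len(predictions)
```

Under this sketch, the answer "A dog on the grass" for the label "golden retriever" counts as a miss even though it is correct at a coarser level. A strict string match cannot tell such a granularity mismatch apart from a genuinely wrong answer, which is one reason an open-world protocol would need more than one metric.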