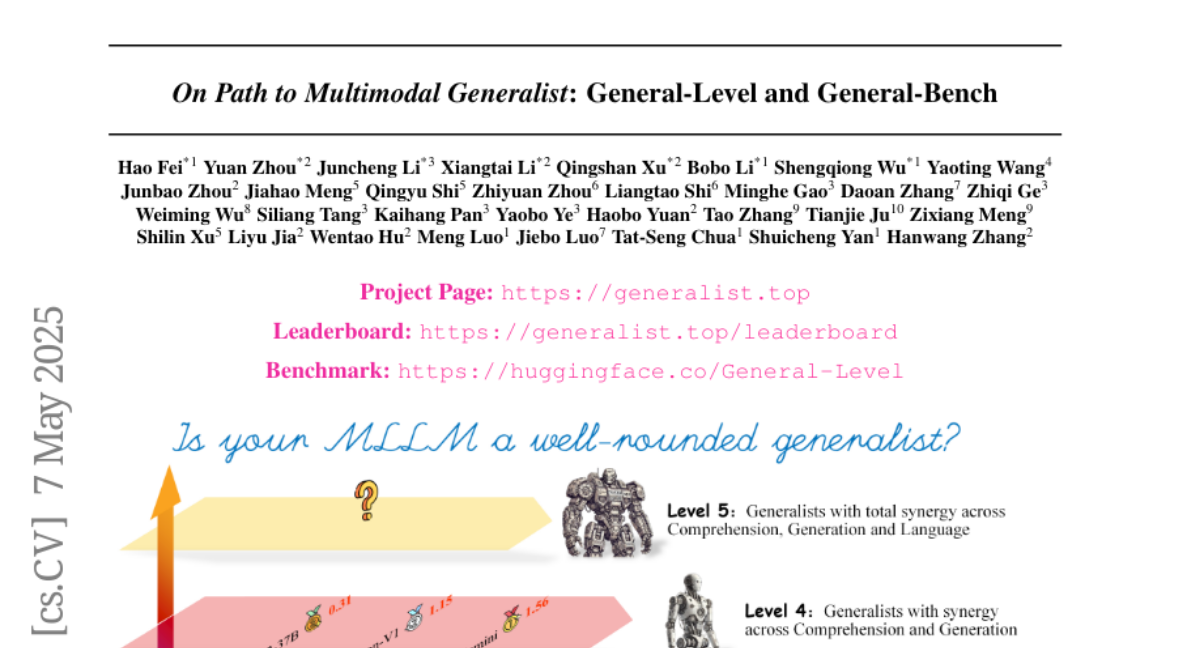

On Path to Multimodal Generalist: General-Level and General-Bench

Hao Fei, Yuan Zhou, Juncheng Li, Xiangtai Li, Qingshan Xu, Bobo Li, Shengqiong Wu, Yaoting Wang, Junbao Zhou, Jiahao Meng, Qingyu Shi, Zhiyuan Zhou, Liangtao Shi, Minghe Gao, Daoan Zhang, Zhiqi Ge, Weiming Wu, Siliang Tang, Kaihang Pan, Yaobo Ye, Haobo Yuan, Tao Zhang

2025-05-09

Summary

This paper introduces a new way to evaluate how well AI models, especially multimodal large language models, handle many types of information at once, such as images and text. The researchers propose a framework called General-Level that checks whether these models are true generalists, meaning they can both understand and generate different types of content.

What's the problem?

The problem is that as AI models get better at dealing with multiple kinds of data, it's hard to know if they're actually good at all of them or just a few. There hasn't been a clear, fair way to measure if an AI is really a 'generalist' that can handle a wide range of tasks and information types at the same time.

What's the solution?

The researchers created the General-Level evaluation framework, which uses a criterion called Synergy to test whether AI models are consistently capable at both comprehension (understanding content) and generation (creating it). This setup shows whether a model's abilities are balanced, rather than strong in one area and weak in others.
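To make the idea of "balanced" abilities concrete, here is a minimal sketch of one way to reward balance between two scores. It assumes a hypothetical `balance_score` that takes the harmonic mean of a comprehension score and a generation score, both on a 0 to 100 scale. This is an illustration only, not the paper's definition of Synergy, and the numbers are made up for the example.

```python
from statistics import harmonic_mean


def balance_score(comprehension: float, generation: float) -> float:
    """Hypothetical balance score (not the paper's Synergy formula).

    The harmonic mean is pulled toward the lower of the two inputs, so a
    model that is strong in one area and weak in the other scores lower
    than a model with the same average that is even across both.
    """
    if comprehension <= 0 or generation <= 0:
        # A model with no ability on one side gets no credit at all.
        return 0.0
    return harmonic_mean([comprehension, generation])


# Two made-up models with the same plain average (70) but different balance.
balanced = balance_score(70.0, 70.0)
lopsided = balance_score(95.0, 45.0)

print(f"balanced model: {balanced:.2f}")
print(f"lopsided model: {lopsided:.2f}")
```

The two example models have the same simple average, but the lopsided one ends up with the lower score, which is the kind of behavior a balance-aware evaluation is looking for.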

Why does it matter?

This matters because as AI becomes more involved in everyday life, we need models that can handle all sorts of information, not just one kind. By having a better way to test and compare these generalist models, researchers can build smarter, more reliable AI that can help people in more ways.

Abstract

A General-Level evaluation framework is introduced to assess multimodal generalist performance in LLMs, using Synergy to measure consistent capabilities across comprehension and generation.