On the Limitations of Vision-Language Models in Understanding Image Transforms

Ahmad Mustafa Anis, Hasnain Ali, Saquib Sarfraz

2025-03-14

Summary

This paper explores how well AI models that understand both images and text (Vision Language Models or VLMs) can recognize and understand simple changes made to images.

What's the problem?

VLMs are good at many things, but they often struggle with understanding basic image transformations, such as rotations or color adjustments. This limits their ability to fully 'understand' images.

What's the solution?

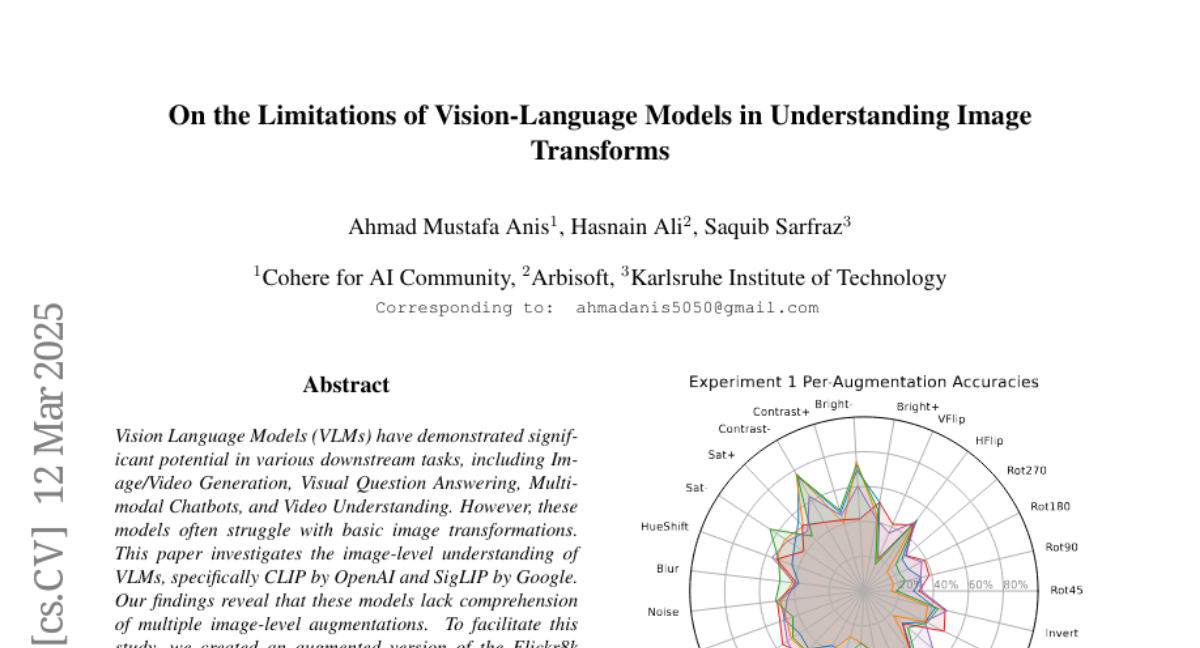

The researchers tested VLMs like CLIP and SigLIP on their ability to understand various image-level changes. They created an altered version of the Flickr8k dataset, adding descriptions of the changes made to each image, and then tested how well the models could understand these changes and how this affects tasks like image editing.

Why it matters?

This work matters because it highlights the limitations of current VLMs in understanding fundamental image properties, which is important for improving their performance in more complex tasks and applications.

Abstract

Vision Language Models (VLMs) have demonstrated significant potential in various downstream tasks, including Image/Video Generation, Visual Question Answering, Multimodal Chatbots, and Video Understanding. However, these models often struggle with basic image transformations. This paper investigates the image-level understanding of VLMs, specifically CLIP by OpenAI and SigLIP by Google. Our findings reveal that these models lack comprehension of multiple image-level augmentations. To facilitate this study, we created an augmented version of the Flickr8k dataset, pairing each image with a detailed description of the applied transformation. We further explore how this deficiency impacts downstream tasks, particularly in image editing, and evaluate the performance of state-of-the-art Image2Image models on simple transformations.