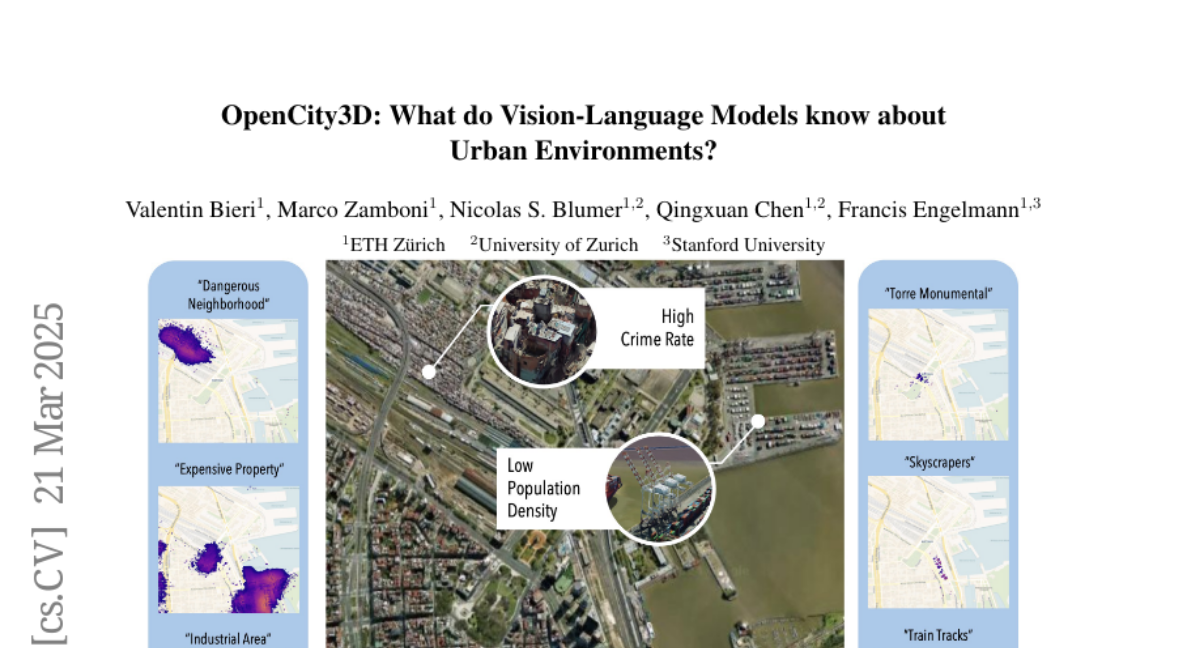

OpenCity3D: What do Vision-Language Models know about Urban Environments?

Valentin Bieri, Marco Zamboni, Nicolas S. Blumer, Qingxuan Chen, Francis Engelmann

2025-03-26

Summary

This paper explores how well vision-language models (VLMs), AI models that understand both images and language, can analyze cities using 3D reconstructions built from multi-view aerial imagery.

What's the problem?

VLMs have mostly been applied to indoor spaces and autonomous driving, and mainly to low-level tasks such as segmentation. They have rarely been used to study cities at urban scale.

What's the solution?

The researchers propose an approach called OpenCity3D that applies VLMs to 3D reconstructions of cities to estimate high-level properties such as population density, building age, property prices, crime rates, and noise pollution.

Why does it matter?

This work can help city planners, policymakers, and environmental groups make better-informed decisions by giving them language-driven, data-based estimates about urban environments.

Abstract

Vision-language models (VLMs) show great promise for 3D scene understanding but are mainly applied to indoor spaces or autonomous driving, focusing on low-level tasks like segmentation. This work expands their use to urban-scale environments by leveraging 3D reconstructions from multi-view aerial imagery. We propose OpenCity3D, an approach that addresses high-level tasks, such as population density estimation, building age classification, property price prediction, crime rate assessment, and noise pollution evaluation. Our findings highlight OpenCity3D's impressive zero-shot and few-shot capabilities, showcasing adaptability to new contexts. This research establishes a new paradigm for language-driven urban analytics, enabling applications in planning, policy, and environmental monitoring. See our project page: opencity3d.github.io
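To make the idea of zero-shot, language-driven querying more concrete, here is a minimal sketch of one common way such queries are scored in general: comparing a per-building feature vector against the embeddings of two contrasting text prompts. This is an illustration under stated assumptions, not the authors' pipeline. The function names, the toy three-dimensional vectors, and the "old" versus "new" prompt pair are all hypothetical; a real system would obtain the features and prompt embeddings from a VLM.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def zero_shot_score(feature, positive_prompt, negative_prompt):
    """Score a feature by how much closer it is to the positive prompt
    than to the negative one. No training data is involved."""
    return cosine(feature, positive_prompt) - cosine(feature, negative_prompt)


def rank_buildings(features, positive_prompt, negative_prompt):
    """Return building ids sorted from highest to lowest score."""
    scores = {
        name: zero_shot_score(vec, positive_prompt, negative_prompt)
        for name, vec in features.items()
    }
    return sorted(scores, key=scores.get, reverse=True)


# Toy stand-ins (assumptions): in practice these would be VLM embeddings.
building_features = {
    "a": [0.9, 0.1, 0.0],
    "b": [0.1, 0.9, 0.0],
    "c": [0.5, 0.5, 0.1],
}
old_prompt = [1.0, 0.0, 0.0]  # hypothetical embedding of "an old building"
new_prompt = [0.0, 1.0, 0.0]  # hypothetical embedding of "a new building"

ranking = rank_buildings(building_features, old_prompt, new_prompt)
print(ranking)
```

The resulting ranking could then be compared against ground-truth attributes (for example, recorded construction years) to judge how well the zero-shot scores track the real quantity.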