Optimal Stepsize for Diffusion Sampling

Jianning Pei, Han Hu, Shuyang Gu

2025-03-28

Summary

This paper presents a way to make diffusion-based image generation faster by finding the best sequence of step sizes (the stepsize schedule) that the model follows while turning noise into an image.

What's the problem?

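Diffusion models create an image through many small denoising steps, which makes sampling slow. Poorly chosen step sizes make this worse: fewer, badly placed steps waste computation or lose detail.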

What's the solution?

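The researchers introduce Optimal Stepsize Distillation, which uses dynamic programming to extract step-size schedules from a reference sampling trajectory that uses many steps. With these schedules, text-to-image generation runs about 10x faster while keeping 99.4% of its GenEval performance.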

Why does it matter?

Faster sampling makes high-quality image generation cheaper and more practical. Because the distilled schedules transfer across model architectures, ODE solvers, and noise schedules, the speedup is not tied to a single model.

Abstract

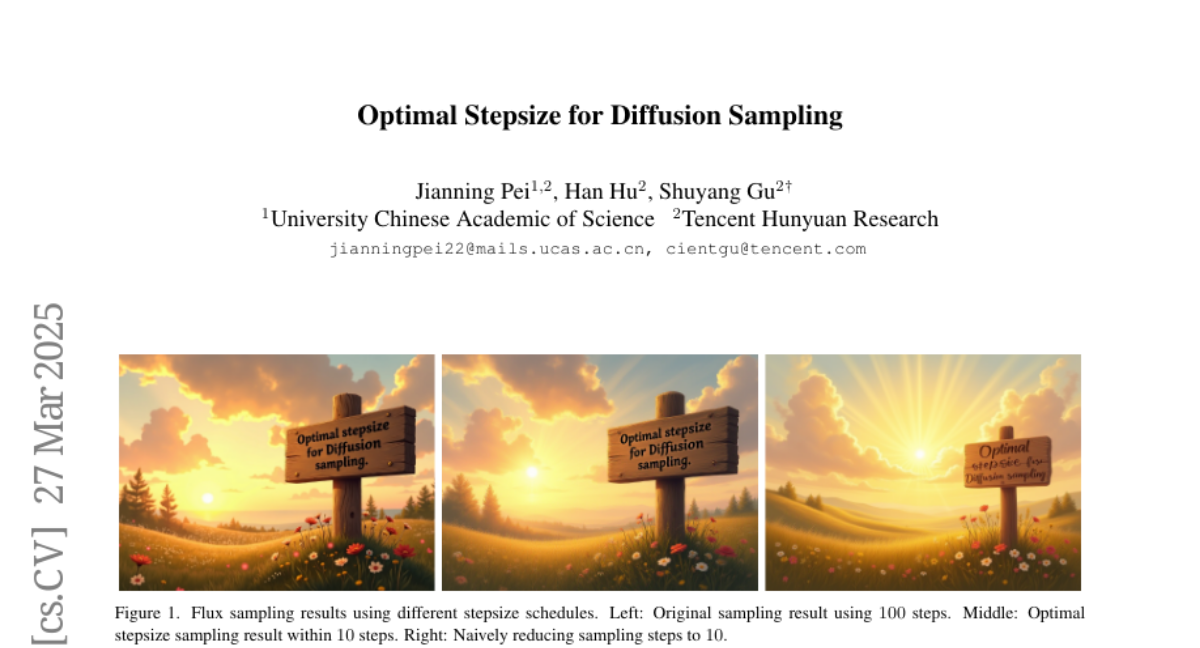

Diffusion models achieve remarkable generation quality but suffer from computationally intensive sampling due to suboptimal step discretization. While existing works focus on optimizing denoising directions, we address the principled design of stepsize schedules. This paper proposes Optimal Stepsize Distillation, a dynamic programming framework that extracts theoretically optimal schedules by distilling knowledge from reference trajectories. By reformulating stepsize optimization as recursive error minimization, our method guarantees global discretization bounds through optimal substructure exploitation. Crucially, the distilled schedules demonstrate strong robustness across architectures, ODE solvers, and noise schedules. Experiments show 10x accelerated text-to-image generation while preserving 99.4% performance on GenEval. Our code is available at https://github.com/bebebe666/OptimalSteps.
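To make the "recursive error minimization with optimal substructure" idea concrete, here is a minimal sketch under simplifying assumptions. It is not the authors' algorithm (see the linked repository for that). It uses a toy ODE `dx/dt = x*cos(t)` as a hypothetical stand-in for a diffusion model's sampling ODE, treats a fine grid of exact states as the "reference trajectory", and picks `num_steps` Euler steps from that grid by dynamic programming. The cost of a jump is the one-step Euler error measured from the reference state. Summing these local errors is a simplification: the paper's formulation targets the error of the student's own trajectory. All names (`f`, `exact`, `one_step_error`, `optimal_schedule`) are illustrative.

```python
import math

def f(x, t):
    # Hypothetical toy velocity field standing in for a diffusion model's ODE.
    return x * math.cos(t)

def exact(x0, t0, t):
    # Closed-form solution of dx/dt = x*cos(t): x(t) = x0 * exp(sin t - sin t0).
    return x0 * math.exp(math.sin(t) - math.sin(t0))

def one_step_error(grid, ref, i, j):
    # Error of a single Euler step from reference point i to grid point j.
    pred = ref[i] + (grid[j] - grid[i]) * f(ref[i], grid[i])
    return abs(pred - ref[j])

def optimal_schedule(grid, ref, num_steps):
    """Choose num_steps jumps from grid[0] to grid[-1] minimizing summed local error.

    best[k][j] is the minimal total error of reaching grid point j in exactly k
    steps; it depends only on best[k-1][i] for i < j (optimal substructure).
    """
    n = len(grid) - 1
    if not 1 <= num_steps <= n:
        raise ValueError("num_steps must be between 1 and len(grid) - 1")
    inf = float("inf")
    best = [[inf] * (n + 1) for _ in range(num_steps + 1)]
    parent = [[-1] * (n + 1) for _ in range(num_steps + 1)]
    best[0][0] = 0.0
    for k in range(1, num_steps + 1):
        for j in range(1, n + 1):
            for i in range(j):
                if best[k - 1][i] == inf:
                    continue
                cand = best[k - 1][i] + one_step_error(grid, ref, i, j)
                if cand < best[k][j]:
                    best[k][j] = cand
                    parent[k][j] = i
    # Backtrack from the last grid point to recover the chosen timesteps.
    path = [n]
    for k in range(num_steps, 0, -1):
        path.append(parent[k][path[-1]])
    path.reverse()
    return path, best[num_steps][n]

if __name__ == "__main__":
    grid = [i * 0.05 for i in range(61)]          # fine "teacher" grid, t in [0, 3]
    ref = [exact(1.0, 0.0, t) for t in grid]      # reference trajectory
    path, err = optimal_schedule(grid, ref, num_steps=6)
    uniform = list(range(0, 61, 10))              # evenly spaced 6-step baseline
    uniform_err = sum(one_step_error(grid, ref, i, j)
                      for i, j in zip(uniform, uniform[1:]))
    print("DP schedule:", [round(grid[i], 2) for i in path], "error:", err)
    print("uniform schedule error:", uniform_err)
```

Because the uniform schedule is one of the candidates the DP considers, the DP schedule's summed error can never exceed it. The schedule is computed once offline and then reused at sampling time, which is what lets a cheap few-step sampler follow a many-step reference closely.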