Optimizing LLMs for Italian: Reducing Token Fertility and Enhancing Efficiency Through Vocabulary Adaptation

Luca Moroni, Giovanni Puccetti, Pere-Lluis Huguet Cabot, Andrei Stefan Bejgu, Edoardo Barba, Alessio Miaschi, Felice Dell'Orletta, Andrea Esuli, Roberto Navigli

2025-04-28

Summary

This paper talks about SAVA, a new method that helps large language models originally made for English work better and more efficiently when used with Italian.

What's the problem?

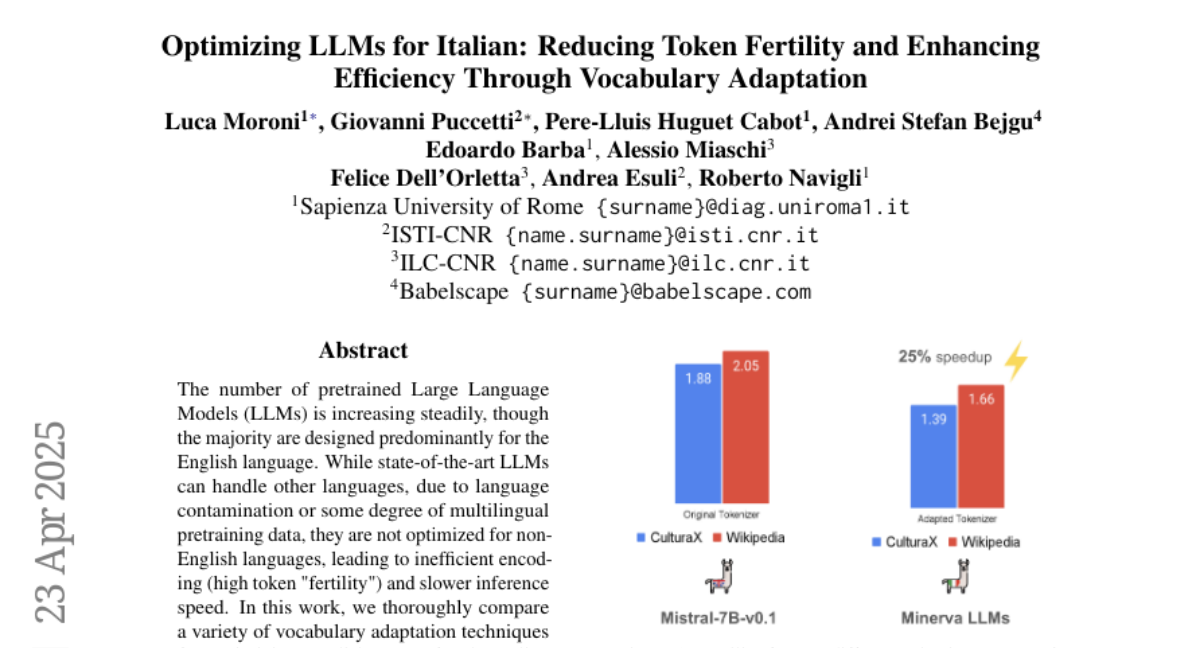

The problem is that when you use English-based language models for Italian, the models often have to break up Italian words into lots of smaller pieces, called tokens, which makes them slower and less accurate. This is because the original model's vocabulary isn't designed for Italian.

What's the solution?

The researchers created SAVA, which is a way to adapt the model's vocabulary using a special neural mapping technique. This lets the model handle Italian words in bigger, more natural chunks, so it doesn't have to split them up as much. As a result, the model becomes faster and works better on Italian tasks, and it only needs a little extra training to get these benefits.

Why it matters?

This matters because it makes AI tools more useful for Italian speakers and helps ensure that language technology works well across different languages, not just English.

Abstract

SAVA, a novel vocabulary adaptation technique using neural mapping, optimizes English LLMs for Italian, reducing token fertility and improving performance across various tasks with minimal additional training.