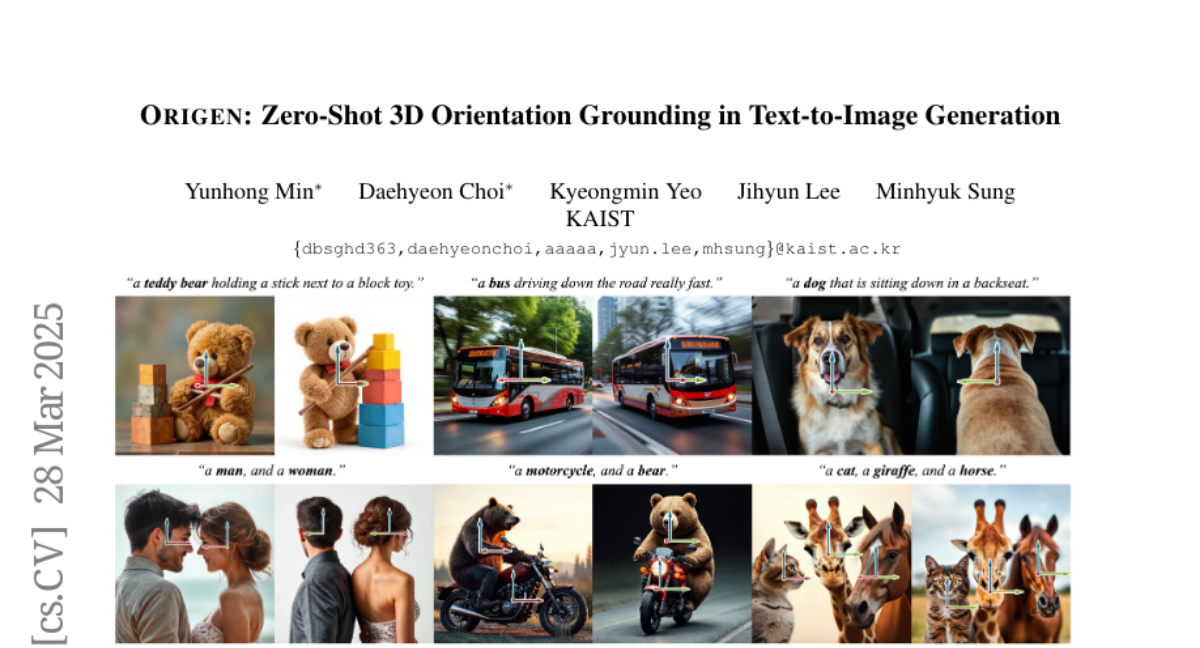

ORIGEN: Zero-Shot 3D Orientation Grounding in Text-to-Image Generation

Yunhong Min, Daehyeon Choi, Kyeongmin Yeo, Jihyun Lee, Minhyuk Sung

2025-03-31

Summary

This paper is about a new way to create images from text that allows you to control the 3D orientation of objects in the image, even if the AI hasn't been specifically trained to do that.

What's the problem?

Existing AI image generators struggle to control the 3D orientation of objects, making it difficult to create realistic or specific scenes.

What's the solution?

The researchers developed a technique called ORIGEN that uses a reward system and a special type of sampling to guide the AI in creating images with the desired 3D orientations.

Why it matters?

This work matters because it allows for more precise control over image generation, opening up new possibilities for creating realistic and customized images.

Abstract

We introduce ORIGEN, the first zero-shot method for 3D orientation grounding in text-to-image generation across multiple objects and diverse categories. While previous work on spatial grounding in image generation has mainly focused on 2D positioning, it lacks control over 3D orientation. To address this, we propose a reward-guided sampling approach using a pretrained discriminative model for 3D orientation estimation and a one-step text-to-image generative flow model. While gradient-ascent-based optimization is a natural choice for reward-based guidance, it struggles to maintain image realism. Instead, we adopt a sampling-based approach using Langevin dynamics, which extends gradient ascent by simply injecting random noise--requiring just a single additional line of code. Additionally, we introduce adaptive time rescaling based on the reward function to accelerate convergence. Our experiments show that ORIGEN outperforms both training-based and test-time guidance methods across quantitative metrics and user studies.