Outlier-Safe Pre-Training for Robust 4-Bit Quantization of Large Language Models

Jungwoo Park, Taewhoo Lee, Chanwoong Yoon, Hyeon Hwang, Jaewoo Kang

2025-06-26

Summary

This paper introduces Outlier-Safe Pre-Training, a method that makes large language models easier to compress into low-precision formats for faster inference by controlling extreme activation values during training.

What's the problem?

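The problem is that when a language model's numbers are stored at very low precision, such as 4 bits, extreme values called outliers stretch the range that has to be represented. The few available levels are then spread too thinly, so ordinary values are rounded coarsely and the model becomes less accurate and harder to run efficiently.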
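To make the effect concrete, here is a minimal sketch of symmetric 4-bit quantization with a single scale for the whole tensor. It is an illustration under stated assumptions, not the paper's setup: the function names, the Gaussian test data, and the outlier magnitude of 100 are all hypothetical choices made for this example.

```python
import random

def quantize_int4_absmax(values):
    """Round-trip values through symmetric 4-bit levels (integers in [-7, 7])."""
    # One scale for the whole tensor, set by the largest magnitude present.
    scale = max(abs(v) for v in values) / 7
    return [round(v / scale) * scale for v in values]

def mean_abs_error(values, restored):
    """Average absolute difference between original and dequantized values."""
    return sum(abs(a - b) for a, b in zip(values, restored)) / len(values)

random.seed(0)
# Hypothetical "activations": 1024 samples from a standard normal distribution.
normal = [random.gauss(0.0, 1.0) for _ in range(1024)]
# Same data, but the last entry is replaced by one extreme value (assumed magnitude).
with_outlier = normal[:-1] + [100.0]

err_normal = mean_abs_error(normal, quantize_int4_absmax(normal))
# Measure the error on the ordinary values only, excluding the outlier itself.
err_outlier = mean_abs_error(with_outlier[:-1], quantize_int4_absmax(with_outlier)[:-1])

print(f"error without outlier: {err_normal:.3f}")
print(f"error with outlier:    {err_outlier:.3f}")
```

In this sketch a single outlier enlarges the scale so much that the ordinary values collapse onto the zero level, which is why their reconstruction error rises sharply.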

What's the solution?

The researchers developed training techniques that prevent these extreme outliers from forming during pre-training, so the resulting model can be quantized to formats as small as 4-bit with far less loss in performance.

Why does it matter?

This matters because it allows powerful language models to run much faster and use less memory on regular devices like phones or laptops while still being accurate, making advanced AI tools more accessible to everyone.

Abstract

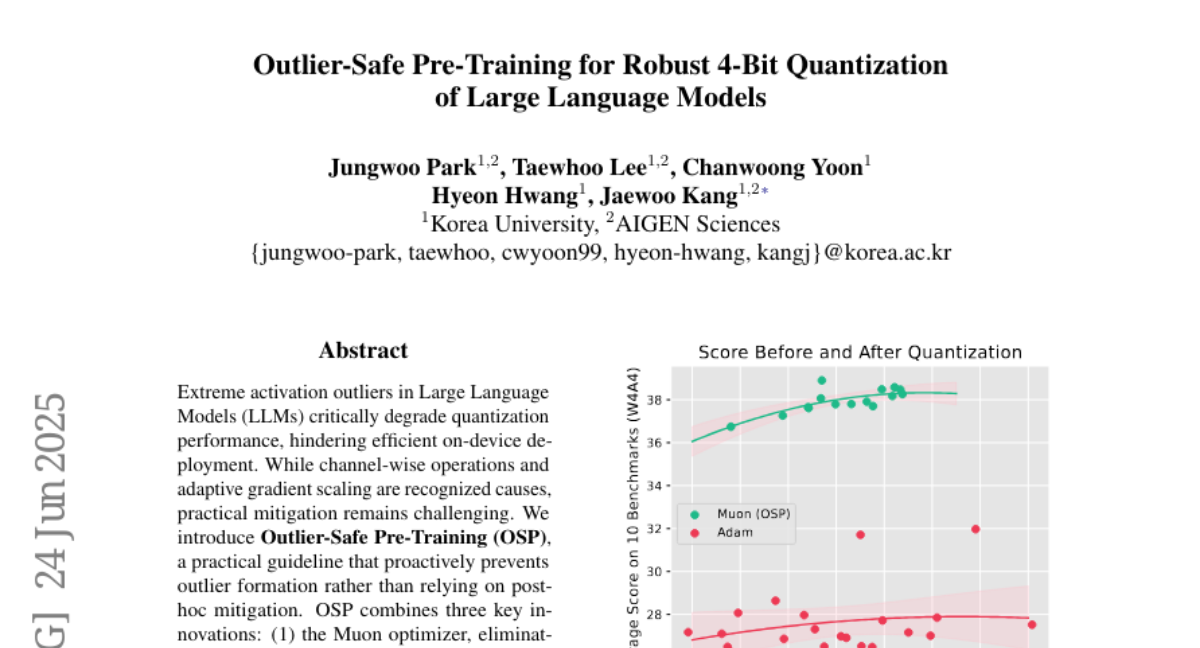

Outlier-Safe Pre-Training improves large language model quantization performance by preventing extreme activation outliers through innovative training techniques.