Overcoming Vocabulary Mismatch: Vocabulary-agnostic Teacher Guided Language Modeling

Haebin Shin, Lei Ji, Xiao Liu, Yeyun Gong

2025-03-26

Summary

This paper is about helping smaller AI language models learn from larger ones, even if they don't use the same words.

What's the problem?

Smaller AI models can learn a lot from larger, more powerful models. However, if the models use different sets of words, it can be difficult for the smaller model to understand and learn from the larger one.

What's the solution?

The researchers developed a new method that helps the smaller model understand the larger model, even if they use different vocabularies. This allows the smaller model to learn more effectively and improve its performance.

Why it matters?

This work matters because it can make it easier to train smaller, more efficient AI language models by leveraging the knowledge of larger, more powerful models.

Abstract

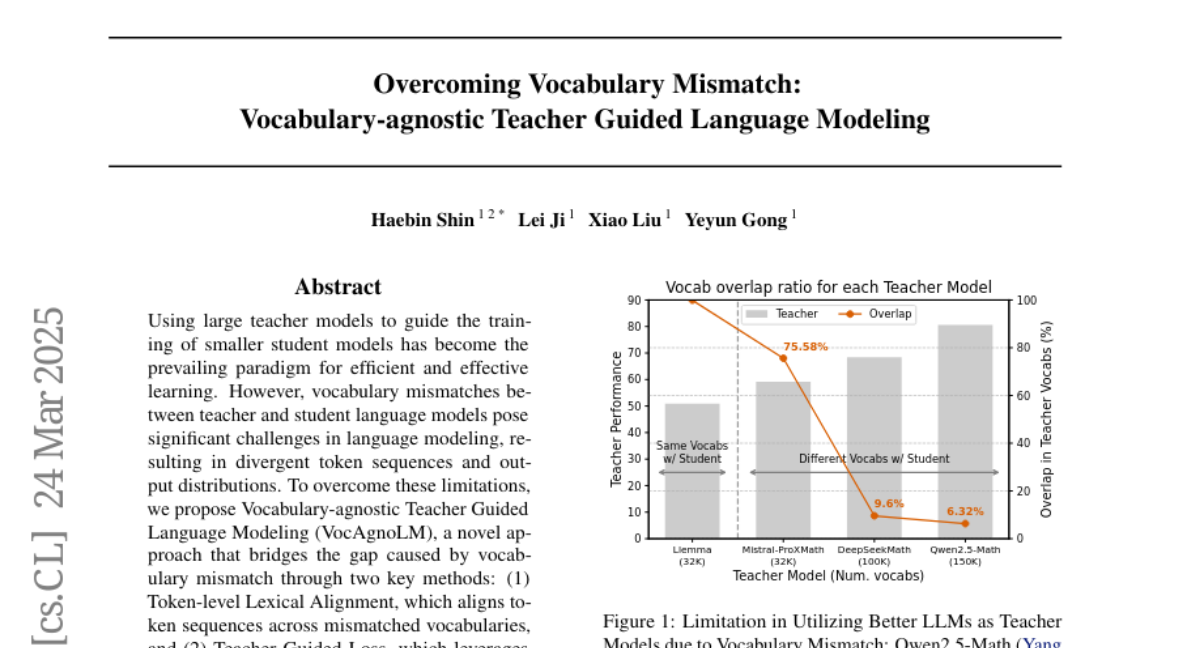

Using large teacher models to guide the training of smaller student models has become the prevailing paradigm for efficient and effective learning. However, vocabulary mismatches between teacher and student language models pose significant challenges in language modeling, resulting in divergent token sequences and output distributions. To overcome these limitations, we propose Vocabulary-agnostic Teacher Guided Language Modeling (VocAgnoLM), a novel approach that bridges the gap caused by vocabulary mismatch through two key methods: (1) Token-level Lexical Alignment, which aligns token sequences across mismatched vocabularies, and (2) Teacher Guided Loss, which leverages the loss of teacher model to guide effective student training. We demonstrate its effectiveness in language modeling with 1B student model using various 7B teacher models with different vocabularies. Notably, with Qwen2.5-Math-Instruct, a teacher model sharing only about 6% of its vocabulary with TinyLlama, VocAgnoLM achieves a 46% performance improvement compared to naive continual pretraining. Furthermore, we demonstrate that VocAgnoLM consistently benefits from stronger teacher models, providing a robust solution to vocabulary mismatches in language modeling.