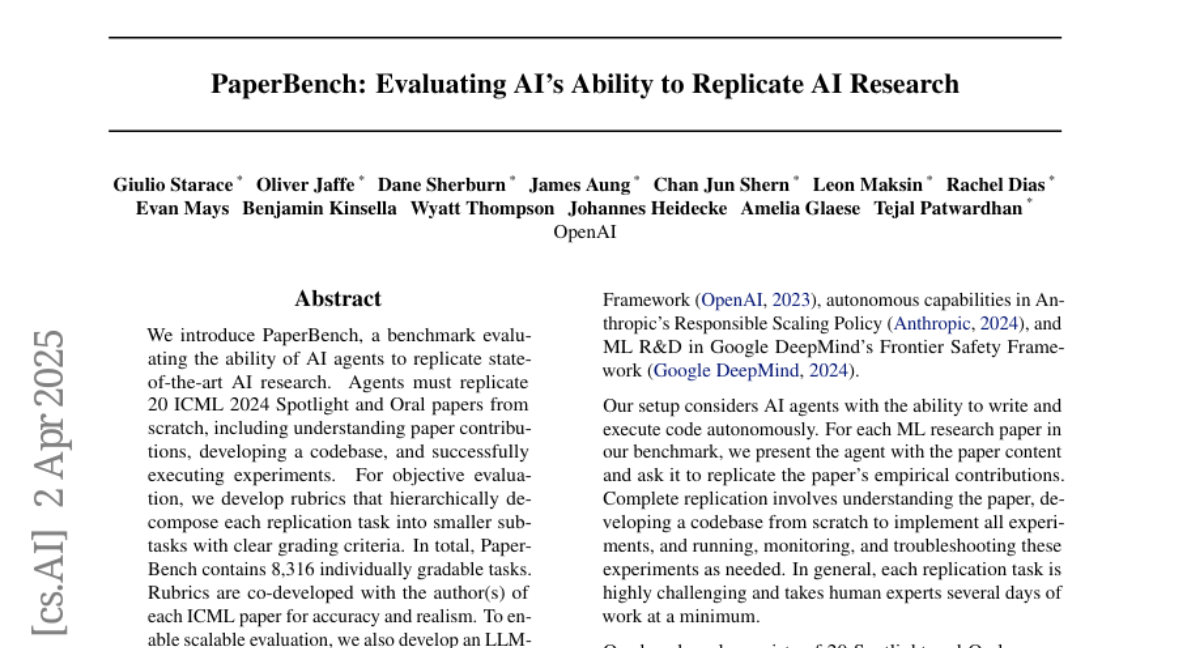

PaperBench: Evaluating AI's Ability to Replicate AI Research

Giulio Starace, Oliver Jaffe, Dane Sherburn, James Aung, Jun Shern Chan, Leon Maksin, Rachel Dias, Evan Mays, Benjamin Kinsella, Wyatt Thompson, Johannes Heidecke, Amelia Glaese, Tejal Patwardhan

2025-04-03

Summary

This paper introduces PaperBench, a benchmark that tests whether AI agents can replicate AI research projects: understanding the paper, building the software, and running the experiments.

What's the problem?

We need to know if AI can do more than just talk – can it actually understand and reproduce complex AI research?

What's the solution?

The researchers created PaperBench, a benchmark in which AI agents must replicate 20 real research papers from scratch. They also developed an LLM-based judge that automatically grades each attempt against a detailed rubric.

Why does it matter?

This work matters because it helps us understand how capable AI is at doing actual AI engineering and research, and it provides a way to track progress in this area.

Abstract

We introduce PaperBench, a benchmark evaluating the ability of AI agents to replicate state-of-the-art AI research. Agents must replicate 20 ICML 2024 Spotlight and Oral papers from scratch, including understanding paper contributions, developing a codebase, and successfully executing experiments. For objective evaluation, we develop rubrics that hierarchically decompose each replication task into smaller sub-tasks with clear grading criteria. In total, PaperBench contains 8,316 individually gradable tasks. Rubrics are co-developed with the author(s) of each ICML paper for accuracy and realism. To enable scalable evaluation, we also develop an LLM-based judge to automatically grade replication attempts against rubrics, and assess our judge's performance by creating a separate benchmark for judges. We evaluate several frontier models on PaperBench, finding that the best-performing tested agent, Claude 3.5 Sonnet (New) with open-source scaffolding, achieves an average replication score of 21.0%. Finally, we recruit top ML PhDs to attempt a subset of PaperBench, finding that models do not yet outperform the human baseline. We open-source our code (https://github.com/openai/preparedness) to facilitate future research in understanding the AI engineering capabilities of AI agents.
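
To make the idea of a hierarchical rubric concrete, here is a minimal sketch of how a replication score could be computed from a tree of sub-tasks. The abstract does not specify the scoring rule, so this sketch assumes that leaf tasks are graded pass/fail and that each parent's score is the weighted average of its children. The class `RubricNode`, the `weight` field, the helper `count_leaves`, and the example task names are all hypothetical illustrations, not the benchmark's actual code or data.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class RubricNode:
    """One node in a hypothetical rubric tree (illustrative only)."""
    name: str
    weight: float = 1.0            # assumed: relative importance among siblings
    passed: Optional[bool] = None  # assumed: leaves get a binary grade from a judge
    children: List["RubricNode"] = field(default_factory=list)

    def score(self) -> float:
        # Leaf: binary pass/fail grade mapped to 1.0 or 0.0.
        if not self.children:
            return 1.0 if self.passed else 0.0
        # Internal node: weighted average of the children's scores.
        total_weight = sum(child.weight for child in self.children)
        weighted_sum = sum(child.weight * child.score() for child in self.children)
        return weighted_sum / total_weight


def count_leaves(node: RubricNode) -> int:
    """Count the individually gradable (leaf) tasks under a node."""
    if not node.children:
        return 1
    return sum(count_leaves(child) for child in node.children)


# A toy rubric with made-up tasks, weights, and grades.
rubric = RubricNode("replicate paper", children=[
    RubricNode("code development", weight=1.0, children=[
        RubricNode("training loop implemented", passed=True),
        RubricNode("baseline implemented", passed=False),
    ]),
    RubricNode("experiments executed", weight=2.0, children=[
        RubricNode("main experiment runs", passed=True),
    ]),
    RubricNode("results match the paper", weight=1.0, passed=False),
])

print(f"gradable tasks: {count_leaves(rubric)}")
print(f"replication score: {rubric.score():.1%}")
```

Under these assumptions, partial progress earns partial credit: an agent that writes most of the code but cannot reproduce the final results still scores above zero, which is one way a benchmark can report an average such as 21.0% rather than a simple pass or fail.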