PAROAttention: Pattern-Aware ReOrdering for Efficient Sparse and Quantized Attention in Visual Generation Models

Tianchen Zhao, Ke Hong, Xinhao Yang, Xuefeng Xiao, Huixia Li, Feng Ling, Ruiqi Xie, Siqi Chen, Hongyu Zhu, Yichong Zhang, Yu Wang

2025-06-23

Summary

This paper introduces PAROAttention, a technique that reorders how an AI model attends to visual information so that attention runs faster and uses less memory without losing quality.

What's the problem?

Attention mechanisms in visual models are expensive in both memory and computing power, especially for high-resolution images and long videos, because every part of the image or video is compared against every other part, so the cost grows rapidly as the input gets larger.

What's the solution?

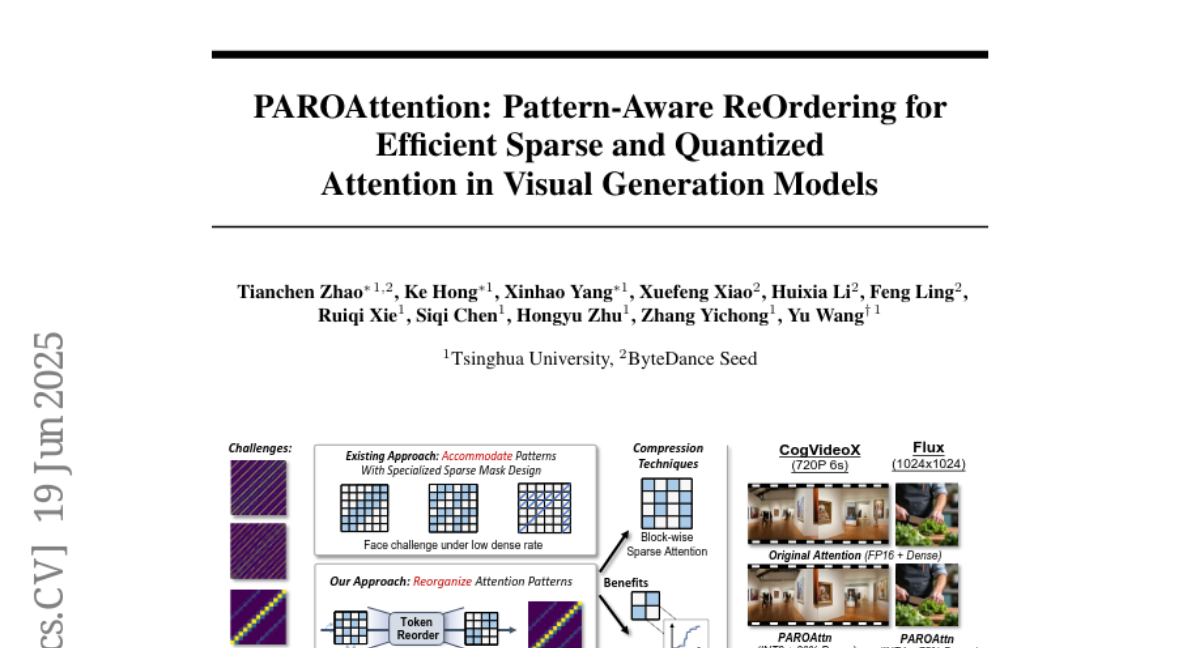

The researchers designed PAROAttention, which reorders scattered and irregular attention patterns into a simpler block-wise pattern that is easier for hardware to handle. With that regular structure, the model can skip unnecessary calculations (sparsification) and use lower-precision numbers (quantization) without hurting performance, making the whole process roughly two to three times faster.
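To make the reordering idea concrete, here is a toy NumPy sketch. It is not the authors' implementation: the grid size, the "same column" attention pattern, and the helper names `column_pattern_mask`, `reorder`, and `occupied_blocks` are all assumptions chosen for illustration. It shows how a pattern that looks scattered in one token order becomes block-diagonal after a permutation, so far fewer tiles need to be computed.

```python
import numpy as np

def column_pattern_mask(h, w):
    """Toy attention mask for an h x w grid flattened in row-major order:
    token i attends to token j only if both sit in the same grid column."""
    cols = np.arange(h * w) % w
    return cols[:, None] == cols[None, :]

def reorder(mask, perm):
    """Apply the same token permutation to the query and key axes."""
    return mask[np.ix_(perm, perm)]

def occupied_blocks(mask, block):
    """Count block x block tiles that contain at least one active entry."""
    nb = mask.shape[0] // block
    tiles = mask.reshape(nb, block, nb, block)
    return int(tiles.any(axis=(1, 3)).sum())

h, w, block = 4, 4, 4
mask = column_pattern_mask(h, w)

# Hypothetical reordering: switch from row-major to column-major token order.
perm = np.arange(h * w).reshape(h, w).T.reshape(-1)
reordered = reorder(mask, perm)

before = occupied_blocks(mask, block)
after = occupied_blocks(reordered, block)
print(before, after)  # 16 4
```

In the original order every tile holds a few active entries, so none can be skipped. After the permutation the same entries sit in 4 dense tiles on the diagonal, and the other 12 tiles are empty and can be dropped by a block-sparse kernel.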

Why does it matter?

This matters because it allows AI models that create images and videos to run much faster and more efficiently, making advanced visual generation more accessible and practical for real-world use.

Abstract

PAROAttention reorganizes visual attention patterns to enable efficient sparsification and quantization, reducing memory and computational costs with minimal impact on performance.