PEBench: A Fictitious Dataset to Benchmark Machine Unlearning for Multimodal Large Language Models

Zhaopan Xu, Pengfei Zhou, Weidong Tang, Jiaxin Ai, Wangbo Zhao, Xiaojiang Peng, Kai Wang, Yang You, Wenqi Shao, Hongxun Yao, Kaipeng Zhang

2025-03-19

Summary

This paper introduces PEBench, a benchmark for testing how well multimodal AI models can forget specific information, such as someone's personal details, when asked to.

What's the problem?

AI models are trained on lots of data, which can include private information. It's important to be able to remove that information so the AI doesn't accidentally reveal it.

What's the solution?

The researchers created a dataset of fictitious people and event scenes and used it to test how well different unlearning methods can make an AI model forget specific things.

Why does it matter?

This work matters because it helps make AI more secure and trustworthy by giving researchers a way to measure and improve how well AI can protect people's privacy.

Abstract

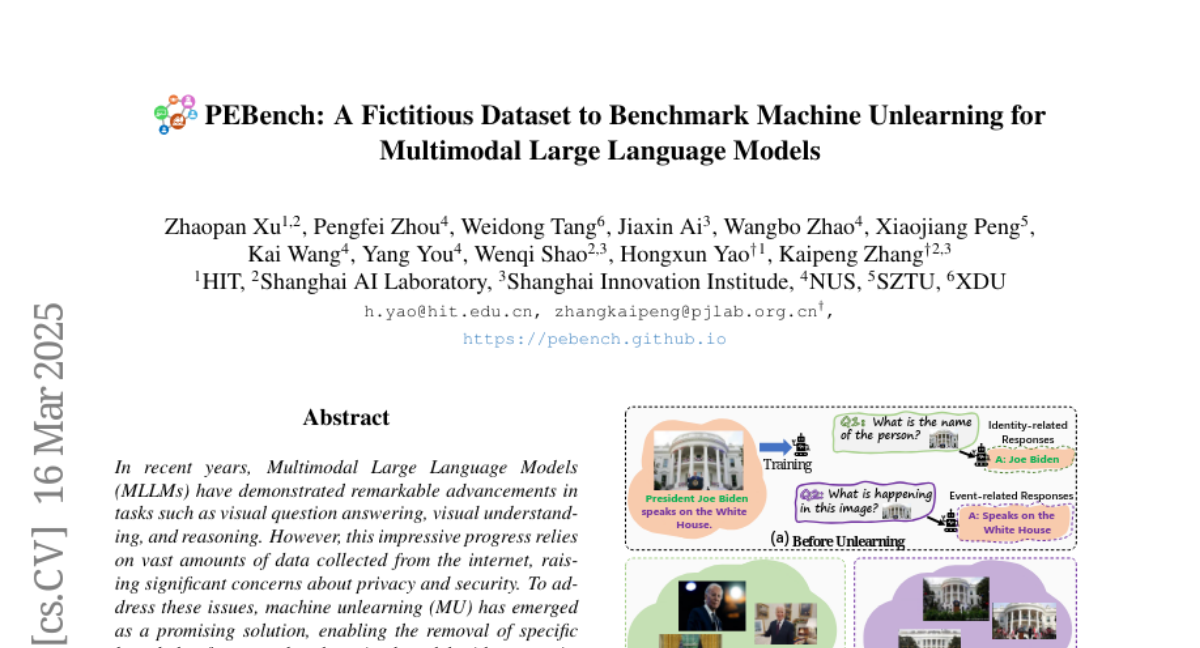

In recent years, Multimodal Large Language Models (MLLMs) have demonstrated remarkable advancements in tasks such as visual question answering, visual understanding, and reasoning. However, this impressive progress relies on vast amounts of data collected from the internet, raising significant concerns about privacy and security. To address these issues, machine unlearning (MU) has emerged as a promising solution, enabling the removal of specific knowledge from an already trained model without requiring retraining from scratch. Although MU for MLLMs has gained attention, current evaluations of its efficacy remain incomplete, and the underlying problem is often poorly defined, which hinders the development of strategies for creating more secure and trustworthy systems. To bridge this gap, we introduce a benchmark, named PEBench, which includes a dataset of personal entities and corresponding general event scenes, designed to comprehensively assess the performance of MU for MLLMs. Through PEBench, we aim to provide a standardized and robust framework to advance research in secure and privacy-preserving multimodal models. We benchmark six MU methods, revealing their strengths and limitations and shedding light on key challenges and opportunities for MU in MLLMs.