Perception-Aware Policy Optimization for Multimodal Reasoning

Zhenhailong Wang, Xuehang Guo, Sofia Stoica, Haiyang Xu, Hongru Wang, Hyeonjeong Ha, Xiusi Chen, Yangyi Chen, Ming Yan, Fei Huang, Heng Ji

2025-07-10

Summary

This paper talks about PAPO, a new method that improves how AI models understand and reason about images and text together by teaching them to focus better on visual details while learning to solve problems.

What's the problem?

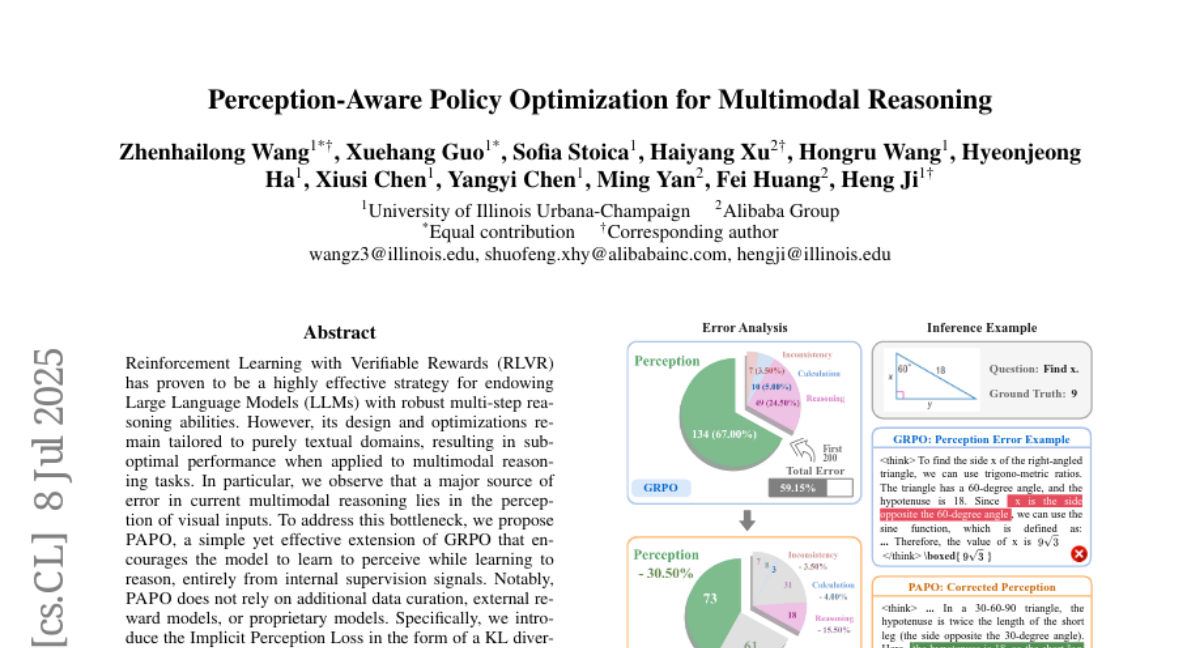

The problem is that current AI models often make mistakes because they don’t perceive visual inputs accurately, even though their reasoning skills might be good. Most existing training methods do not help models improve their perception effectively for tasks that need understanding of images.

What's the solution?

The researchers created PAPO by adding a special loss function called Implicit Perception Loss, which encourages the model to actually pay attention to the image details while learning to reason. They also introduced another technique called Double Entropy Loss to make training more stable. This approach trains the model to see and think better at the same time without using extra data or external models.

Why it matters?

This matters because improving visual perception in AI helps the models perform much better on tasks that rely on understanding images, like answering questions about pictures or interpreting complex scenes, leading to more accurate and reliable AI applications.

Abstract

PAPO, an extension of GRPO, enhances multimodal reasoning by integrating perception-aware supervision, leading to improved performance and reduced perception errors in tasks with high vision dependency.