Perspective-Aware Reasoning in Vision-Language Models via Mental Imagery Simulation

Phillip Y. Lee, Jihyeon Je, Chanho Park, Mikaela Angelina Uy, Leonidas Guibas, Minhyuk Sung

2025-04-25

Summary

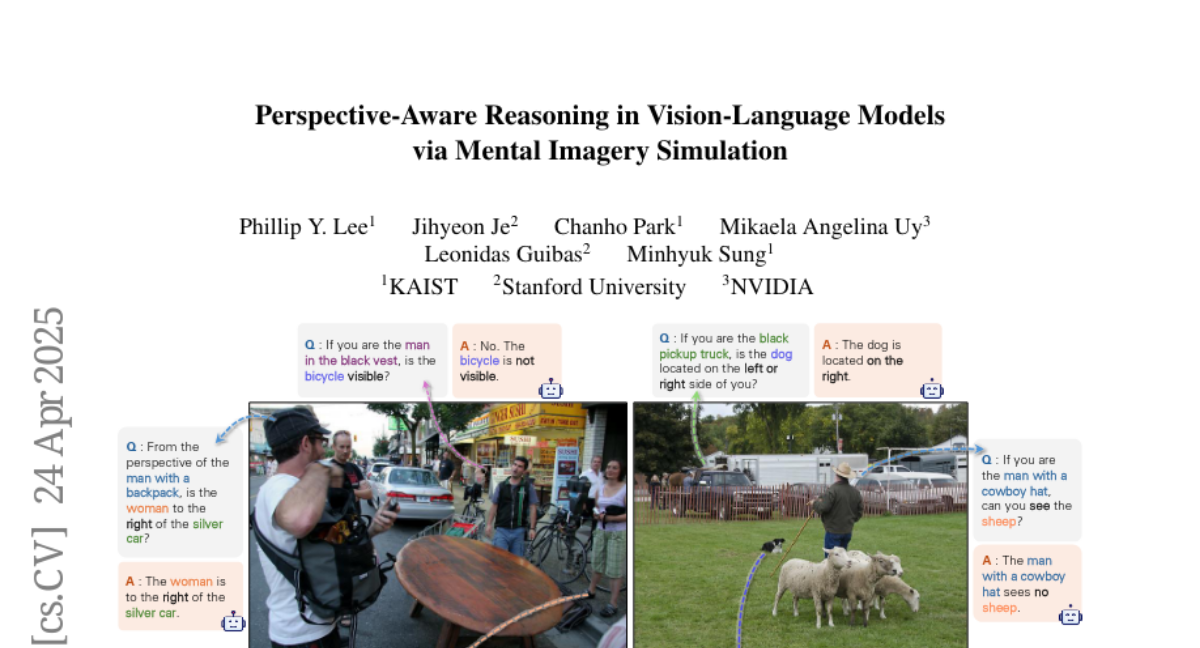

This paper introduces Abstract Perspective Change (APC), a method that helps vision-language models reason about a scene from points of view other than the camera's, kind of like putting themselves in someone else's shoes.

What's the problem?

Most vision-language models have trouble working out how a scene looks from a different angle or perspective. That ability is important for tasks like answering questions about scenes or understanding stories in pictures.

What's the solution?

The researchers used vision foundation models to build a simplified, abstract version of the scene, which the AI can then mentally rotate or shift to see from a new perspective. This helps the model reason more like a human would when imagining how a scene changes as you move around or look at it differently.
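To make the idea of shifting perspective over a simplified scene concrete, here is a minimal sketch. It is not the authors' implementation: it assumes the abstraction is just a list of named objects with top-down positions in the camera's frame, and that the new viewer's position and facing direction are already known. The names `SceneObject`, `to_viewer_frame`, and `side_of` are hypothetical and chosen only for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class SceneObject:
    """Hypothetical abstract object: a name plus a top-down position."""
    name: str
    x: float  # distance to the right of the current viewer
    z: float  # distance in front of the current viewer


def to_viewer_frame(obj: SceneObject, viewer: SceneObject,
                    heading_deg: float) -> SceneObject:
    """Re-express obj in the egocentric frame of viewer.

    heading_deg is the direction the viewer faces, measured in the
    camera's top-down frame: 0 means the same direction as the camera,
    positive values turn toward the camera's right (assumed convention).
    """
    # Shift: make the viewer the origin.
    dx = obj.x - viewer.x
    dz = obj.z - viewer.z
    # Rotate: project onto the viewer's own right and forward axes.
    t = math.radians(heading_deg)
    right = dx * math.cos(t) - dz * math.sin(t)
    forward = dx * math.sin(t) + dz * math.cos(t)
    return SceneObject(obj.name, right, forward)


def side_of(obj: SceneObject) -> str:
    """Answer a simple left/right question in the current frame."""
    return "left" if obj.x < 0 else "right"


# A person stands 3 units in front of the camera, facing it (heading 180).
person = SceneObject("person", 0.0, 3.0)
cup = SceneObject("cup", 1.0, 2.5)

print(side_of(cup))                                   # prints: right
print(side_of(to_viewer_frame(cup, person, 180.0)))   # prints: left
```

The same cup is on the right from the camera's view and on the left from the person's view, which is the kind of question a perspective change is meant to resolve. A real system would also need to estimate the object positions and the viewer's orientation from the image, which this sketch takes as given.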

Why does it matter?

This matters because it makes AI more flexible in real-world situations, helping it answer questions, describe scenes, or assist people in ways that require understanding things from multiple viewpoints.

Abstract

A framework named Abstract Perspective Change (APC) enhances perspective-aware reasoning in vision-language models by utilizing vision foundation models to create scene abstractions and enable perspective shifts.