PHYSICS: Benchmarking Foundation Models on University-Level Physics Problem Solving

Kaiyue Feng, Yilun Zhao, Yixin Liu, Tianyu Yang, Chen Zhao, John Sous, Arman Cohan

2025-03-31

Summary

This paper introduces PHYSICS, a benchmark that tests how well AI models can solve university-level physics problems.

What's the problem?

Although AI models have improved rapidly, they still struggle with complex scientific problems that require advanced knowledge and mathematical reasoning.

What's the solution?

The researchers created a set of 1297 challenging physics problems spanning six core areas and used it to evaluate how well different AI models could solve them. They also analyzed where the models made mistakes and tried different prompting strategies, as well as Retrieval-Augmented Generation (RAG), to see what helps them improve.

Why does it matter?

This work matters because it helps us understand the limits of AI in solving scientific problems and points the way towards making AI that can assist scientists and engineers.

Abstract

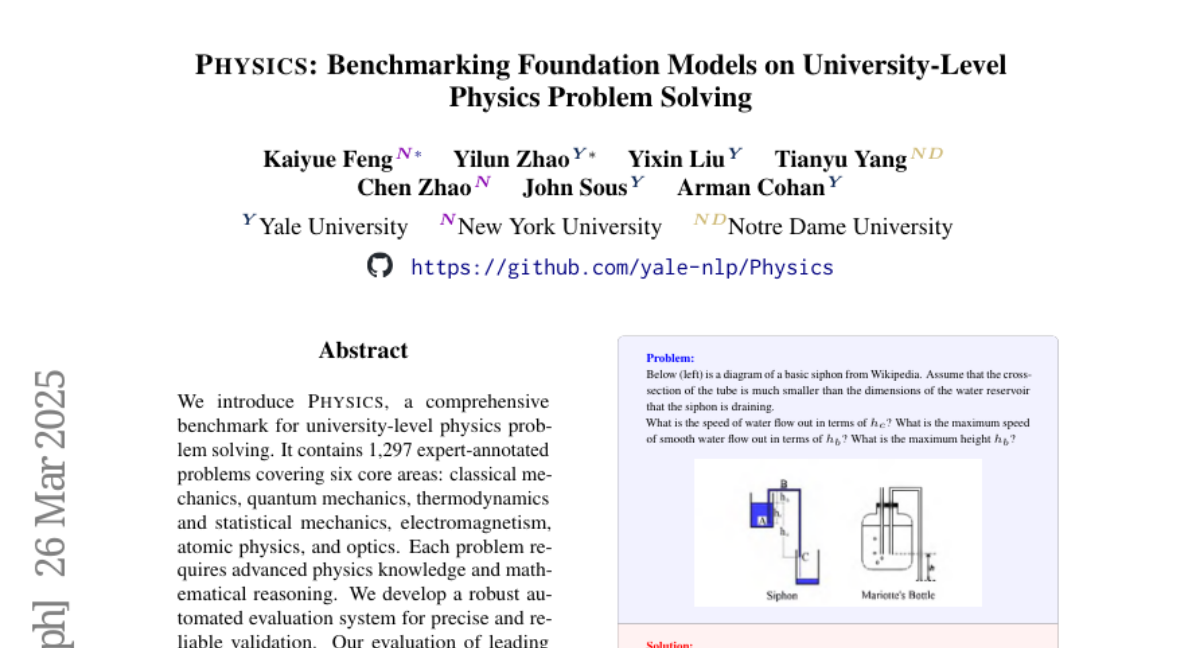

We introduce PHYSICS, a comprehensive benchmark for university-level physics problem solving. It contains 1297 expert-annotated problems covering six core areas: classical mechanics, quantum mechanics, thermodynamics and statistical mechanics, electromagnetism, atomic physics, and optics. Each problem requires advanced physics knowledge and mathematical reasoning. We develop a robust automated evaluation system for precise and reliable validation. Our evaluation of leading foundation models reveals substantial limitations. Even the most advanced model, o3-mini, achieves only 59.9% accuracy, highlighting significant challenges in solving high-level scientific problems. Through comprehensive error analysis, exploration of diverse prompting strategies, and Retrieval-Augmented Generation (RAG)-based knowledge augmentation, we identify key areas for improvement, laying the foundation for future advancements.