Piece it Together: Part-Based Concepting with IP-Priors

Elad Richardson, Kfir Goldberg, Yuval Alaluf, Daniel Cohen-Or

2025-03-14

Summary

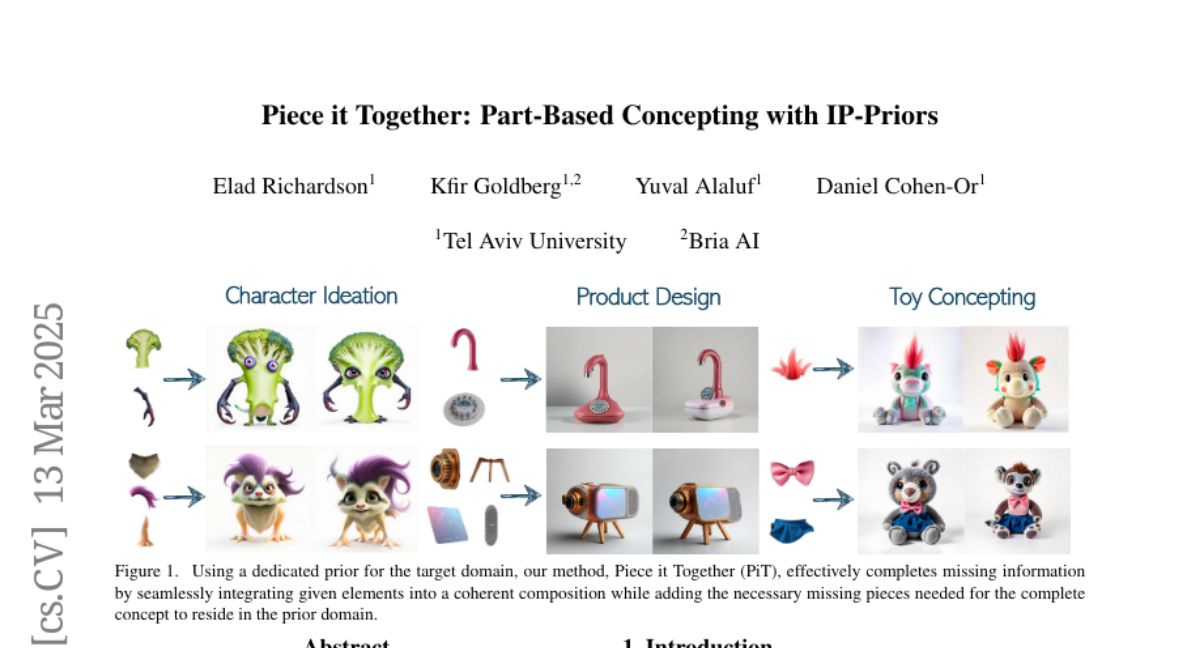

This paper introduces a new image generation technique that lets users combine different visual elements to create coherent images, like piecing together parts of a puzzle.

What's the problem?

Current AI image generators mainly rely on text descriptions, which can be limiting for visual designers who often draw inspiration from existing images or parts of images.

What's the solution?

The researchers developed a system that allows users to input visual components (like a unique wing or hairstyle) and then automatically combines them into a complete and coherent image, while also filling in any missing parts. It's like having an AI assistant that helps you bring your visual ideas to life.

Why does it matter?

This work matters because it provides a more intuitive way for designers to create images, moving beyond text descriptions and allowing them to work directly with visual elements to explore their creativity.

Abstract

Advanced generative models excel at synthesizing images but often rely on text-based conditioning. Visual designers, however, often work beyond language, directly drawing inspiration from existing visual elements. In many cases, these elements represent only fragments of a potential concept, such as a uniquely structured wing or a specific hairstyle, serving as inspiration for the artist to explore how they can come together creatively into a coherent whole. Recognizing this need, we introduce a generative framework that seamlessly integrates a partial set of user-provided visual components into a coherent composition while simultaneously sampling the missing parts needed to generate a plausible and complete concept. Our approach builds on a strong and underexplored representation space, extracted from IP-Adapter+, on which we train IP-Prior, a lightweight flow-matching model that synthesizes coherent compositions based on domain-specific priors, enabling diverse and context-aware generations. Additionally, we present a LoRA-based fine-tuning strategy that significantly improves prompt adherence in IP-Adapter+ for a given task, addressing its common trade-off between reconstruction quality and prompt adherence.
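
As a rough illustration of what training and sampling a flow-matching model involves, here is a minimal sketch in plain Python. It is not the authors' IP-Prior implementation. The straight-line path between noise and data, the function names (`interpolate`, `target_velocity`, `flow_matching_loss`, `euler_sample`), the `velocity_fn` signature, and the use of flat lists as embeddings are all assumptions made for illustration. In the setting the abstract describes, `x1` would presumably stand for a complete concept embedding in the IP-Adapter+ space and `cond` for the embeddings of the user-provided parts.

```python
import random


def interpolate(x0, x1, t):
    """Point on the straight path from noise x0 (t=0) to data x1 (t=1)."""
    return [(1.0 - t) * a + t * b for a, b in zip(x0, x1)]


def target_velocity(x0, x1):
    """Velocity of the straight path; it does not depend on t."""
    return [b - a for a, b in zip(x0, x1)]


def flow_matching_loss(velocity_fn, x1, cond, rng):
    """Single-sample loss: mean squared error between the predicted
    velocity at a random point on the path and the target velocity.

    velocity_fn(x, t, cond) is a hypothetical stand-in for the network.
    """
    x0 = [rng.gauss(0.0, 1.0) for _ in x1]  # Gaussian noise sample
    t = rng.random()                        # time in [0, 1)
    xt = interpolate(x0, x1, t)
    pred = velocity_fn(xt, t, cond)
    target = target_velocity(x0, x1)
    return sum((p - q) ** 2 for p, q in zip(pred, target)) / len(x1)


def euler_sample(velocity_fn, x0, cond, steps=10):
    """Integrate dx/dt = velocity_fn(x, t, cond) from t=0 to t=1
    with fixed-size Euler steps, starting from x0."""
    x = list(x0)
    dt = 1.0 / steps
    for i in range(steps):
        v = velocity_fn(x, i * dt, cond)
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x
```

In practice `velocity_fn` would be a trained network, the loss would be averaged over batches and minimized by gradient descent, and sampling would start from fresh Gaussian noise so that different draws yield different completions for the same set of parts.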