PLADIS: Pushing the Limits of Attention in Diffusion Models at Inference Time by Leveraging Sparsity

Kwanyoung Kim, Byeongsu Sim

2025-03-17

Summary

This paper introduces PLADIS, an inference-time method that uses sparse attention to improve the images produced by pre-trained text-to-image diffusion models, without extra training or additional model evaluations.

What's the problem?

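Guidance techniques that steer diffusion models toward high-quality, prompt-faithful images often require extra training or additional neural function evaluations (NFEs). That cost is time-consuming and resource-intensive, and it makes these techniques incompatible with guidance-distilled models, which are already optimized for speed. Many of them also depend on heuristics for choosing which layers to modify.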

What's the solution?

PLADIS enhances pre-trained diffusion models (U-Net or Transformer) by combining the standard softmax attention weights with a sparse version of them in the cross-attention layers at inference time. Because sparse attention is more robust to noise, the models generate better images without additional training or NFEs.

Why does it matter?

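This work matters because it provides a simple and efficient way to improve text-to-image diffusion models. It works alongside existing guidance techniques, including guidance-distilled models, and the reported experiments show notable improvements in text alignment and human preference.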

Abstract

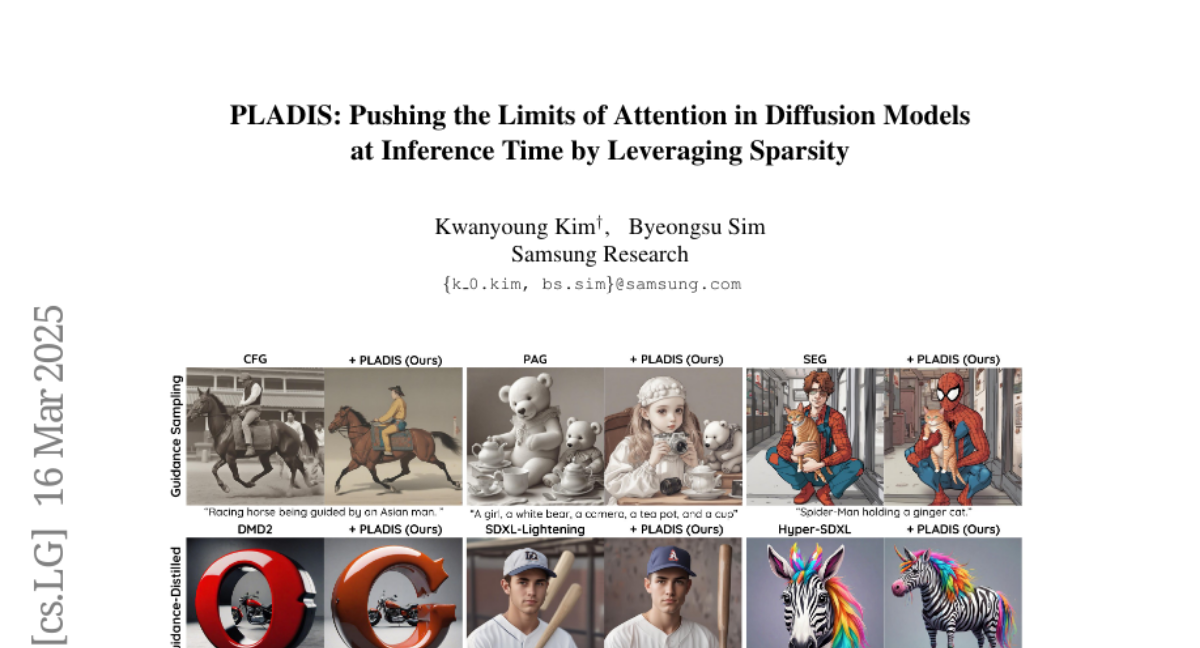

Diffusion models have shown impressive results in generating high-quality conditional samples using guidance techniques such as Classifier-Free Guidance (CFG). However, existing methods often require additional training or neural function evaluations (NFEs), making them incompatible with guidance-distilled models. They also rely on heuristic approaches that require identifying target layers. In this work, we propose a novel and efficient method, termed PLADIS, which boosts pre-trained models (U-Net/Transformer) by leveraging sparse attention. Specifically, we extrapolate query-key correlations using softmax and its sparse counterpart in the cross-attention layer during inference, without requiring extra training or NFEs. By leveraging the noise robustness of sparse attention, PLADIS unleashes the latent potential of text-to-image diffusion models, enabling them to excel in areas where they once struggled. It integrates seamlessly with guidance techniques, including guidance-distilled models. Extensive experiments show notable improvements in text alignment and human preference, offering a highly efficient and universally applicable solution.
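
To make the phrase "extrapolate query-key correlations using softmax and its sparse counterpart" concrete, here is a minimal sketch for a single query. It is an illustration under stated assumptions, not the authors' implementation: sparsemax stands in as one well-known sparse counterpart of softmax (the abstract does not name the sparse function), and the function names and the `scale` parameter are hypothetical.

```python
import math

def softmax(scores):
    """Dense softmax over a list of floats (every weight is positive)."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def sparsemax(scores):
    """Sparsemax: Euclidean projection onto the probability simplex.
    Unlike softmax, it can assign exactly zero weight to low scores.
    Assumption: used here as a stand-in for the paper's sparse attention."""
    z = sorted(scores, reverse=True)
    cumsum = 0.0
    tau = 0.0
    for j, zj in enumerate(z, start=1):
        cumsum += zj
        # The support condition holds for the first k sorted entries only,
        # so the last j that satisfies it determines the threshold tau.
        if 1.0 + j * zj > cumsum:
            tau = (cumsum - 1.0) / j
    return [max(s - tau, 0.0) for s in scores]

def extrapolated_attention(query, keys, values, scale=2.0):
    """Cross-attention for one query with extrapolated weights.

    weights = dense + scale * (sparse - dense)
    scale=0 gives plain softmax attention, scale=1 gives sparse attention,
    and scale>1 extrapolates past the sparse weights (hypothetical default).
    """
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
              for key in keys]
    dense = softmax(scores)
    sparse = sparsemax(scores)
    weights = [a + scale * (s - a) for a, s in zip(dense, sparse)]
    dim = len(values[0])
    output = [sum(w * v[c] for w, v in zip(weights, values))
              for c in range(dim)]
    return weights, output

# Toy usage: one image-token query attending over three text-token keys.
query = [1.0, 0.0]
keys = [[2.0, 0.0], [0.5, 0.5], [-1.0, 1.0]]
values = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
weights, output = extrapolated_attention(query, keys, values, scale=2.0)
```

Because both weight vectors sum to one, the extrapolated weights also sum to one, although individual entries can become negative when `scale` exceeds 1. The operation reuses the scores already computed in the attention layer, which is consistent with the abstract's claim that no extra training or NFEs are needed.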