Progressive Language-guided Visual Learning for Multi-Task Visual Grounding

Jingchao Wang, Hong Wang, Wenlong Zhang, Kunhua Ji, Dingjiang Huang, Yefeng Zheng

2025-04-24

Summary

This paper talks about a new AI framework that helps computers better understand and connect language with specific parts of images, making it easier for them to figure out exactly what a sentence is talking about in a picture.

What's the problem?

The problem is that most AI systems struggle to accurately match words or phrases to the right objects or regions in an image, especially when the tasks get more complicated or when there are several related tasks to solve at once.

What's the solution?

The researchers designed a system that gradually teaches the AI to use language information as it looks at images, letting it share what it learns across different but related tasks. This approach helps the AI get better at both understanding which object a sentence refers to and outlining the exact area of that object in the image.

Why it matters?

This matters because it leads to smarter AI that can handle more complex and realistic situations, like helping people find things in photos, assisting the visually impaired, or making robots better at following spoken instructions in the real world.

Abstract

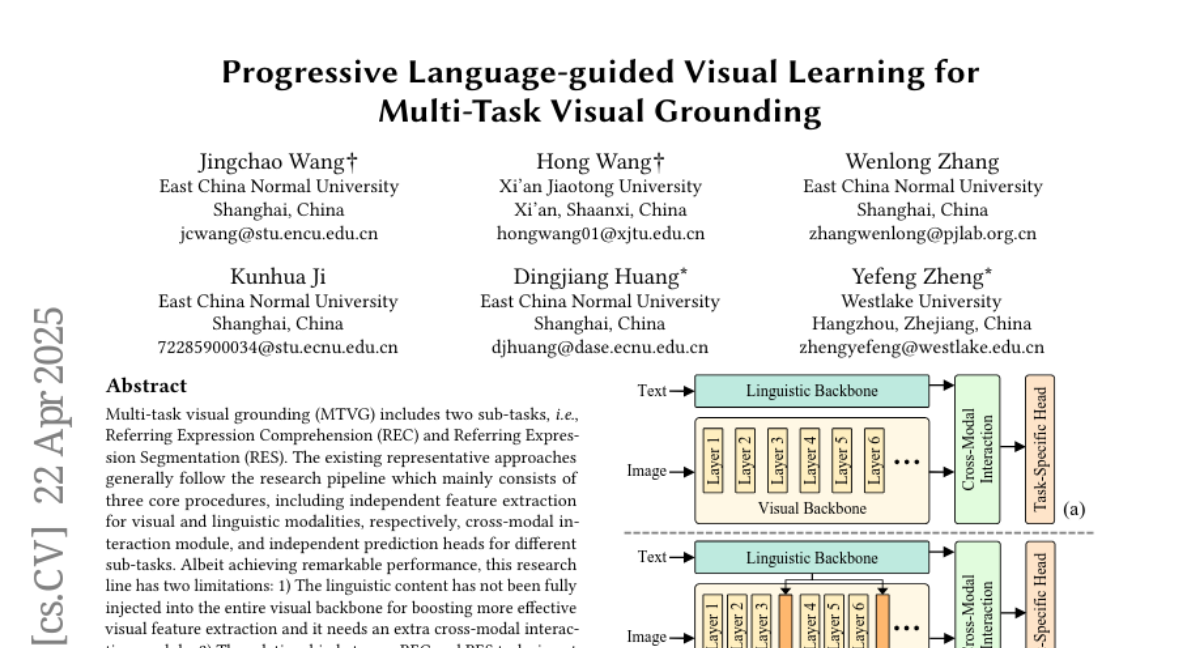

A Progressive Language-guided Visual Learning framework for multi-task visual grounding that integrates linguistic information into the visual backbone and leverages shared features between sub-tasks to improve performance in both Referring Expression Comprehension and Referring Expression Segmentation.