ProJudge: A Multi-Modal Multi-Discipline Benchmark and Instruction-Tuning Dataset for MLLM-based Process Judges

Jiaxin Ai, Pengfei Zhou, Zhaopan Xu, Ming Li, Fanrui Zhang, Zizhen Li, Jianwen Sun, Yukang Feng, Baojin Huang, Zhongyuan Wang, Kaipeng Zhang

2025-03-17

Summary

This paper introduces ProJudgeBench, a new way to test how well AI models can judge the reasoning process of other AI models solving scientific problems. It also provides a dataset to help improve these 'judge' models.

What's the problem?

AI models often make mistakes when solving scientific problems. It's hard to know if their reasoning is correct, and it's time-consuming and expensive to have humans check every step.

What's the solution?

The researchers created a benchmark, ProJudgeBench, with lots of scientific problems and detailed step-by-step answers. They also created a dataset, ProJudge-173k, to train AI models to act as 'judges' that can evaluate the reasoning process of other AI models. They used a special training technique to make these judge models better at understanding the problem-solving steps.

Why it matters?

This work matters because it helps improve the reliability of AI models by creating a way to automatically evaluate their reasoning. This can lead to more trustworthy AI systems for scientific tasks.

Abstract

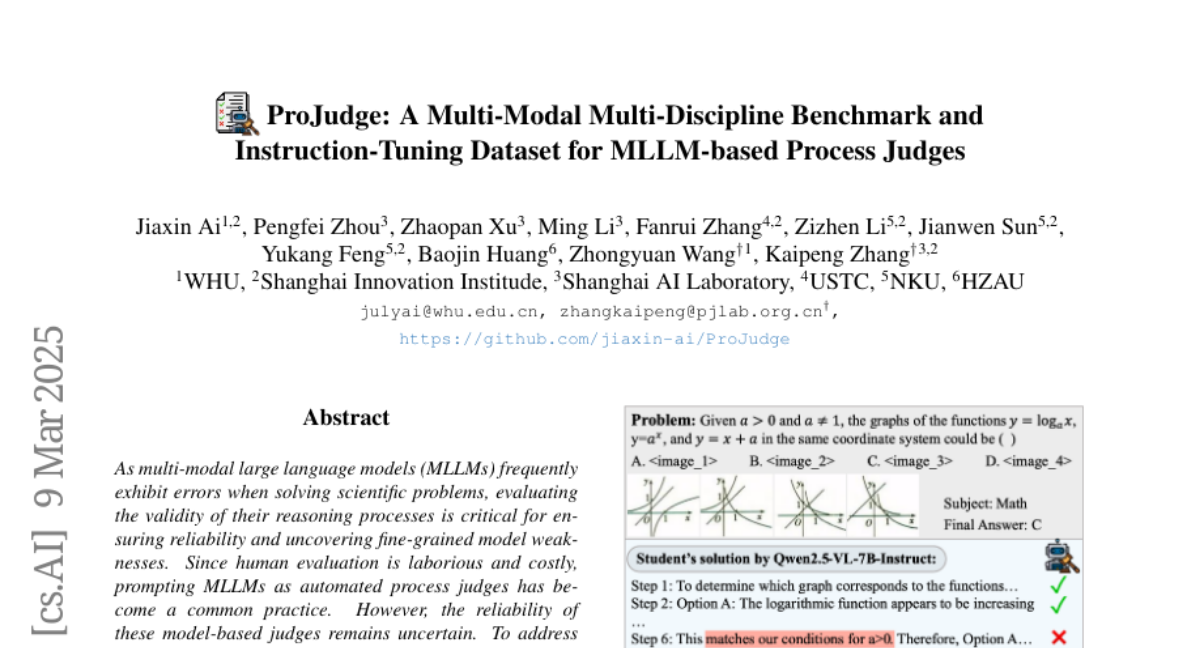

As multi-modal large language models (MLLMs) frequently exhibit errors when solving scientific problems, evaluating the validity of their reasoning processes is critical for ensuring reliability and uncovering fine-grained model weaknesses. Since human evaluation is laborious and costly, prompting MLLMs as automated process judges has become a common practice. However, the reliability of these model-based judges remains uncertain. To address this, we introduce ProJudgeBench, the first comprehensive benchmark specifically designed for evaluating abilities of MLLM-based process judges. ProJudgeBench comprises 2,400 test cases and 50,118 step-level labels, spanning four scientific disciplines with diverse difficulty levels and multi-modal content. In ProJudgeBench, each step is meticulously annotated by human experts for correctness, error type, and explanation, enabling a systematic evaluation of judges' capabilities to detect, classify and diagnose errors. Evaluation on ProJudgeBench reveals a significant performance gap between open-source and proprietary models. To bridge this gap, we further propose ProJudge-173k, a large-scale instruction-tuning dataset, and a Dynamic Dual-Phase fine-tuning strategy that encourages models to explicitly reason through problem-solving before assessing solutions. Both contributions significantly enhance the process evaluation capabilities of open-source models. All the resources will be released to foster future research of reliable multi-modal process evaluation.