Prompt Candidates, then Distill: A Teacher-Student Framework for LLM-driven Data Annotation

Mingxuan Xia, Haobo Wang, Yixuan Li, Zewei Yu, Jindong Wang, Junbo Zhao, Runze Wu

2025-06-16

Summary

This paper talks about a new way to make data labeling better using a teacher-student setup with large language models (LLMs). It encourages the model to suggest several possible answers when it’s unsure, rather than just one, and then uses a special process to pick the best labels. This helps create higher-quality data labels for training other AI systems.

What's the problem?

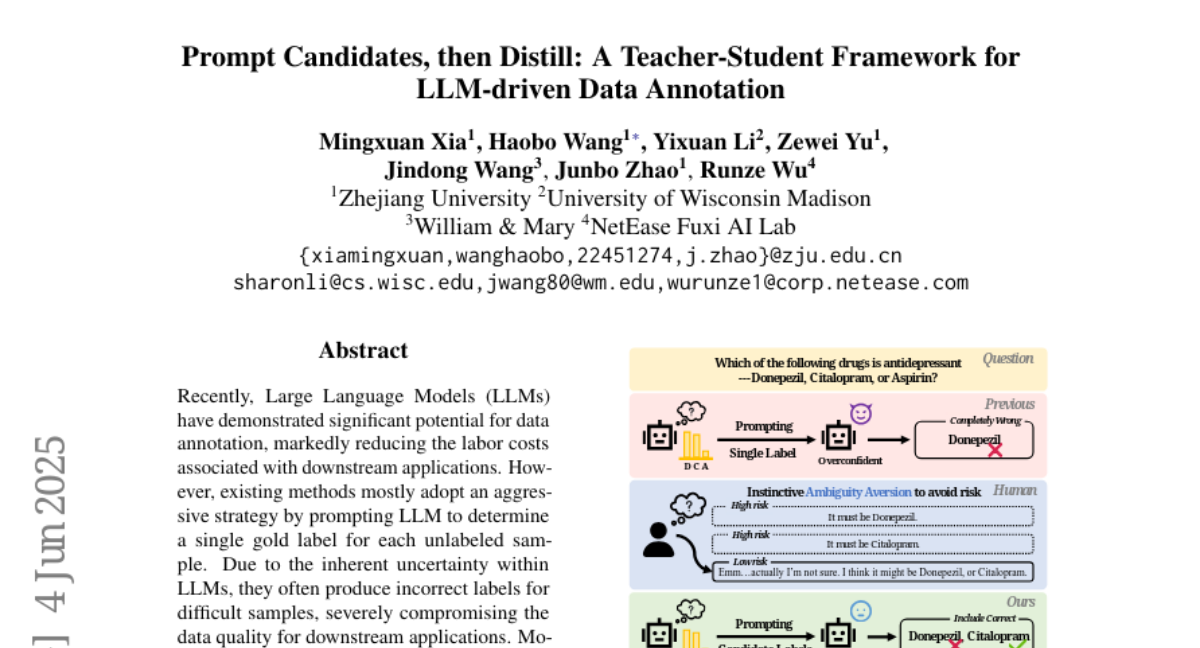

The problem is that when AI models label data for use in other tasks, they often give only one answer even if they aren’t sure, which can lead to mistakes and lower quality labels. This makes it harder for AI systems trained on this data to work well, because bad or uncertain labels confuse them.

What's the solution?

The solution was to design a teacher-student framework where the ‘teacher’ model first produces multiple possible labels for each data point, reflecting its uncertainty. Then the ‘student’ model learns from these multiple candidates and distills them into better, more reliable labels. This process improves the quality of annotations by capturing uncertainty and refining the final labels based on that.

Why it matters?

This matters because data annotation is crucial for training AI applications, and better labels lead to smarter and more accurate AI systems. By allowing models to express uncertainty and refining labels accordingly, this approach helps improve the overall reliability and performance of AI in many future tasks.

Abstract

A novel candidate annotation paradigm using a teacher-student framework improves data quality for下游 applications by encouraging large language models to output multiple labels when uncertain.