QuartDepth: Post-Training Quantization for Real-Time Depth Estimation on the Edge

Xuan Shen, Weize Ma, Jing Liu, Changdi Yang, Rui Ding, Quanyi Wang, Henghui Ding, Wei Niu, Yanzhi Wang, Pu Zhao, Jun Lin, Jiuxiang Gu

2025-03-25

Summary

This paper is about making AI models that estimate depth from images run faster and use less memory on devices with limited resources, like phones or specialized computer chips.

What's the problem?

AI models for depth estimation are often too big and slow to run in real-time on devices with limited computing power.

What's the solution?

The researchers developed a method called QuartDepth that shrinks the size of the AI model and makes it faster by reducing the precision of the numbers it uses. They also designed a special hardware accelerator to further improve performance.

Why it matters?

This work matters because it makes it possible to use depth estimation in real-world applications on devices with limited resources, which could be useful for things like robotics, augmented reality, and self-driving cars.

Abstract

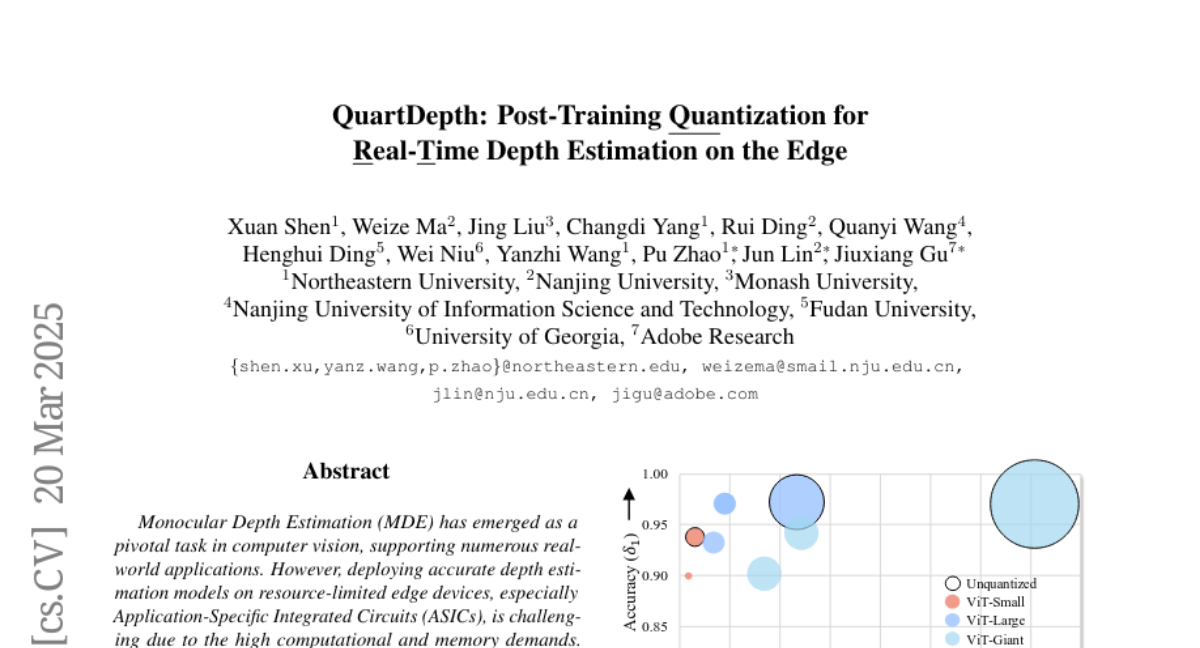

Monocular Depth Estimation (MDE) has emerged as a pivotal task in computer vision, supporting numerous real-world applications. However, deploying accurate depth estimation models on resource-limited edge devices, especially Application-Specific Integrated Circuits (ASICs), is challenging due to the high computational and memory demands. Recent advancements in foundational depth estimation deliver impressive results but further amplify the difficulty of deployment on ASICs. To address this, we propose QuartDepth which adopts post-training quantization to quantize MDE models with hardware accelerations for ASICs. Our approach involves quantizing both weights and activations to 4-bit precision, reducing the model size and computation cost. To mitigate the performance degradation, we introduce activation polishing and compensation algorithm applied before and after activation quantization, as well as a weight reconstruction method for minimizing errors in weight quantization. Furthermore, we design a flexible and programmable hardware accelerator by supporting kernel fusion and customized instruction programmability, enhancing throughput and efficiency. Experimental results demonstrate that our framework achieves competitive accuracy while enabling fast inference and higher energy efficiency on ASICs, bridging the gap between high-performance depth estimation and practical edge-device applicability. Code: https://github.com/shawnricecake/quart-depth