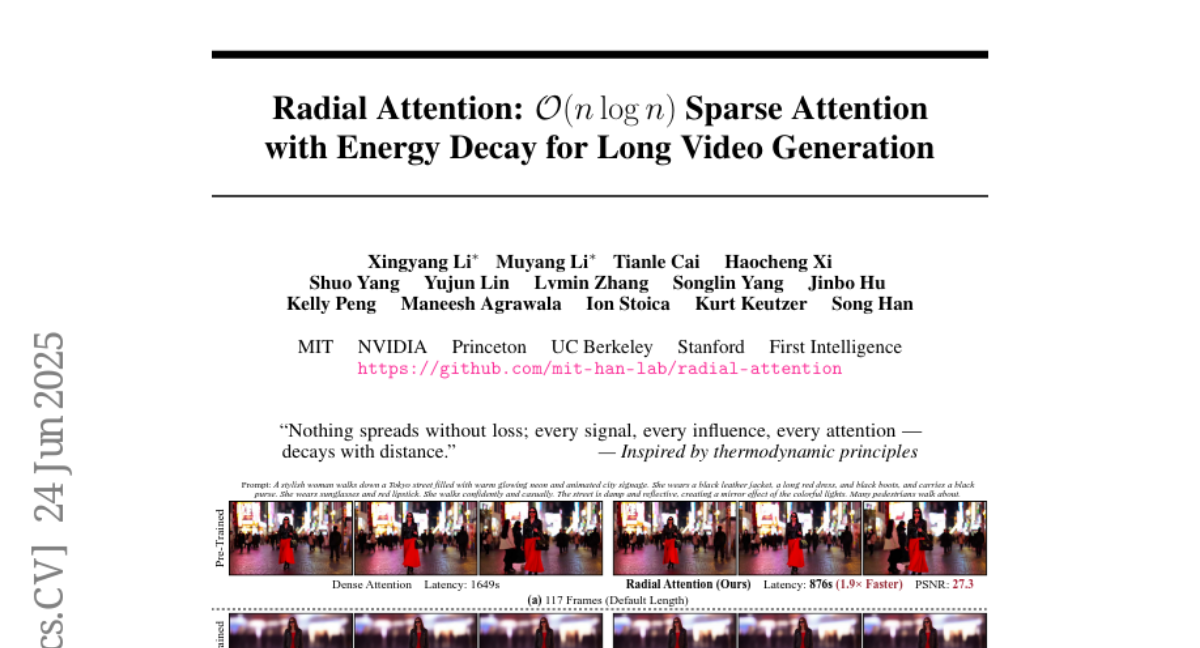

Radial Attention: O(n log n) Sparse Attention with Energy Decay for Long Video Generation

Xingyang Li, Muyang Li, Tianle Cai, Haocheng Xi, Shuo Yang, Yujun Lin, Lvmin Zhang, Songlin Yang, Jinbo Hu, Kelly Peng, Maneesh Agrawala, Ion Stoica, Kurt Keutzer, Song Han

2025-07-02

Summary

This paper introduces Radial Attention, a sparse attention mechanism for generating long videos with AI models. It reduces the amount of computation from quadratic to O(n log n) in the number of tokens while keeping video quality high, using a sparsity pattern inspired by how energy decays over time and space.

What's the problem?

Generating long videos with AI models takes a lot of computing power because the attention mechanism compares every part of the video with every other part, so the cost grows quadratically with video length. This makes long videos slow and expensive to produce.

What's the solution?

The researchers designed Radial Attention, in which each part of the video attends densely to nearby parts and progressively more sparsely to parts that are farther away. This uses less computing power and speeds up both training and video generation while keeping the videos looking good.
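The idea of attending less to distant parts can be illustrated with a toy one-dimensional mask. The sketch below is an assumption made for illustration, not the mask used in the paper: the function names `decaying_mask` and `masked_attention` are hypothetical, tokens are placed on a single axis instead of a space-time grid, and the kept positions are chosen so that the density halves each time the distance doubles. Each row then keeps O(log n) entries, so the whole mask has O(n log n) entries.

```python
import numpy as np


def decaying_mask(n: int) -> np.ndarray:
    """Toy boolean (n, n) mask: True where query i may attend to key j.

    Distances are grouped into bands [2^k, 2^(k+1)); band k keeps only
    every 2^k-th position, so the kept density halves from band to band.
    """
    idx = np.arange(n)
    dist = np.abs(idx[:, None] - idx[None, :])
    # frexp gives dist = m * 2**e with m in [0.5, 1), so floor(log2(dist)) = e - 1.
    _, exponent = np.frexp(dist.astype(np.float64))
    band = np.maximum(exponent - 1, 0)  # distances 0 and 1 both fall in band 0
    stride = 2 ** band
    return dist % stride == 0


def masked_attention(q, k, v, mask):
    """Softmax attention with masked-out scores set to -inf.

    This dense version only illustrates the pattern; a real speedup
    requires a kernel that skips the masked entries entirely.
    """
    scores = q @ k.T / np.sqrt(q.shape[-1])
    scores = np.where(mask, scores, -np.inf)
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ v


if __name__ == "__main__":
    n, d = 16, 8
    rng = np.random.default_rng(0)
    q, k, v = (rng.standard_normal((n, d)) for _ in range(3))
    mask = decaying_mask(n)
    out = masked_attention(q, k, v, mask)
    print(mask.sum(), "of", n * n, "entries kept; output shape", out.shape)
```

In this toy pattern, a token attends to itself and to neighbours at distances 1, 2, 4, 8, and so on. The diagonal is always kept, so every row of the softmax has at least one finite score.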

Why does it matter?

This matters because it makes generating longer, high-quality videos practical without huge amounts of computing resources, which can benefit entertainment, education, and other fields that use AI-generated video.

Abstract

Radial Attention, a scalable sparse attention mechanism, improves efficiency and preserves video quality in diffusion models by leveraging spatiotemporal energy decay.