Reangle-A-Video: 4D Video Generation as Video-to-Video Translation

Hyeonho Jeong, Suhyeon Lee, Jong Chul Ye

2025-03-13

Summary

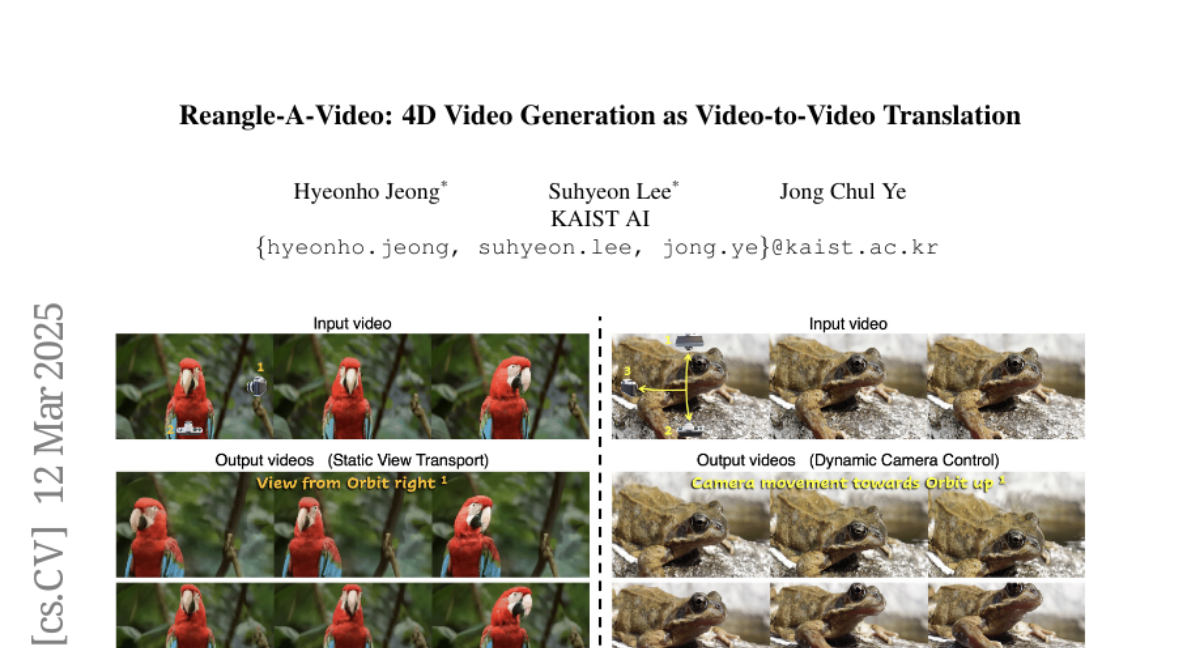

This paper introduces Reangle-A-Video, a framework that takes a single video and generates synchronized versions of it from different camera angles, like turning a single clip into a multi-camera shoot.

What's the problem?

Generating videos of the same scene from multiple angles usually requires training models on large amounts of multi-angle (4D) footage or building complex 3D models, which is expensive and time-consuming.

What's the solution?

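Reangle-A-Video fine-tunes a pretrained image-to-video model to learn the motion in the input video. It then warps and inpaints the video's first frame into each new camera view and uses those images as starting frames, so every generated view follows the same motion and stays in sync.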
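Moving a frame to a new viewpoint is commonly done by depth-based forward warping: back-project each pixel using its depth, apply the relative camera pose, reproject, and keep the nearest point when several land on the same target pixel. The sketch below is a minimal NumPy illustration under stated assumptions, not the authors' implementation. The function name `forward_warp`, the shared intrinsics `K`, and the pose convention `X_tgt = R @ X_src + t` are hypothetical, and a per-pixel depth map is assumed to come from some external depth estimator. The returned mask marks holes that an inpainting model would then have to fill.

```python
import numpy as np


def forward_warp(image, depth, K, R, t):
    """Hypothetical helper (not from the paper's code): forward-warp a frame.

    image: (H, W, C) array; depth: (H, W) positive depths in the source camera.
    K: (3, 3) intrinsics, assumed shared by source and target cameras.
    R, t: (3, 3) rotation and (3,) translation with X_tgt = R @ X_src + t.
    Returns (warped, mask); mask is True where a source pixel landed.
    """
    H, W, C = image.shape
    n = H * W
    # Homogeneous pixel coordinates (x, y, 1) for every source pixel, shape (3, n).
    ys, xs = np.meshgrid(np.arange(H), np.arange(W), indexing="ij")
    pix = np.stack([xs.ravel(), ys.ravel(), np.ones(n)]).astype(float)

    # Back-project to 3D in the source camera, then move into the target camera.
    pts = (np.linalg.inv(K) @ pix) * depth.ravel()
    pts = R @ pts + np.asarray(t, dtype=float).reshape(3, 1)

    # Project into the target image; keep points in front of the camera and in bounds.
    z = pts[2]
    front = z > 1e-8
    proj = K @ pts
    u = np.full(n, -1)
    v = np.full(n, -1)
    u[front] = np.round(proj[0, front] / z[front]).astype(int)
    v[front] = np.round(proj[1, front] / z[front]).astype(int)
    keep = front & (u >= 0) & (u < W) & (v >= 0) & (v < H)

    src = np.flatnonzero(keep)       # source pixels that land inside the target image
    dst = v[keep] * W + u[keep]      # their flat target pixel indices
    zs = z[keep]

    # Z-buffer: when several source pixels hit one target pixel, keep the nearest.
    zbuf = np.full(n, np.inf)
    np.minimum.at(zbuf, dst, zs)
    nearest = zs <= zbuf[dst]

    warped = np.zeros((n, C), dtype=image.dtype)
    mask = np.zeros(n, dtype=bool)
    warped[dst[nearest]] = image.reshape(n, C)[src[nearest]]
    mask[dst[nearest]] = True
    return warped.reshape(H, W, C), mask.reshape(H, W)
```

With an identity pose the frame comes back unchanged; a small sideways translation shifts the content and leaves an unfilled strip at the border, which is the kind of region the inpainting step is responsible for.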

Why does it matter?

This lets filmmakers and content creators generate multi-angle videos without reshoots, saving time and money while expanding creative options.

Abstract

We introduce Reangle-A-Video, a unified framework for generating synchronized multi-view videos from a single input video. Unlike mainstream approaches that train multi-view video diffusion models on large-scale 4D datasets, our method reframes the multi-view video generation task as video-to-videos translation, leveraging publicly available image and video diffusion priors. In essence, Reangle-A-Video operates in two stages.

(1) Multi-View Motion Learning: An image-to-video diffusion transformer is synchronously fine-tuned in a self-supervised manner to distill view-invariant motion from a set of warped videos.

(2) Multi-View Consistent Image-to-Images Translation: The first frame of the input video is warped and inpainted into various camera perspectives under an inference-time cross-view consistency guidance using DUSt3R, generating multi-view consistent starting images.

Extensive experiments on static view transport and dynamic camera control show that Reangle-A-Video surpasses existing methods, establishing a new solution for multi-view video generation. We will publicly release our code and data. Project page: https://hyeonho99.github.io/reangle-a-video/
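Warped videos contain holes where no source pixel landed, so a natural way to fine-tune on them in a self-supervised manner is to compute the denoising loss only over valid pixels. The abstract does not state the exact objective, so the following is an assumption: a masked mean-squared error between the model's prediction (noise or velocity) and its target, averaged over visible positions across all warped views in a batch. `masked_denoising_loss` is a hypothetical name; in a latent diffusion transformer the visibility mask would first be resized to the latent resolution.

```python
import numpy as np


def masked_denoising_loss(pred, target, mask, eps=1e-8):
    """Hypothetical helper (not from the paper's code): masked MSE.

    pred, target: (..., H, W, C) model prediction and its regression target
                  (e.g. noise or velocity), with any leading view/frame axes.
    mask: (..., H, W) boolean, True where the warped video has valid content.
    Positions with mask == False (warping holes) contribute nothing.
    """
    m = mask[..., None].astype(pred.dtype)        # broadcast the mask over channels
    sq = (pred - target) ** 2 * m                 # zero out errors in the holes
    denom = m.sum() * pred.shape[-1]              # number of visible scalar entries
    return float(sq.sum() / max(denom, eps))      # an empty mask yields 0.0
```

Normalizing by the count of visible entries, rather than by the full tensor size, keeps the loss scale comparable between views with large and small holes.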