ReasonGen-R1: CoT for Autoregressive Image generation models through SFT and RL

Yu Zhang, Yunqi Li, Yifan Yang, Rui Wang, Yuqing Yang, Dai Qi, Jianmin Bao, Dongdong Chen, Chong Luo, Lili Qiu

2025-06-02

Summary

This paper introduces ReasonGen-R1, a two-stage method that helps autoregressive image generation models produce better images: the model first learns to think step by step in text before generating, and is then rewarded for making good images.

What's the problem?

The problem is that most image generation models turn a prompt directly into a picture without an explicit reasoning step, which can lead to less creative or less accurate results.

What's the solution?

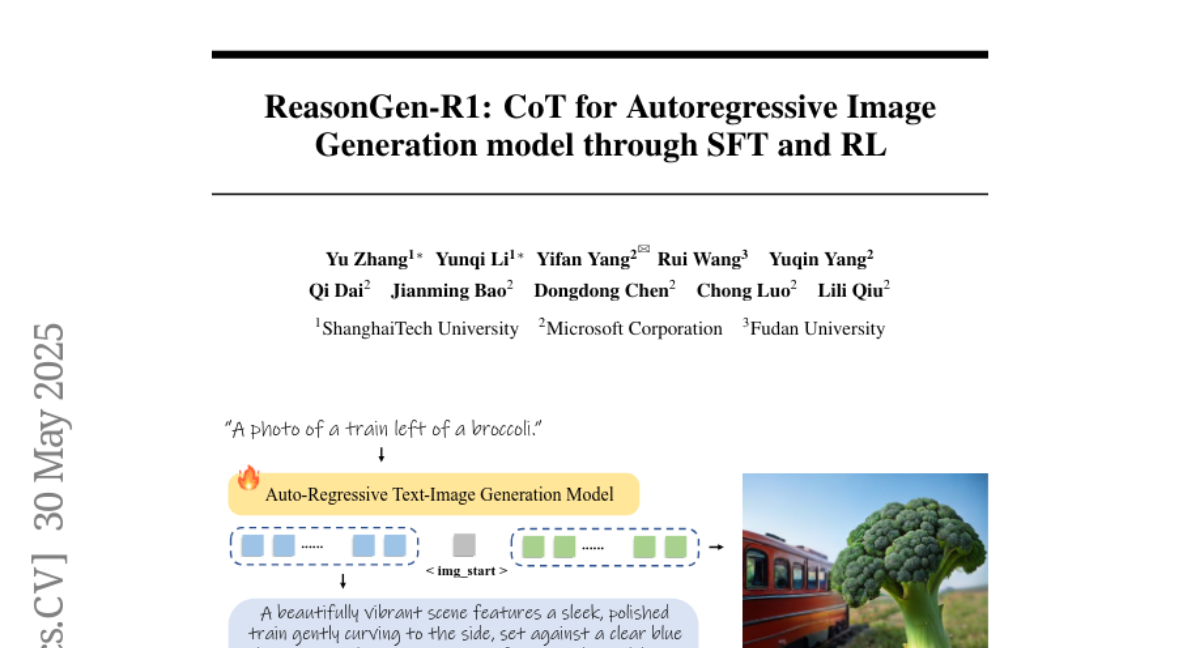

The researchers designed a two-stage process. First, supervised fine-tuning (SFT) teaches the model to write out its thinking in text, known as chain-of-thought (CoT) reasoning, before it creates an image. Then, reinforcement learning (RL) gives the model rewards for better images, which refines its outputs further.
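The two stages described above can be sketched with a toy example. This is a minimal illustration, not the paper's implementation: a four-way softmax policy stands in for the image model, the demonstration data and the reward function are hypothetical, and a REINFORCE-style update with a group-mean baseline is swapped in because this summary does not say which RL algorithm is used.

```python
import math
import random

def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def sft_step(logits, target, lr=0.5):
    """Stage 1 (supervised): raise the log-probability of a demonstrated output."""
    probs = softmax(logits)
    # Gradient of log p(target) w.r.t. the logits is one_hot(target) - probs.
    return [l + lr * ((1.0 if i == target else 0.0) - p)
            for i, (l, p) in enumerate(zip(logits, probs))]

def rl_step(logits, reward_fn, rng, group_size=8, lr=0.5):
    """Stage 2 (RL): sample outputs, score them, and reinforce above-average ones."""
    probs = softmax(logits)
    samples = rng.choices(range(len(logits)), weights=probs, k=group_size)
    rewards = [reward_fn(s) for s in samples]
    baseline = sum(rewards) / group_size  # group mean used as a simple baseline
    grad = [0.0] * len(logits)
    for s, r in zip(samples, rewards):
        advantage = r - baseline
        for i, p in enumerate(probs):
            grad[i] += advantage * ((1.0 if i == s else 0.0) - p) / group_size
    return [l + lr * g for l, g in zip(logits, grad)]

def train(seed=0):
    rng = random.Random(seed)
    logits = [0.0, 0.0, 0.0, 0.0]  # toy policy over 4 candidate outputs
    demos = [2, 2, 3, 2]           # hypothetical demonstrations for stage 1
    for target in demos * 5:
        logits = sft_step(logits, target)
    after_sft = softmax(logits)

    def reward_fn(sample):
        # Hypothetical stand-in for a reward model that prefers output 3.
        return 1.0 if sample == 3 else 0.0

    for _ in range(200):
        logits = rl_step(logits, reward_fn, rng)
    return after_sft, softmax(logits)

if __name__ == "__main__":
    after_sft, after_rl = train()
    print("after SFT:", [round(p, 3) for p in after_sft])
    print("after RL: ", [round(p, 3) for p in after_rl])
```

In this toy setup, the supervised stage leaves the policy favoring the most frequently demonstrated output, and the reward stage then shifts probability toward whichever output the reward function scores highest.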

Why does it matter?

This is important because it suggests AI can make images that are not only higher quality but also more creative and accurate, which is useful for art, design, education, and other fields that rely on image generation.

Abstract

A two-stage framework, ReasonGen-R1, integrates chain-of-thought reasoning and reinforcement learning to enhance image generation by imbuing models with text-based thinking skills and refining outputs through reward optimization.