ReCamMaster: Camera-Controlled Generative Rendering from A Single Video

Jianhong Bai, Menghan Xia, Xiao Fu, Xintao Wang, Lianrui Mu, Jinwen Cao, Zuozhu Liu, Haoji Hu, Xiang Bai, Pengfei Wan, Di Zhang

2025-03-17

Summary

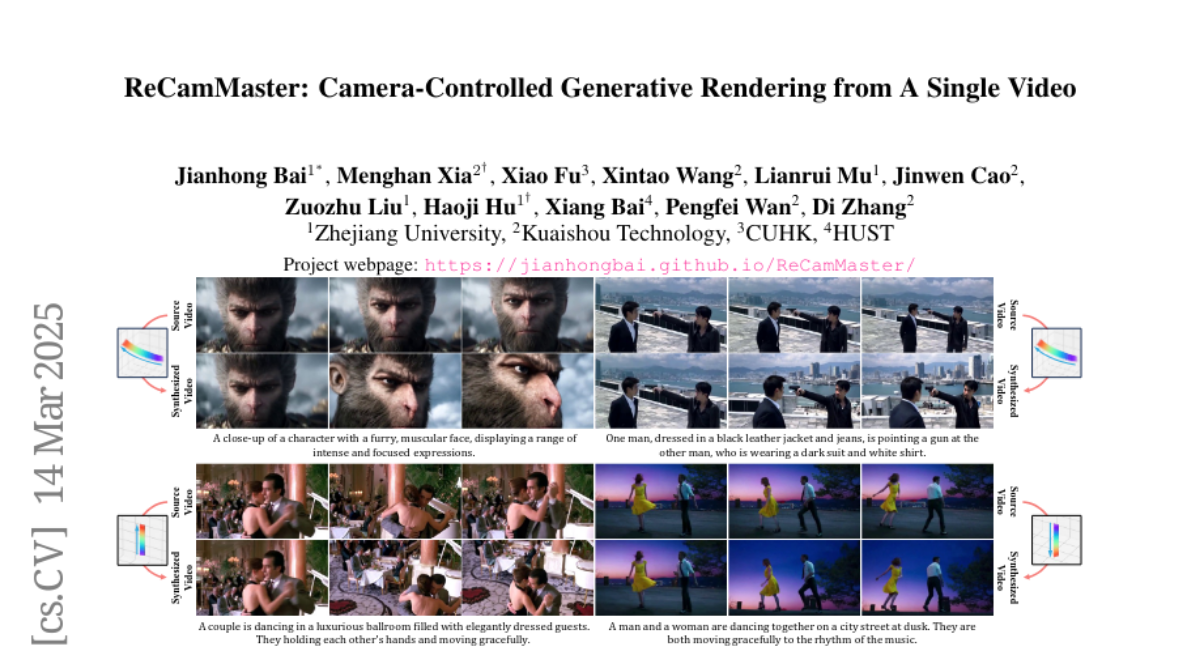

This paper introduces ReCamMaster, a system that re-renders a single input video along a new camera trajectory, so the same dynamic scene can be viewed from different camera paths without any additional footage.

What's the problem?

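Changing the camera trajectory of an existing video is difficult because the result must keep the scene's appearance consistent across frames and stay synchronized with the original motion. Camera control has been studied for text- and image-conditioned video generation, but re-rendering a given video along a new trajectory remains under-explored.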

What's the solution?

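ReCamMaster builds on a pre-trained text-to-video model and conditions it on the input video, so that it reproduces the same dynamic scene along a new camera trajectory. Because suitable training data is scarce, the authors construct a multi-camera synchronized video dataset with Unreal Engine 5, covering diverse scenes and camera movements, and pair it with a dedicated training strategy so the model generalizes to in-the-wild videos.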

Why does it matter?

This work matters because it opens up new possibilities in video creation, with promising applications in video stabilization, super-resolution, and outpainting (extending the video beyond its original frame boundaries).

Abstract

Camera control has been actively studied in text- or image-conditioned video generation tasks. However, altering the camera trajectory of a given video remains under-explored, despite its importance in the field of video creation. It is non-trivial due to the extra constraints of maintaining multiple-frame appearance and dynamic synchronization. To address this, we present ReCamMaster, a camera-controlled generative video re-rendering framework that reproduces the dynamic scene of an input video at novel camera trajectories. The core innovation lies in harnessing the generative capabilities of pre-trained text-to-video models through a simple yet powerful video conditioning mechanism -- a capability often overlooked in current research. To overcome the scarcity of qualified training data, we construct a comprehensive multi-camera synchronized video dataset using Unreal Engine 5, which is carefully curated to follow real-world filming characteristics, covering diverse scenes and camera movements. It helps the model generalize to in-the-wild videos. Lastly, we further improve the robustness to diverse inputs through a meticulously designed training strategy. Extensive experiments show that our method substantially outperforms existing state-of-the-art approaches and strong baselines. Our method also finds promising applications in video stabilization, super-resolution, and outpainting. Project page: https://jianhongbai.github.io/ReCamMaster/