RedOne: Revealing Domain-specific LLM Post-Training in Social Networking Services

Fei Zhao, Chonggang Lu, Yue Wang, Zheyong Xie, Ziyan Liu, Haofu Qian, JianZhao Huang, Fangcheng Shi, Zijie Meng, Hongcheng Guo, Mingqian He, Xinze Lyu, Yiming Lu, Ziyang Xiang, Zheyu Ye, Chengqiang Lu, Zhe Xu, Yi Wu, Yao Hu, Yan Gao, Jun Fan, Xiaolong Jiang

2025-07-21

Summary

This paper introduces RedOne, a domain-specific large language model (LLM) built for social networking services (SNS) such as social media platforms, and trained to handle their distinctive language and tasks.

What's the problem?

Typical language models applied to social networks usually target a single task at a time and adapt poorly to the new, casual, and emotional way people write there. As a result, it is hard to manage content and improve user interactions effectively.

What's the solution?

The authors developed RedOne with a three-stage training process. First, the model learns general knowledge of social network language. Second, it is fine-tuned on specific tasks such as understanding posts and matching content. Third, it is trained to align better with what users prefer. Training draws on a large dataset from real social media, which the authors use to make the model more accurate and versatile.
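The ordering of the three stages can be sketched in Python. This is a minimal illustration of the sequence only, not the authors' implementation: `ModelState`, the three stage functions, and `run_pipeline` are hypothetical names, and each stage is a placeholder that records that it ran instead of updating any real model weights.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class ModelState:
    # Hypothetical stand-in for a model checkpoint: it only logs the stages applied.
    stages_run: List[str] = field(default_factory=list)


def domain_pretraining(state: ModelState, sns_texts: List[str]) -> ModelState:
    # Stage 1 (placeholder): learn general social network language from raw text.
    return ModelState(state.stages_run + [f"domain_pretraining:{len(sns_texts)}"])


def task_fine_tuning(state: ModelState, examples: List[Tuple[str, str]]) -> ModelState:
    # Stage 2 (placeholder): learn specific tasks from (input, target) pairs.
    return ModelState(state.stages_run + [f"task_fine_tuning:{len(examples)}"])


def preference_alignment(
    state: ModelState, pairs: List[Tuple[str, str, str]]
) -> ModelState:
    # Stage 3 (placeholder): learn from (prompt, preferred, rejected) triples.
    return ModelState(state.stages_run + [f"preference_alignment:{len(pairs)}"])


def run_pipeline(
    sns_texts: List[str],
    examples: List[Tuple[str, str]],
    pairs: List[Tuple[str, str, str]],
) -> ModelState:
    # Each stage starts from the state produced by the previous one.
    state = ModelState()
    state = domain_pretraining(state, sns_texts)
    state = task_fine_tuning(state, examples)
    state = preference_alignment(state, pairs)
    return state


if __name__ == "__main__":
    final = run_pipeline(
        sns_texts=["post one", "post two"],
        examples=[("Which topic is this post about?", "travel")],
        pairs=[("Reply to this comment", "helpful reply", "off-topic reply")],
    )
    print(final.stages_run)
```

The point of the sketch is that the stages are sequential: each one takes the output of the previous stage as its starting point, so broad domain knowledge is in place before task-specific and preference training begin.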

Why does it matter?

This matters because RedOne helps platforms handle harmful content better, improves how users find and interact with information, and makes social media experiences safer and more enjoyable.

Abstract

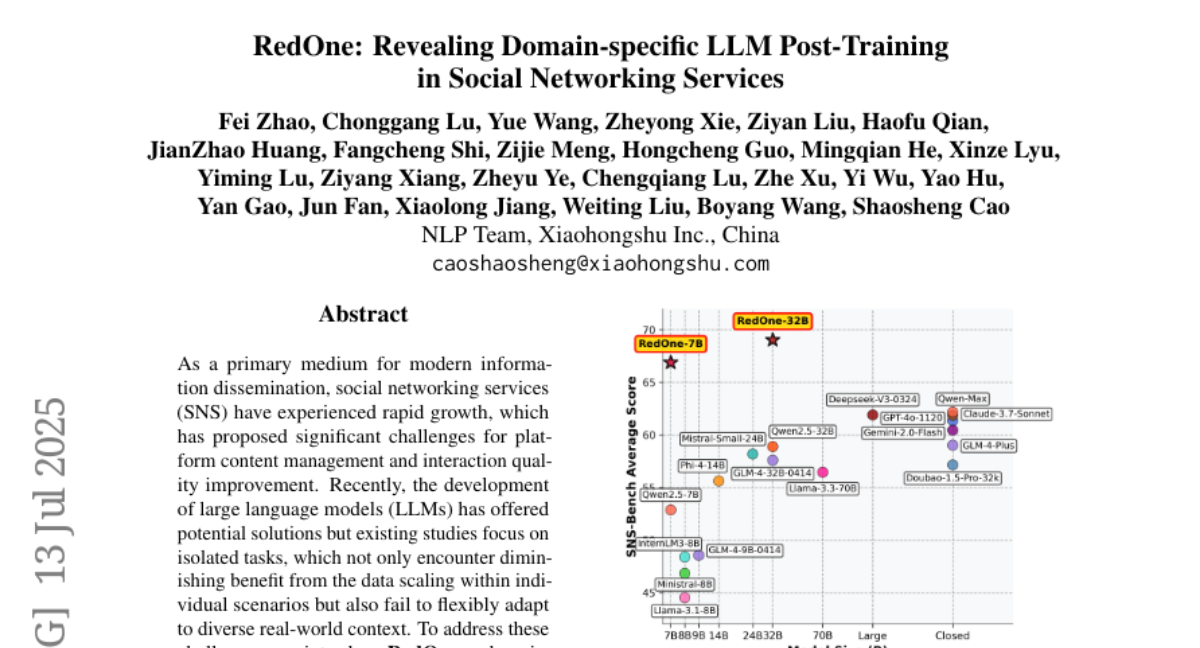

RedOne, a domain-specific LLM, enhances performance across multiple SNS tasks through a three-stage training strategy, improving generalization and reducing harmful content exposure.