Referring to Any Person

Qing Jiang, Lin Wu, Zhaoyang Zeng, Tianhe Ren, Yuda Xiong, Yihao Chen, Qin Liu, Lei Zhang

2025-03-12

Summary

This paper introduces RexSeek, an AI model that finds specific people in images from text descriptions, even when multiple people are present.

What's the problem?

Current AI models struggle to find the right person in a crowd when given a text description because they’re trained on simple examples with only one person to identify.

What's the solution?

Researchers created a new dataset (HumanRef) with complex real-world scenes and built RexSeek, which combines language understanding with object detection to handle multiple people and tricky descriptions.

Why does it matter?

This helps security systems, photo apps, or assistive tech find people more accurately in busy scenes, like spotting a missing person in a crowd or tagging friends in group photos.

Abstract

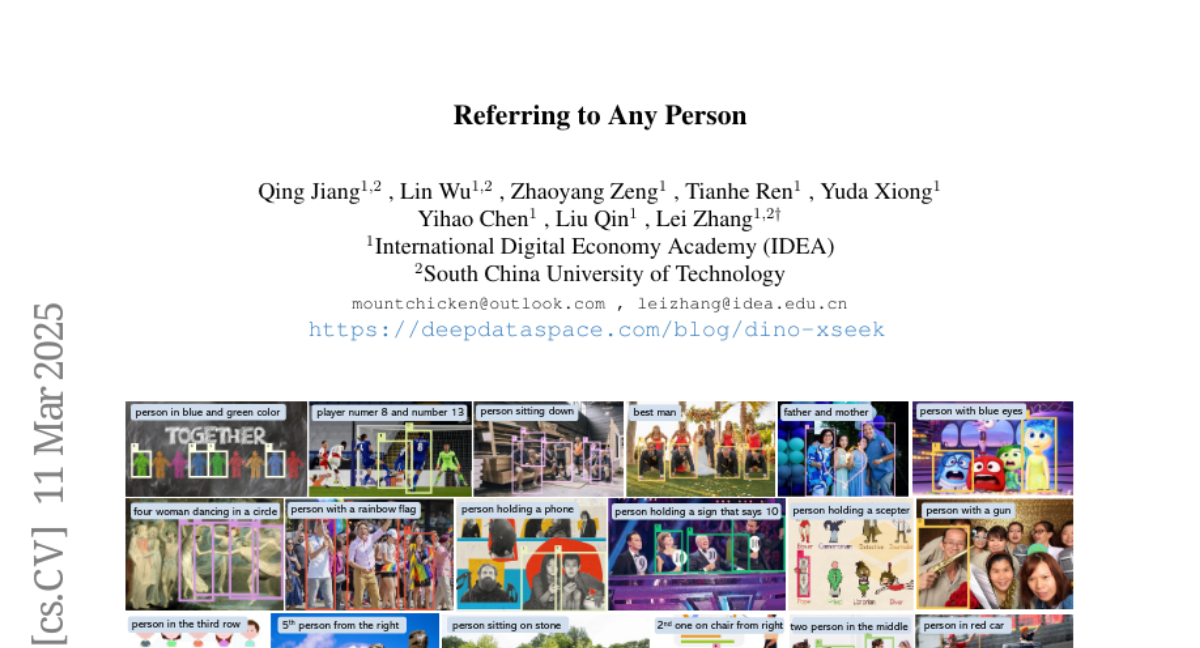

Humans are undoubtedly the most important participants in computer vision, and the ability to detect any individual given a natural language description, a task we define as referring to any person, holds substantial practical value. However, we find that existing models generally fail to achieve real-world usability, and current benchmarks are limited by their focus on one-to-one referring, which hinders progress in this area. In this work, we revisit this task from three critical perspectives: task definition, dataset design, and model architecture. We first identify five aspects of referable entities and three distinctive characteristics of this task. Next, we introduce HumanRef, a novel dataset designed to tackle these challenges and better reflect real-world applications. From a model design perspective, we integrate a multimodal large language model with an object detection framework, constructing a robust referring model named RexSeek. Experimental results reveal that state-of-the-art models, which perform well on commonly used benchmarks like RefCOCO/+/g, struggle with HumanRef due to their inability to detect multiple individuals. In contrast, RexSeek not only excels in human referring but also generalizes effectively to common object referring, making it broadly applicable across various perception tasks. Code is available at https://github.com/IDEA-Research/RexSeek
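To make the gap between one-to-one and multi-person referring concrete, the sketch below scores a set of predicted boxes against a set of ground-truth boxes for a single description. It is an illustration only: the function names, the greedy matching, and the 0.5 IoU threshold are assumptions made for this example, not the evaluation protocol of HumanRef or the RexSeek codebase.

```python
from typing import List, Tuple

# Hypothetical box format for this sketch: (x1, y1, x2, y2) in pixels.
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def precision_recall(pred: List[Box], gt: List[Box],
                     thr: float = 0.5) -> Tuple[float, float]:
    """Greedily match each prediction to at most one unmatched
    ground-truth box with IoU >= thr, then report (precision, recall)."""
    matched = set()
    true_positives = 0
    for p in pred:
        best_j, best_iou = -1, thr
        for j, g in enumerate(gt):
            if j in matched:
                continue
            value = iou(p, g)
            if value >= best_iou:
                best_j, best_iou = j, value
        if best_j >= 0:
            matched.add(best_j)
            true_positives += 1
    precision = true_positives / len(pred) if pred else 0.0
    recall = true_positives / len(gt) if gt else 0.0
    return precision, recall


# A description such as "people wearing red" that matches two people.
ground_truth = [(0, 0, 10, 10), (20, 0, 30, 10)]

# A model limited to one box per description finds only one of them.
single_box_output = [(0, 0, 10, 10)]
# A model that can return several boxes finds both.
multi_box_output = [(0, 0, 10, 10), (20, 0, 30, 10)]

print(precision_recall(single_box_output, ground_truth))
print(precision_recall(multi_box_output, ground_truth))
```

In this toy case the single-box output is fully precise but recovers only half of the referred people, which is the kind of failure a one-to-one benchmark cannot expose.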