ReflAct: World-Grounded Decision Making in LLM Agents via Goal-State Reflection

Jeonghye Kim, Sojeong Rhee, Minbeom Kim, Dohyung Kim, Sangmook Lee, Youngchul Sung, Kyomin Jung

2025-05-26

Summary

This paper introduces ReflAct, a method that helps large language model (LLM) agents make better decisions by having them repeatedly check whether their actions are moving them toward their goal and whether their reasoning stays consistent with what they actually observe in the environment.

What's the problem?

AI agents can lose track of what they are supposed to accomplish, or they can hallucinate, reasoning about objects or states that do not actually exist. These failures become more likely in long tasks that require many decisions in a row.

What's the solution?

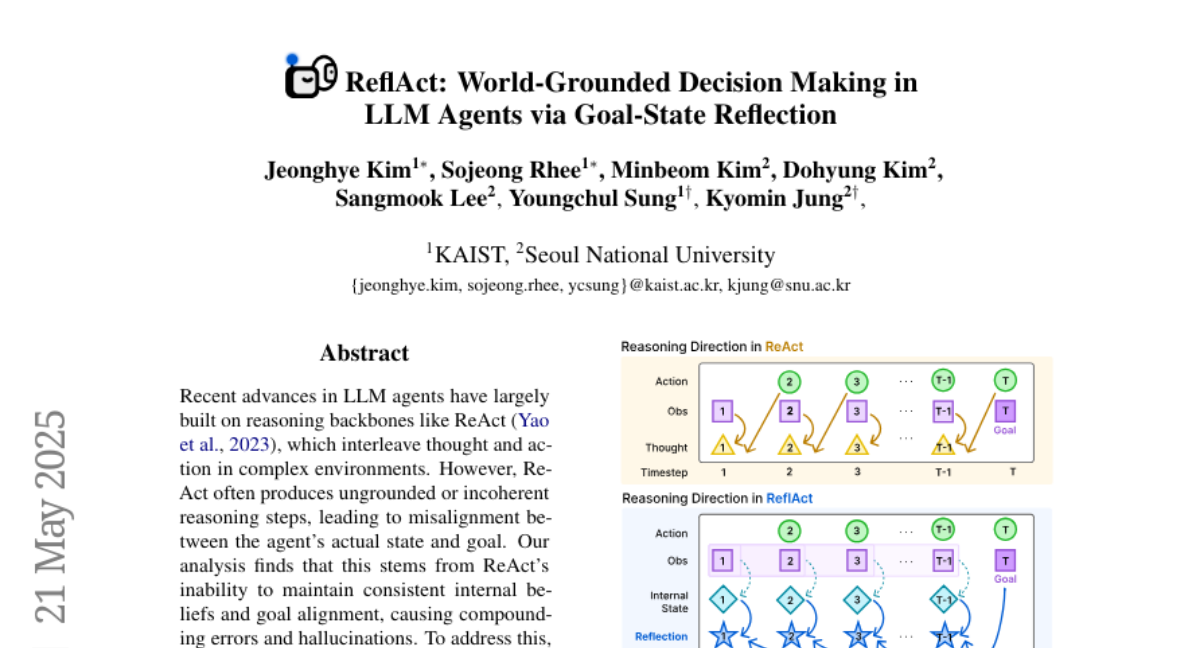

The researchers developed ReflAct, in which the agent, before each action, reflects on its current state and on how that state relates to its goal. Grounding every decision in this reflection keeps the agent focused on the task and discourages it from inventing information. This makes it more reliable than earlier approaches such as ReAct, which interleaves free-form reasoning with actions.
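To make the reflect-then-act loop concrete, here is a minimal sketch under explicit assumptions. It is not the authors' implementation: the prompt wording, the hypothetical `stub_llm` (a rule-based stand-in for a real model), the hypothetical `toy_env`, and the choice to use two separate model calls for reflection and action are all illustrative. The one idea it tries to capture is that each action is conditioned on a reflection that states the current state relative to the goal, rather than on an unconstrained thought.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

# Hypothetical LLM interface: prompt string in, completion string out.
LLM = Callable[[str], str]

@dataclass
class Trajectory:
    goal: str
    # Each step stores (reflection, action, observation).
    steps: List[Tuple[str, str, str]] = field(default_factory=list)

    def history(self) -> str:
        lines = []
        for reflection, action, obs in self.steps:
            lines += [f"Reflection: {reflection}", f"Action: {action}", f"Observation: {obs}"]
        return "\n".join(lines)

def reflact_step(llm: LLM, traj: Trajectory, observation: str) -> Tuple[str, str]:
    """Reflect on the current state relative to the goal, then act on that reflection."""
    context = f"Goal: {traj.goal}\n{traj.history()}\nCurrent observation: {observation}\n"
    # Assumed prompt: ask specifically for state-vs-goal, not a free-form thought.
    reflection = llm(context + "Reflection (current state and its relation to the goal):")
    action = llm(context + f"Reflection: {reflection}\nAction:")
    return reflection, action

def toy_env(action: str, state: dict) -> str:
    """Hypothetical two-room environment used only for illustration."""
    if action == "go to kitchen":
        state["location"] = "kitchen"
        return "You are in the kitchen. You see an apple."
    if action == "take apple" and state["location"] == "kitchen":
        state["holding"] = "apple"
        return "You pick up the apple."
    return "Nothing happens."

def stub_llm(prompt: str) -> str:
    """Rule-based stand-in for an LLM so the sketch runs offline."""
    if prompt.endswith("Action:"):
        # Act only on the most recent reflection.
        reflection = prompt.rsplit("Reflection: ", 1)[-1]
        return "take apple" if "apple is here" in reflection else "go to kitchen"
    current_obs = prompt.split("Current observation:")[-1]
    if "see an apple" in current_obs:
        return "I am in the kitchen and the apple is here; the goal needs me to hold it."
    return "I am not holding the apple and it is not here; the goal needs me to find it."

def run_episode(llm: LLM, goal: str, max_steps: int = 5) -> Tuple[Trajectory, dict]:
    state = {"location": "hallway", "holding": None}
    traj = Trajectory(goal)
    observation = "You are in the hallway."
    for _ in range(max_steps):
        reflection, action = reflact_step(llm, traj, observation)
        observation = toy_env(action, state)
        traj.steps.append((reflection, action, observation))
        if state["holding"] == "apple":
            break
    return traj, state

if __name__ == "__main__":
    traj, state = run_episode(stub_llm, "hold the apple")
    print(traj.history())
```

Swapping `stub_llm` for a real model call leaves the loop unchanged. The design choice being illustrated is only the ordering: the agent first writes down where it stands with respect to the goal, and the action prompt sees that statement, which is meant to keep later decisions anchored to the observed state.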

Why does it matter?

This matters because agents that stay aligned with their instructions and avoid hallucinated reasoning can be trusted more in real-world tasks, where accuracy and actually reaching the goal are essential.

Abstract

ReflAct, a new reasoning backbone for LLM agents, improves goal alignment and reduces hallucinations by continuously reflecting on the agent's state, surpassing ReAct and other enhanced variants.