RefVNLI: Towards Scalable Evaluation of Subject-driven Text-to-image Generation

Aviv Slobodkin, Hagai Taitelbaum, Yonatan Bitton, Brian Gordon, Michal Sokolik, Nitzan Bitton Guetta, Almog Gueta, Royi Rassin, Itay Laish, Dani Lischinski, Idan Szpektor

2025-04-25

Summary

This paper talks about RefVNLI, a new way to judge how well AI models can create images from text descriptions, especially when the image needs to match a specific subject mentioned in the text.

What's the problem?

The problem is that current methods for checking if AI-generated images really match the text and keep the important details about the main subject aren't very accurate or reliable. This makes it hard to know how good these models really are, especially when the subject in the text is very specific or unique.

What's the solution?

The researchers invented RefVNLI, a special scoring system that checks both if the image fits the text and if it keeps the main subject looking right. They tested it on lots of different categories and found that it does a better job than older methods at spotting when an image truly matches what the text asked for.

Why it matters?

This matters because it helps researchers and developers build better AI that can create images that are both accurate and faithful to what people actually want, making these tools more useful for art, design, and creative projects.

Abstract

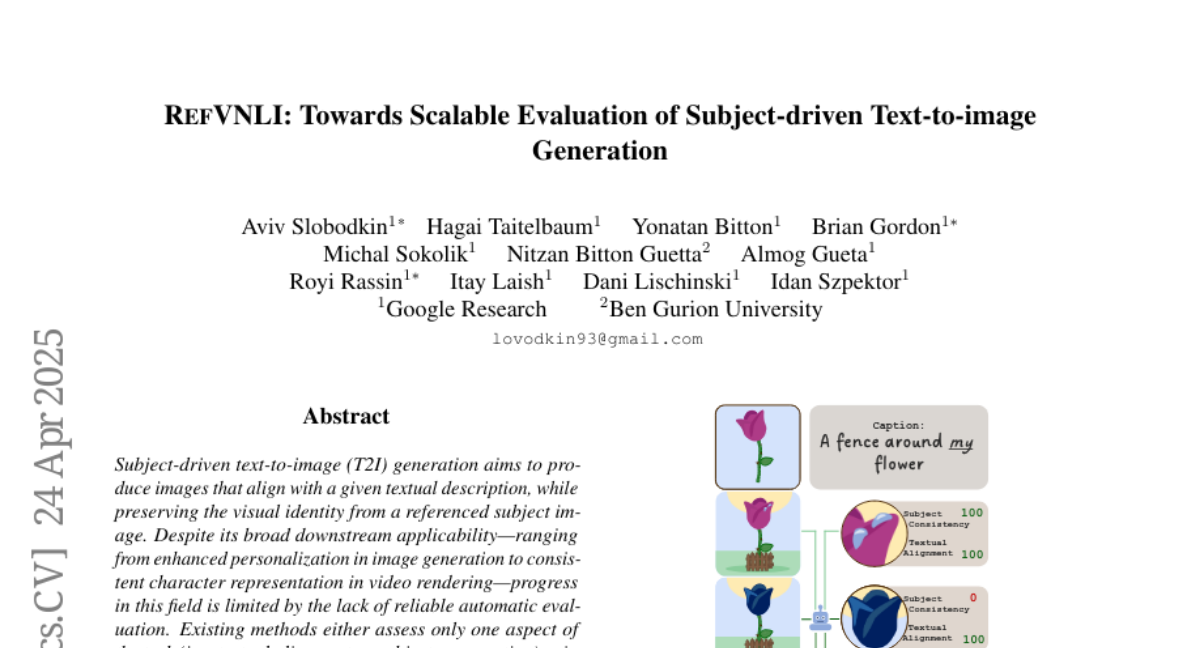

RefVNLI, a novel metric, evaluates both textual alignment and subject preservation in subject-driven text-to-image generation, outperforming existing methods across various benchmarks and subject categories.