Rep-MTL: Unleashing the Power of Representation-level Task Saliency for Multi-Task Learning

Zedong Wang, Siyuan Li, Dan Xu

2025-07-29

Summary

This paper introduces Rep-MTL, a multi-task learning method that helps a single model learn several tasks at once by identifying which parts of its internal representation matter most for each task.

What's the problem?

When a single model learns many tasks together, the tasks can interfere with each other, leaving the model worse at some of them. This effect, often called negative transfer, arises when it is unclear which shared information should serve which task.

What's the solution?

Rep-MTL addresses this at the representation level: it examines how the model encodes knowledge internally and identifies which parts of that representation are most important, or salient, for each task. This lets the model share useful knowledge between tasks without mixing things up, so the tasks support each other rather than harm one another.
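To make the idea of per-task saliency concrete, here is a minimal sketch in plain Python. It is not the paper's algorithm. It assumes one common definition of saliency, the magnitude of the gradient of a task's loss with respect to the shared representation, and uses hypothetical linear task heads with a squared-error loss. The names `task_saliency`, `cosine`, `task_a`, and `task_b` are illustrative only.

```python
import math

def task_saliency(z, w, y):
    """Saliency of each feature of z for one toy task.

    Assumes a linear head with loss L = 0.5 * (w.z - y)^2, so
    dL/dz_i = (w.z - y) * w_i. Saliency is the absolute gradient.
    """
    residual = sum(wi * zi for wi, zi in zip(w, z)) - y
    return [abs(residual * wi) for wi in w]

def cosine(a, b):
    """Cosine similarity between two saliency vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(x * x for x in b))
    return dot / (na * nb) if na and nb else 0.0

# Hypothetical shared representation with four features.
z = [1.0, 2.0, 0.5, -1.0]

# Hypothetical task heads: (weights, target) per task.
heads = {
    "task_a": ([1.0, 0.0, 0.0, 0.5], 0.0),
    "task_b": ([0.0, 1.0, 0.0, 0.5], 0.0),
}

saliency = {name: task_saliency(z, w, y) for name, (w, y) in heads.items()}

# How much the two tasks rely on the same features of z.
overlap = cosine(saliency["task_a"], saliency["task_b"])

for name, s in saliency.items():
    print(name, s)
print("overlap:", round(overlap, 3))
```

In this toy setup each task relies mostly on its own feature and only the last feature is shared, so the overlap is low. How Rep-MTL actually uses such saliencies during training is not described in this summary.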

Why does it matter?

Learning multiple tasks well at the same time can make AI systems more powerful, flexible, and efficient: one shared model can handle several problems, potentially faster and with less data than training a separate model for each.

Abstract

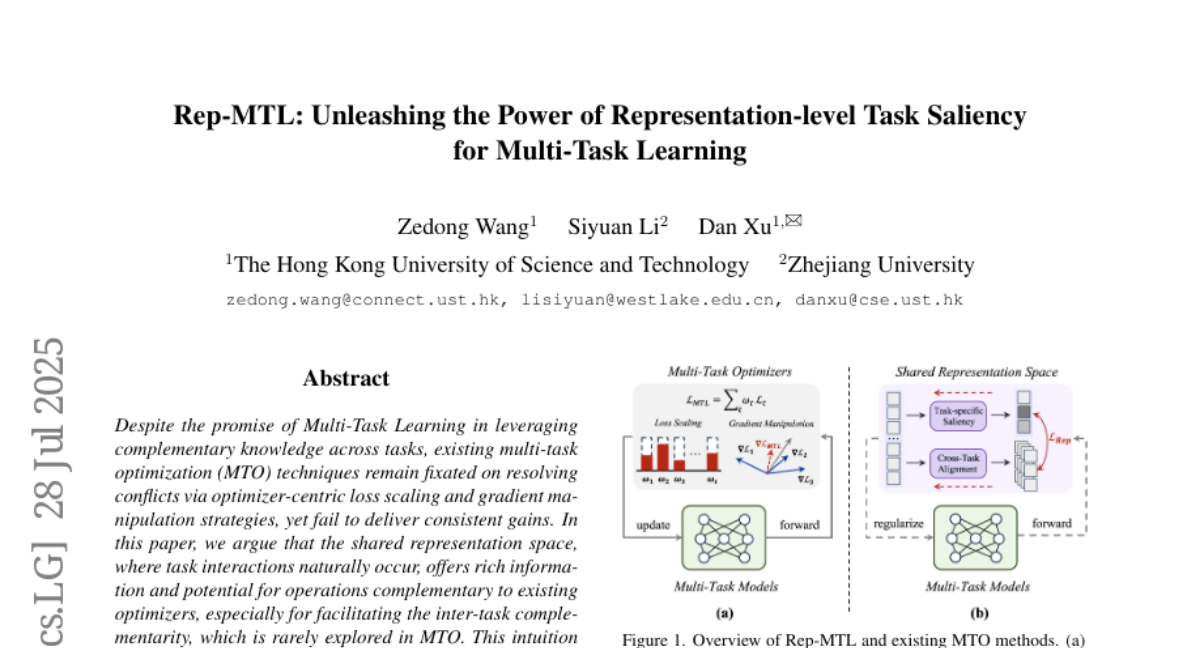

Rep-MTL enhances multi-task learning by leveraging representation-level saliency to promote task complementarity and mitigate negative transfer.