Rethinking Image Evaluation in Super-Resolution

Shaolin Su, Josep M. Rocafort, Danna Xue, David Serrano-Lozano, Lei Sun, Javier Vazquez-Corral

2025-03-25

Summary

This paper talks about how we measure the quality of AI-generated images, especially when trying to make low-resolution images look like high-resolution ones.

What's the problem?

The ways we currently measure image quality might not be accurate because the high-resolution images we use as references aren't always perfect. This can lead us to think that some AI techniques are better than they actually are.

What's the solution?

The researchers analyzed existing methods and found that the quality of the reference images does affect the results. They also created a new way to measure image quality that takes into account the potential problems with the reference images.

Why it matters?

This work matters because it helps us develop better ways to evaluate AI-generated images, which can lead to more accurate comparisons and better AI techniques overall.

Abstract

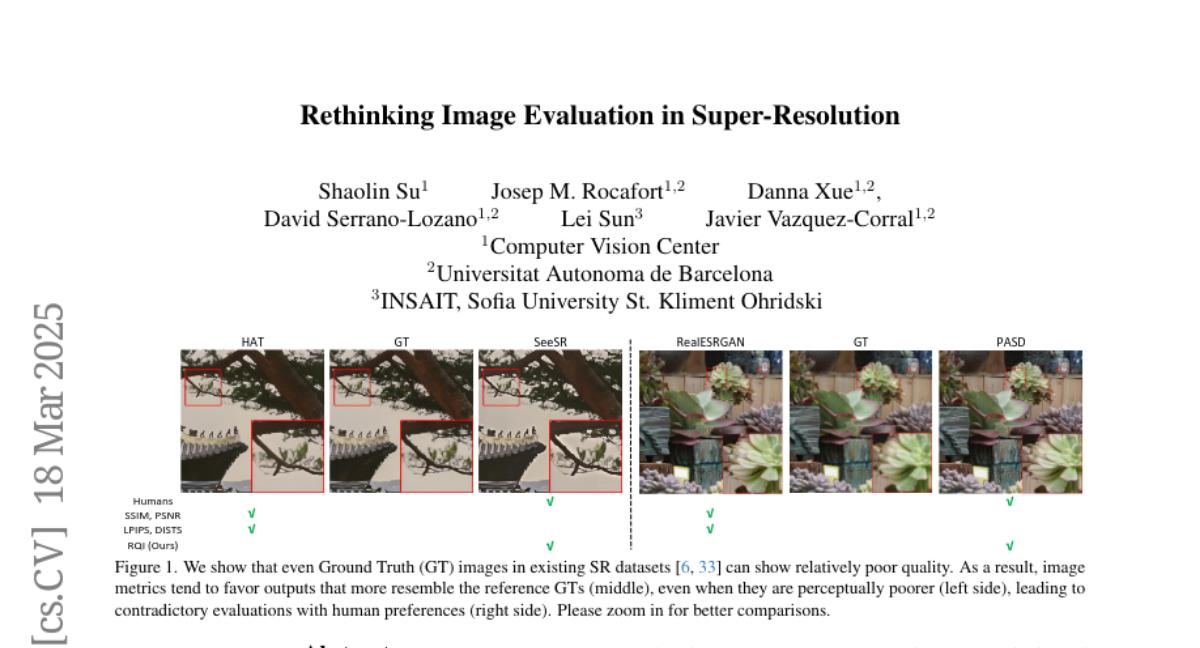

While recent advancing image super-resolution (SR) techniques are continually improving the perceptual quality of their outputs, they can usually fail in quantitative evaluations. This inconsistency leads to a growing distrust in existing image metrics for SR evaluations. Though image evaluation depends on both the metric and the reference ground truth (GT), researchers typically do not inspect the role of GTs, as they are generally accepted as `perfect' references. However, due to the data being collected in the early years and the ignorance of controlling other types of distortions, we point out that GTs in existing SR datasets can exhibit relatively poor quality, which leads to biased evaluations. Following this observation, in this paper, we are interested in the following questions: Are GT images in existing SR datasets 100% trustworthy for model evaluations? How does GT quality affect this evaluation? And how to make fair evaluations if there exist imperfect GTs? To answer these questions, this paper presents two main contributions. First, by systematically analyzing seven state-of-the-art SR models across three real-world SR datasets, we show that SR performances can be consistently affected across models by low-quality GTs, and models can perform quite differently when GT quality is controlled. Second, we propose a novel perceptual quality metric, Relative Quality Index (RQI), that measures the relative quality discrepancy of image pairs, thus issuing the biased evaluations caused by unreliable GTs. Our proposed model achieves significantly better consistency with human opinions. We expect our work to provide insights for the SR community on how future datasets, models, and metrics should be developed.