Rethinking RGB-Event Semantic Segmentation with a Novel Bidirectional Motion-enhanced Event Representation

Zhen Yao, Xiaowen Ying, Mooi Choo Chuah

2025-05-06

Summary

This paper presents a new way to help AI label every pixel of a video frame by combining regular color (RGB) images with data from event cameras, which are sensors that record changes in brightness. It also uses improved methods to handle motion and timing.

What's the problem?

AI often struggles to identify objects accurately when it combines information from regular video and event sensors. The two sources can be misaligned in timing, in spatial position, and in the way their data is represented.

What's the solution?

The researchers created a new system that uses dense optical flow, a pixel-by-pixel estimate of motion, and combines motion information from both directions in time (forward and backward). This lets the AI line up the image and event details more accurately and make better sense of what's happening in each frame.
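The "both directions in time" idea can be illustrated with a minimal Python/NumPy sketch. It assumes the optical flow fields are already given (in practice they would come from a flow estimator). It warps a feature map from the previous frame and one from the next frame onto the current time step, then simply averages them. The names `backward_warp` and `bidirectional_aggregate` are hypothetical, and plain averaging is only a stand-in for whatever learned aggregation the authors use. This is not the paper's module.

```python
import numpy as np


def backward_warp(feature: np.ndarray, flow: np.ndarray) -> np.ndarray:
    """For each output pixel p, bilinearly sample `feature` (H, W) at p + flow[p].

    flow has shape (H, W, 2): flow[..., 0] is the x (column) displacement and
    flow[..., 1] the y (row) displacement, in pixels. Sample positions that fall
    outside the image are clamped to the border.
    """
    h, w = feature.shape
    ys, xs = np.meshgrid(np.arange(h), np.arange(w), indexing="ij")
    x = np.clip(xs + flow[..., 0], 0, w - 1)
    y = np.clip(ys + flow[..., 1], 0, h - 1)
    x0 = np.floor(x).astype(int)
    y0 = np.floor(y).astype(int)
    x1 = np.minimum(x0 + 1, w - 1)
    y1 = np.minimum(y0 + 1, h - 1)
    wx = x - x0  # fractional offsets used as interpolation weights
    wy = y - y0
    top = (1 - wx) * feature[y0, x0] + wx * feature[y0, x1]
    bottom = (1 - wx) * feature[y1, x0] + wx * feature[y1, x1]
    return (1 - wy) * top + wy * bottom


def bidirectional_aggregate(prev_feat, next_feat, flow_to_prev, flow_to_next):
    """Align features from t-1 and t+1 onto time t, then average them (assumed fusion rule)."""
    from_prev = backward_warp(prev_feat, flow_to_prev)  # forward-in-time evidence
    from_next = backward_warp(next_feat, flow_to_next)  # backward-in-time evidence
    return 0.5 * (from_prev + from_next)


# Usage: a bright pixel moving one column to the right per frame.
prev = np.zeros((5, 5))
prev[2, 1] = 1.0  # position at t-1
nxt = np.zeros((5, 5))
nxt[2, 3] = 1.0  # position at t+1
flow_to_prev = np.zeros((5, 5, 2))
flow_to_prev[..., 0] = -1.0  # pixel at t came from one column to the left
flow_to_next = np.zeros((5, 5, 2))
flow_to_next[..., 0] = 1.0  # pixel at t moves one column to the right
aligned = bidirectional_aggregate(prev, nxt, flow_to_prev, flow_to_next)
print(aligned[2])
```

Both warped neighbors place the bright pixel at column 2 of row 2, so the two directions agree at time t. Averaging is used here only because it is the simplest symmetric way to combine them.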

Why does it matter?

This matters because more reliable scene labeling can improve video analysis for self-driving cars, security cameras, and any other technology that needs to understand what's happening in the real world quickly and accurately.

Abstract

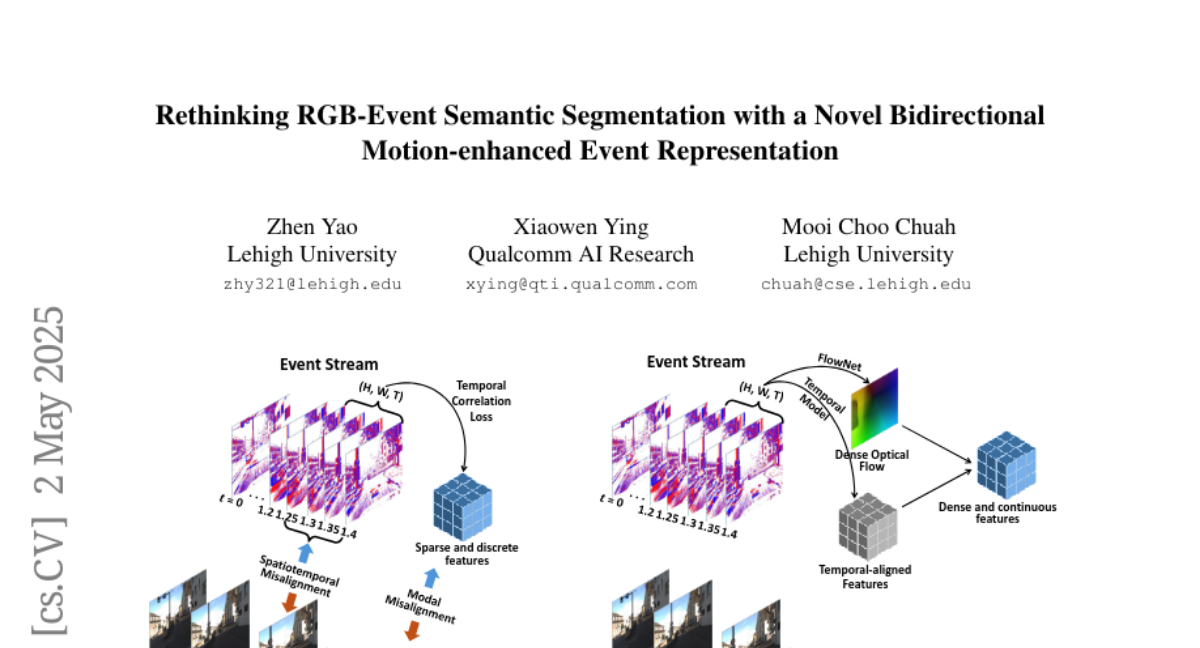

A novel event representation and fusion framework enhances RGB-Event semantic segmentation by addressing temporal, spatial, and modal misalignments using dense optical flows and bidirectional flow aggregation.
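For readers unfamiliar with event data, here is one widely used baseline for turning a raw event stream (x, y, timestamp, polarity) into a dense tensor: a temporal voxel grid with linear interpolation between time bins. This is not the paper's motion-enhanced representation, which according to the abstract additionally relies on dense optical flow. The function name `events_to_voxel_grid` and its parameters are illustrative assumptions.

```python
import numpy as np


def events_to_voxel_grid(xs, ys, ts, ps, num_bins, height, width):
    """Accumulate events into a (num_bins, height, width) tensor.

    Each event's polarity (+1 or -1) is split between the two nearest
    temporal bins with linear weights, so timing inside the window is kept
    instead of being collapsed into a single frame.
    """
    xs = np.asarray(xs, dtype=int)
    ys = np.asarray(ys, dtype=int)
    ts = np.asarray(ts, dtype=float)
    ps = np.asarray(ps, dtype=float)
    grid = np.zeros((num_bins, height, width), dtype=float)
    if ts.size == 0:
        return grid
    # Map timestamps linearly onto the range [0, num_bins - 1].
    t_min, t_max = ts.min(), ts.max()
    if t_max > t_min:
        t_norm = (ts - t_min) / (t_max - t_min) * (num_bins - 1)
    else:
        t_norm = np.zeros_like(ts)
    left = np.floor(t_norm).astype(int)
    right = np.minimum(left + 1, num_bins - 1)
    w_right = t_norm - left
    w_left = 1.0 - w_right
    # np.add.at accumulates correctly even when (bin, y, x) indices repeat.
    np.add.at(grid, (left, ys, xs), ps * w_left)
    np.add.at(grid, (right, ys, xs), ps * w_right)
    return grid


# Usage: three events on a 2x2 sensor, binned into 3 time slices.
grid = events_to_voxel_grid(
    xs=[0, 1, 0], ys=[0, 0, 1], ts=[0.0, 1.0, 0.25], ps=[1, -1, 1],
    num_bins=3, height=2, width=2,
)
print(grid)
```

The event at t = 0.25 falls halfway between bins 0 and 1, so its polarity is split equally between them. The total polarity of the stream is preserved.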