Reverse Preference Optimization for Complex Instruction Following

Xiang Huang, Ting-En Lin, Feiteng Fang, Yuchuan Wu, Hangyu Li, Yuzhong Qu, Fei Huang, Yongbin Li

2025-05-28

Summary

This paper talks about a new method called Reverse Preference Optimization (RPO) that helps AI models get better at following complicated instructions by changing the way they learn what people prefer.

What's the problem?

The problem is that large language models often have trouble understanding and following complex instructions, especially when there are lots of rules or preferences involved. This can lead to the AI making mistakes or not giving the answers people actually want.

What's the solution?

To solve this, the researchers introduced RPO, which works by flipping or reversing some of the instruction rules during training. This helps the AI learn to pick out which preferences really matter and makes it better at handling tricky or detailed instructions.

Why it matters?

This is important because if AI can follow complex instructions more accurately, it will be much more helpful in real-world situations, like assisting with homework, helping at work, or even just making conversations smoother and more useful.

Abstract

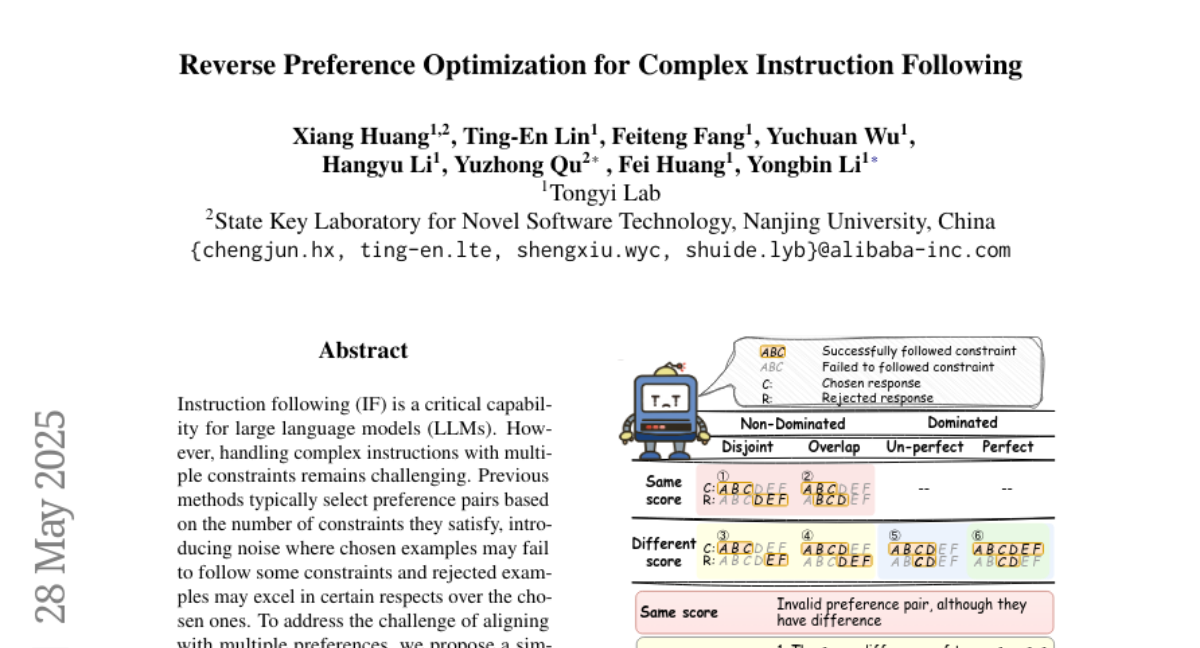

Reverse Preference Optimization (RPO) dynamically reverses instruction constraints to improve preference pair selection and optimize instruction following for large language models.