Revisiting Image Fusion for Multi-Illuminant White-Balance Correction

David Serrano-Lozano, Aditya Arora, Luis Herranz, Konstantinos G. Derpanis, Michael S. Brown, Javier Vazquez-Corral

2025-03-25

Summary

This paper improves white-balance (color) correction for photos of scenes lit by more than one light source.

What's the problem?

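Existing fusion-based methods, which correct color by linearly blending several versions of the same image rendered with preset white-balance settings, perform poorly on common multi-illuminant scenes. The datasets they rely on also lack dedicated multi-illuminant images, which limits both training and evaluation.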

What's the solution?

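The researchers propose an efficient transformer-based model that captures spatial dependencies across the white-balance presets rather than blending them linearly. They also introduce a large-scale multi-illuminant dataset of over 16,000 sRGB images rendered with five different white-balance settings, along with white-balance-corrected images, to train and test such models.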

Why does it matter?

This work matters because it can lead to better-looking photos in situations where the lighting is complex, which is common in everyday life.

Abstract

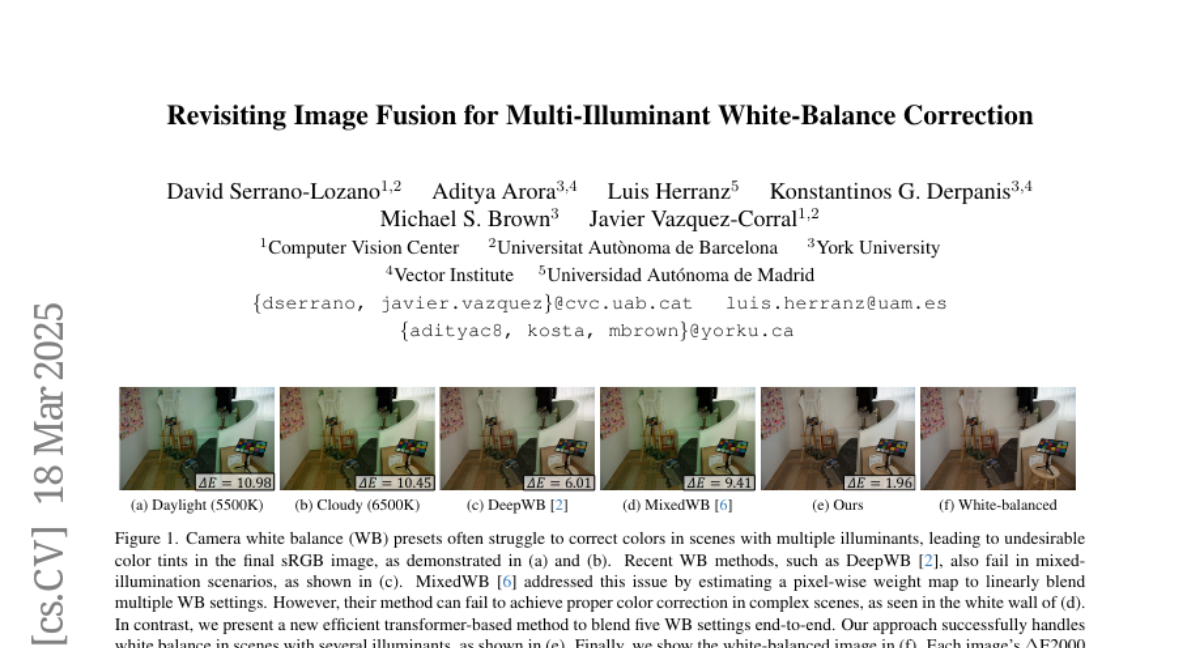

White balance (WB) correction in scenes with multiple illuminants remains a persistent challenge in computer vision. Recent methods explored fusion-based approaches, where a neural network linearly blends multiple sRGB versions of an input image, each processed with predefined WB presets. However, we demonstrate that these methods are suboptimal for common multi-illuminant scenarios. Additionally, existing fusion-based methods rely on sRGB WB datasets lacking dedicated multi-illuminant images, limiting both training and evaluation. To address these challenges, we introduce two key contributions. First, we propose an efficient transformer-based model that effectively captures spatial dependencies across sRGB WB presets, substantially improving upon linear fusion techniques. Second, we introduce a large-scale multi-illuminant dataset comprising over 16,000 sRGB images rendered with five different WB settings, along with WB-corrected images. Our method achieves up to 100% improvement over existing techniques on our new multi-illuminant image fusion dataset.
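To make the linear-fusion baseline described in the abstract concrete, the following is a minimal sketch of per-pixel linear blending of WB preset renderings. It is not the authors' implementation: the function name `blend_presets`, the array shapes, and the softmax normalisation of the weights are all assumptions made for illustration, and the weight scores here are random numbers standing in for the output of a neural network.

```python
import numpy as np


def blend_presets(presets, logits):
    """Linearly blend K preset renderings with per-pixel weights.

    presets: (K, H, W, 3) array, the same image rendered with K WB presets.
    logits:  (K, H, W) array of unnormalised per-pixel scores, one map per
             preset (hypothetical stand-in for a network's output).
    """
    # Softmax over the preset axis (an assumption of this sketch), so the
    # weights at each pixel are non-negative and sum to 1.
    shifted = logits - logits.max(axis=0, keepdims=True)
    weights = np.exp(shifted)
    weights /= weights.sum(axis=0, keepdims=True)

    # Each output pixel is a weighted sum of the K preset pixels; the same
    # scalar weight is applied to all three color channels.
    fused = (weights[..., None] * presets).sum(axis=0)
    return fused, weights


# Toy data: five presets (matching the five WB settings in the dataset)
# of a 4x6 image, with random scores in place of learned ones.
rng = np.random.default_rng(0)
presets = rng.random((5, 4, 6, 3))
logits = rng.normal(size=(5, 4, 6))
fused, weights = blend_presets(presets, logits)
```

Because each output pixel depends only on the preset values and weights at that same location, the blend itself carries no spatial context. Under these assumptions, that is the kind of limitation the abstract's model addresses by capturing spatial dependencies across the presets.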