Rewards Are Enough for Fast Photo-Realistic Text-to-image Generation

Yihong Luo, Tianyang Hu, Weijian Luo, Kenji Kawaguchi, Jing Tang

2025-03-18

Summary

This paper introduces R0, a method for generating realistic images from text that relies on reward signals rather than on diffusion distillation losses.

What's the problem?

Generating images that match complicated text descriptions and human preferences is difficult. Existing reward-enhanced diffusion distillation methods still depend on diffusion losses, which the authors argue act as an overly expensive form of regularization.

What's the solution?

R0 treats image generation as an optimization problem in data space: a search for valid images that score highly on rewards measuring how well they match the text description. It combines a new generator parameterization with regularization techniques to train few-step generators, without relying on diffusion distillation losses.
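The idea of regularized reward maximization can be illustrated with a deliberately tiny sketch. This is not the R0 implementation: the one-dimensional "generator" `x = w * z + b`, the quadratic `reward`, the penalty that pulls parameters toward their initial values, and every name and hyperparameter below are assumptions chosen only to show gradient ascent on a reward with a regularizer.

```python
import random

def reward(x, target):
    """Toy differentiable reward (assumption): higher when x is closer to target."""
    return -(x - target) ** 2

def train_generator(target, steps=300, lr=0.05, reg=0.1, batch=64, seed=0):
    """Gradient ascent on: mean reward - (reg / 2) * squared distance to initial params."""
    rng = random.Random(seed)
    w0, b0 = 1.0, 0.0  # initial parameters, a stand-in for a pretrained generator
    w, b = w0, b0
    for _ in range(steps):
        grad_w = grad_b = 0.0
        for _ in range(batch):
            z = rng.gauss(0.0, 1.0)      # input noise
            x = w * z + b                # one-step generation
            dr_dx = -2.0 * (x - target)  # gradient of the reward w.r.t. the sample
            grad_w += dr_dx * z / batch  # chain rule: dx/dw = z
            grad_b += dr_dx / batch      # chain rule: dx/db = 1
        # Reward gradient minus the gradient of the (hypothetical) regularizer.
        w += lr * (grad_w - reg * (w - w0))
        b += lr * (grad_b - reg * (b - b0))
    return w, b

def mean_reward(w, b, target, n=2000, seed=1):
    """Monte Carlo estimate of the average reward of generated samples."""
    rng = random.Random(seed)
    return sum(reward(w * rng.gauss(0.0, 1.0) + b, target) for _ in range(n)) / n

if __name__ == "__main__":
    target = 3.0
    w, b = train_generator(target)
    print("reward before:", mean_reward(1.0, 0.0, target))
    print("reward after: ", mean_reward(w, b, target))
    print("learned w, b: ", w, b)
```

In this toy setting the reward alone would drive `w` to zero, collapsing every sample onto a single point; the penalty term keeps `w` slightly above zero. That is only a loose analogy for why some form of regularization is needed when rewards dominate training.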

Why does it matter?

This work suggests that, when conditions are complex, rewards rather than diffusion losses can be the dominant training signal for text-to-image models, which could lead to faster and more efficient few-step image generation.

Abstract

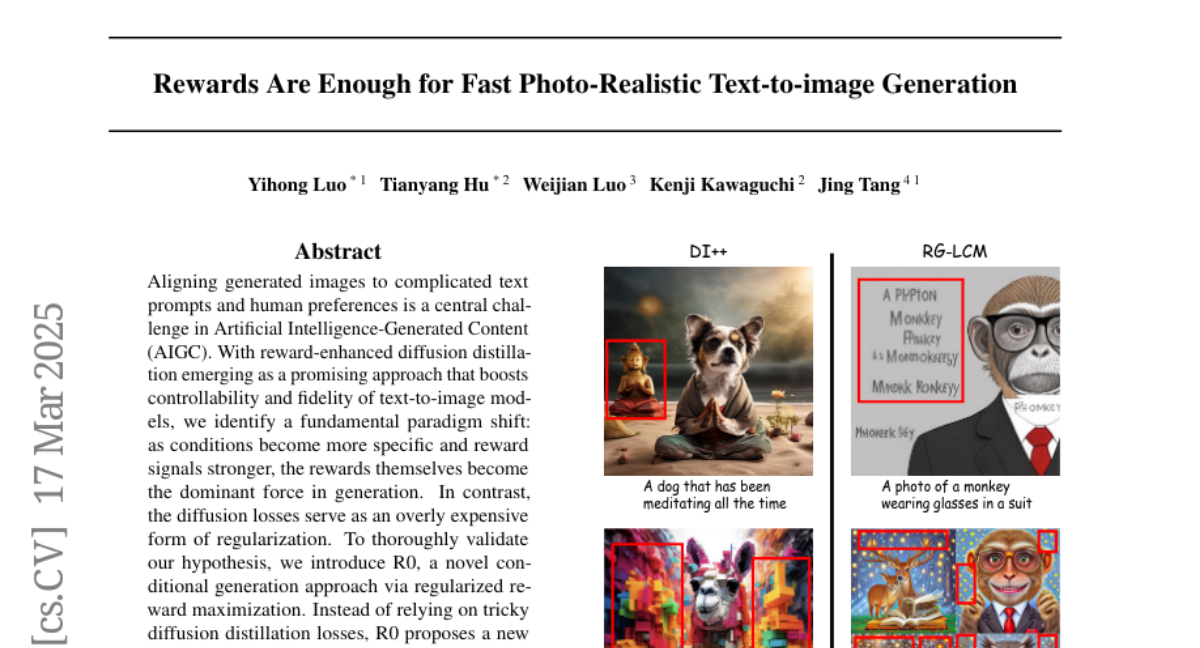

Aligning generated images to complicated text prompts and human preferences is a central challenge in Artificial Intelligence-Generated Content (AIGC). With reward-enhanced diffusion distillation emerging as a promising approach that boosts controllability and fidelity of text-to-image models, we identify a fundamental paradigm shift: as conditions become more specific and reward signals stronger, the rewards themselves become the dominant force in generation. In contrast, the diffusion losses serve as an overly expensive form of regularization. To thoroughly validate our hypothesis, we introduce R0, a novel conditional generation approach via regularized reward maximization. Instead of relying on tricky diffusion distillation losses, R0 proposes a new perspective that treats image generation as an optimization problem in data space, which aims to search for valid images that have high compositional rewards. Through innovative designs of the generator parameterization and proper regularization techniques, we train state-of-the-art few-step text-to-image generative models with R0 at scale. Our results challenge the conventional wisdom of diffusion post-training and conditional generation by demonstrating that rewards play a dominant role in scenarios with complex conditions. We hope our findings can contribute to further research into human-centric and reward-centric generation paradigms across the broader field of AIGC. Code is available at https://github.com/Luo-Yihong/R0.