Riddle Me This! Stealthy Membership Inference for Retrieval-Augmented Generation

Ali Naseh, Yuefeng Peng, Anshuman Suri, Harsh Chaudhari, Alina Oprea, Amir Houmansadr

2025-02-06

Summary

This paper presents Interrogation Attack (IA), a method for determining whether specific documents are part of the datastore used by a Retrieval-Augmented Generation (RAG) system. It does so stealthily, using natural-sounding queries that are hard to flag as malicious.

What's the problem?

RAG systems use external databases to improve the accuracy of AI-generated responses, but this creates privacy risks. Attackers can exploit these systems to infer whether certain sensitive documents are in the database, potentially exposing private information. Existing attacks often rely on jailbreaking prompts or unnatural queries, which are easier to detect and can be neutralized by the query rewriting common in RAG pipelines.

What's the solution?

The researchers developed IA, which uses natural-text queries that can be answered correctly only if the target document is in the database. These queries are much harder to detect than those of previous methods: simple detectors flag prompts from prior attacks up to ~76x more often than IA's. IA needs only about 30 queries per target document, roughly doubles TPR at 1% FPR compared with prior inference attacks, and costs less than $0.02 per document, making it a practical tool for auditing privacy vulnerabilities in RAG systems.
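The summary does not say how IA turns the RAG system's answers into a membership decision. As an illustration only, the sketch below assumes the simplest possible scheme: ask each document-specific question, check whether the answer matches the one implied by the target document, and threshold the fraction answered correctly. `ask_rag`, `contains_answer`, `membership_score`, `infer_membership`, and the threshold value are hypothetical names and parameters, not the authors' code.

```python
from typing import Callable, Sequence

# Hypothetical interface: sends one natural-language question to the target
# RAG system and returns its answer text.
AskFn = Callable[[str], str]
# Hypothetical judge: decides whether a response agrees with the expected answer.
JudgeFn = Callable[[str, str], bool]


def contains_answer(response: str, expected: str) -> bool:
    """Naive judge: case-insensitive substring match (an assumption, not the paper's)."""
    return expected.lower() in response.lower()


def membership_score(
    questions: Sequence[str],
    expected_answers: Sequence[str],
    ask_rag: AskFn,
    judge: JudgeFn = contains_answer,
) -> float:
    """Fraction of document-specific questions the RAG system answers as the
    target document would; a high value suggests the document was retrieved."""
    if len(questions) != len(expected_answers):
        raise ValueError("each question needs exactly one expected answer")
    if not questions:
        raise ValueError("need at least one question")
    correct = sum(
        judge(ask_rag(q), a) for q, a in zip(questions, expected_answers)
    )
    return correct / len(questions)


def infer_membership(score: float, threshold: float) -> bool:
    """Predict 'member' when the score reaches a threshold, assumed here to be
    calibrated on documents known to be absent from the datastore."""
    return score >= threshold


# Toy stand-in for a RAG endpoint (hypothetical): canned answers per question.
canned = {
    "Which city hosted the workshop?": "It was held in Paris.",
    "In what year was the clinic founded?": "The clinic opened in 1987.",
    "What color was the prototype?": "I don't know.",
    "How many patients enrolled?": "Seven patients enrolled.",
}
score = membership_score(
    list(canned),
    ["paris", "1987", "blue", "seven"],
    ask_rag=lambda q: canned[q],
)
print(score, infer_membership(score, threshold=0.5))
```

In this toy run three of the four questions are answered consistently with the hypothetical target document, so the score is 0.75. A real attack would need a more robust judge than substring matching, for example an LLM comparing the response with the expected answer.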

Why does it matter?

This research is important because it highlights privacy risks in RAG systems and provides insights into how these vulnerabilities can be exploited or defended against. By understanding these risks, developers can create safer AI systems that protect sensitive data while still delivering accurate and reliable responses.

Abstract

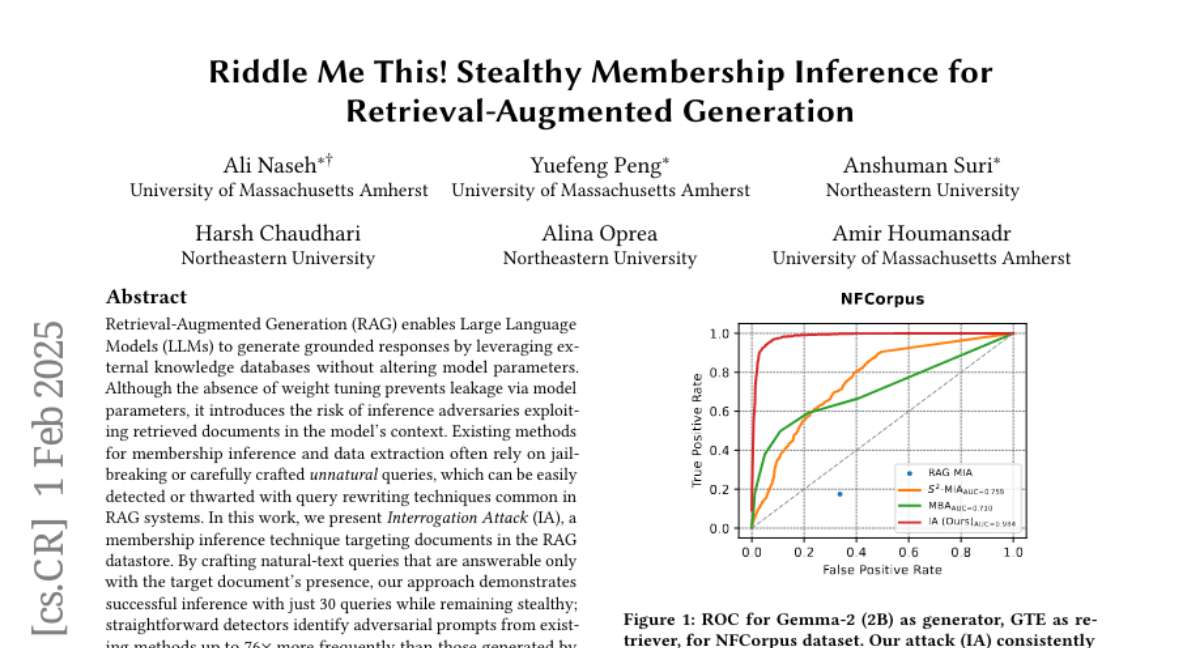

Retrieval-Augmented Generation (RAG) enables Large Language Models (LLMs) to generate grounded responses by leveraging external knowledge databases without altering model parameters. Although the absence of weight tuning prevents leakage via model parameters, it introduces the risk of inference adversaries exploiting retrieved documents in the model's context. Existing methods for membership inference and data extraction often rely on jailbreaking or carefully crafted unnatural queries, which can be easily detected or thwarted with query rewriting techniques common in RAG systems. In this work, we present Interrogation Attack (IA), a membership inference technique targeting documents in the RAG datastore. By crafting natural-text queries that are answerable only with the target document's presence, our approach demonstrates successful inference with just 30 queries while remaining stealthy; straightforward detectors identify adversarial prompts from existing methods up to ~76x more frequently than those generated by our attack. We observe a 2x improvement in TPR@1%FPR over prior inference attacks across diverse RAG configurations, all while costing less than $0.02 per document inference.
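The headline metric, TPR@1%FPR, is the true-positive rate an attack achieves when its decision threshold is set so that at most 1% of non-member documents are wrongly flagged as members. A minimal sketch of computing it from per-document scores follows; it assumes higher scores mean "more likely a member", and the function name `tpr_at_fpr` and the toy scores are illustrative, not taken from the paper.

```python
import math
from typing import Sequence


def tpr_at_fpr(
    member_scores: Sequence[float],
    nonmember_scores: Sequence[float],
    max_fpr: float = 0.01,
) -> float:
    """Best TPR over thresholds whose FPR does not exceed `max_fpr`.

    A document is predicted "member" when its score is >= the threshold.
    """
    if not member_scores or not nonmember_scores:
        raise ValueError("need scores for both members and non-members")
    # Every observed score is a candidate threshold; +inf predicts no members.
    candidates = sorted(set(member_scores) | set(nonmember_scores)) + [math.inf]
    best_tpr = 0.0
    for t in candidates:
        fpr = sum(s >= t for s in nonmember_scores) / len(nonmember_scores)
        if fpr <= max_fpr:
            tpr = sum(s >= t for s in member_scores) / len(member_scores)
            best_tpr = max(best_tpr, tpr)
    return best_tpr


# Toy scores: 3 member documents, 100 non-member documents.
members = [0.9, 0.8, 0.3]
nonmembers = [0.1] * 98 + [0.5, 0.85]
# At 1% FPR one false positive is allowed, so 2 of the 3 members are caught.
print(tpr_at_fpr(members, nonmembers, max_fpr=0.01))
```

Because only 1% of non-members may be flagged, this metric rewards attacks that are confidently right on some documents rather than slightly better on average, which is why it is commonly reported for membership inference alongside AUC.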