Right Side Up? Disentangling Orientation Understanding in MLLMs with Fine-grained Multi-axis Perception Tasks

Keanu Nichols, Nazia Tasnim, Yan Yuting, Nicholas Ikechukwu, Elva Zou, Deepti Ghadiyaram, Bryan Plummer

2025-05-29

Summary

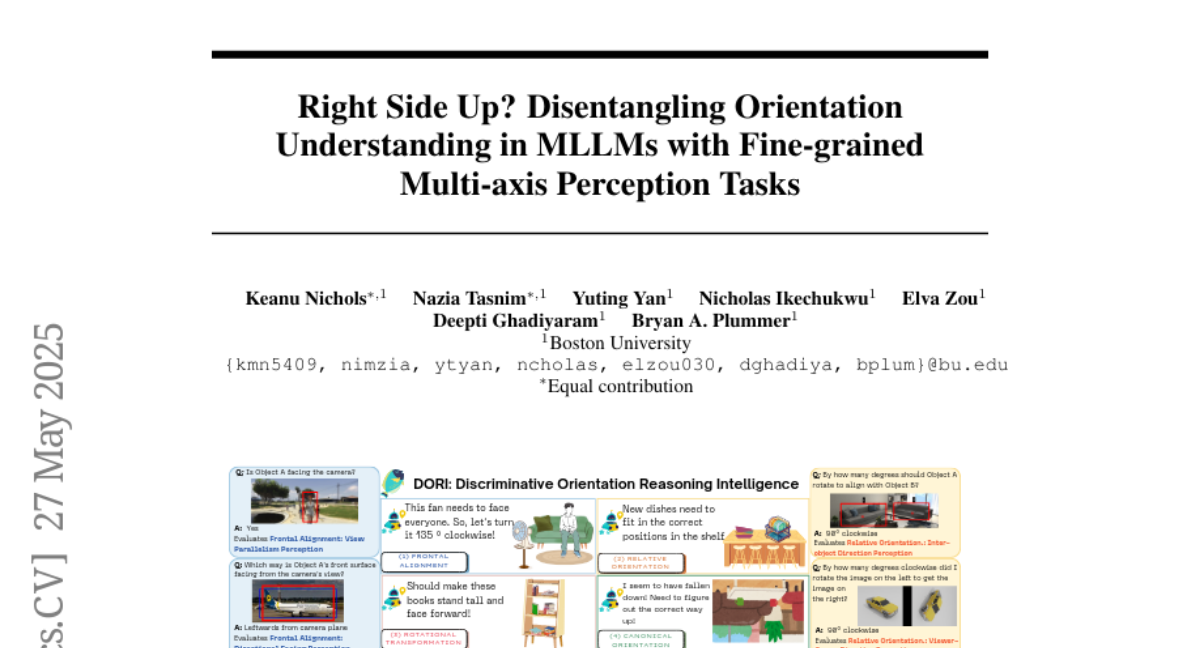

This paper introduces DORI, a new way to test how well AI models that work with both images and language can tell which way objects are facing, especially when those objects are turned or flipped in complicated ways.

What's the problem?

The problem is that current AI models often struggle to understand the exact orientation of objects in pictures, especially if the objects are rotated in more than one direction or if the point of view changes. This makes it hard for these models to give accurate answers or descriptions about what they see.

What's the solution?

The researchers created fine-grained tests that challenge these AI models with objects rotated along different axes and seen from different viewpoints. These tasks show where the models fail and why they have trouble keeping track of how objects are positioned.

Why does it matter?

This is important because understanding object orientation is a basic skill for many real-world applications, like robotics, self-driving cars, and virtual reality. Knowing where AI models fail helps researchers improve them, making future technology safer and more reliable.

Abstract

DORI evaluates object orientation perception in vision-language models, revealing critical limitations in their ability to accurately estimate and track orientations, particularly with reference frame shifts and compound rotations.