RobustDexGrasp: Robust Dexterous Grasping of General Objects from Single-view Perception

Hui Zhang, Zijian Wu, Linyi Huang, Sammy Christen, Jie Song

2025-04-10

Summary

This paper presents a reinforcement-learning system that teaches dexterous robot hands to grasp a wide range of objects from a single camera view, even when the robot can't see the whole object or the object moves unexpectedly.

What's the problem?

Earlier grasping methods usually need a full view of the object, expert demonstrations, or fixed grasp poses, so they struggle with new objects and with unexpected bumps.

What's the solution?

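The system describes each object from the hand's point of view, focusing on the local shapes that matter most for grasping. It is trained in two stages: it first imitates a teacher policy that has access to extra real-time visual and tactile feedback, then gradually switches to reinforcement learning under noisy observations and randomized dynamics, so the hand learns to adjust to surprises like shifts or pushes.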

Why does it matter?

This helps robots work better in homes, factories, or hospitals, where they need to handle random objects or recover from bumps without constant human help.

Abstract

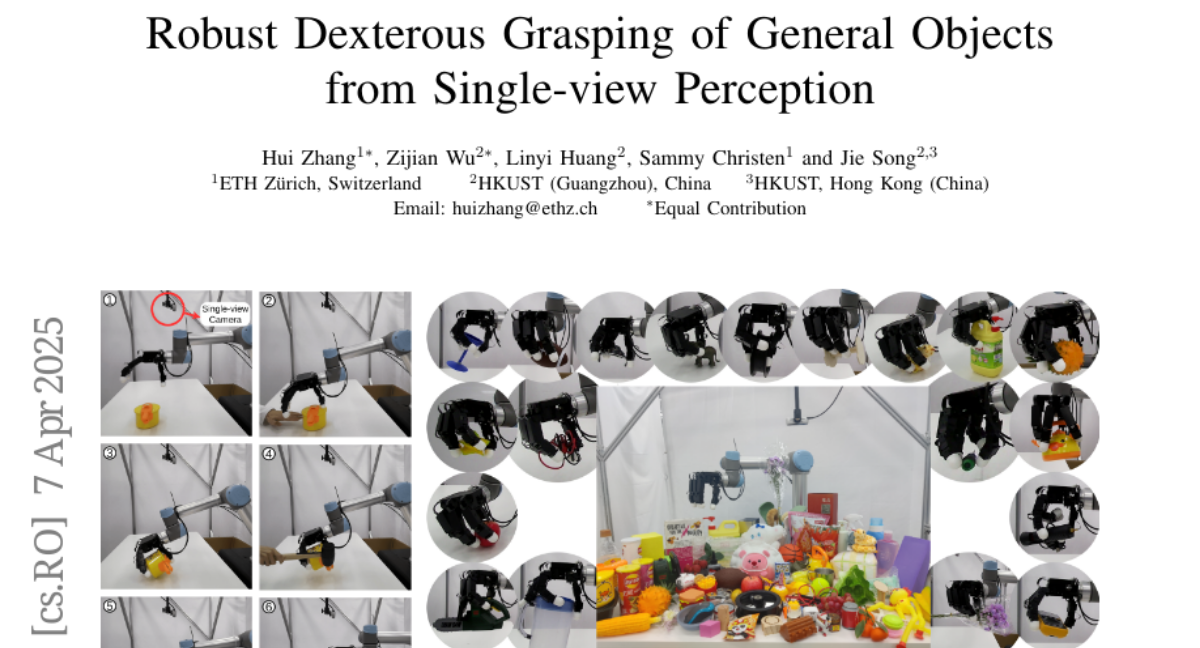

Robust grasping of various objects from single-view perception is fundamental for dexterous robots. Previous works often rely on fully observable objects, expert demonstrations, or static grasping poses, which restrict their generalization ability and adaptability to external disturbances. In this paper, we present a reinforcement-learning-based framework that enables zero-shot dynamic dexterous grasping of a wide range of unseen objects from single-view perception, while performing adaptive motions to external disturbances. We utilize a hand-centric object representation for shape feature extraction that emphasizes interaction-relevant local shapes, enhancing robustness to shape variance and uncertainty. To enable effective hand adaptation to disturbances with limited observations, we propose a mixed curriculum learning strategy, which first utilizes imitation learning to distill a policy trained with privileged real-time visual-tactile feedback, and gradually transfers to reinforcement learning to learn adaptive motions under disturbances caused by observation noises and dynamic randomization. Our experiments demonstrate strong generalization in grasping unseen objects with random poses, achieving success rates of 97.0% across 247,786 simulated objects and 94.6% across 512 real objects. We also demonstrate the robustness of our method to various disturbances, including unobserved object movement and external forces, through both quantitative and qualitative evaluations. Project Page: https://zdchan.github.io/Robust_DexGrasp/
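
The mixed curriculum described in the abstract (imitation learning first, then a gradual transfer to reinforcement learning) can be illustrated with a simple loss-blending schedule. The abstract does not state the actual schedule, so the sketch below assumes a linear decay of the imitation weight; the names `imitation_weight`, `mixed_loss`, `warmup_steps`, and `anneal_steps` are hypothetical and are not taken from the paper.

```python
def imitation_weight(step: int, warmup_steps: int, anneal_steps: int) -> float:
    """Weight on the imitation loss at a given training step.

    Assumed schedule (not from the paper): pure imitation during warm-up,
    then a linear decay to zero over `anneal_steps`.
    """
    if anneal_steps <= 0:
        raise ValueError("anneal_steps must be positive")
    if step < warmup_steps:
        return 1.0
    progress = (step - warmup_steps) / anneal_steps
    return max(0.0, 1.0 - progress)


def mixed_loss(
    imitation_loss: float,
    rl_loss: float,
    step: int,
    warmup_steps: int = 1000,
    anneal_steps: int = 4000,
) -> float:
    """Blend the two objectives: imitation dominates early, RL dominates late."""
    w = imitation_weight(step, warmup_steps, anneal_steps)
    return w * imitation_loss + (1.0 - w) * rl_loss


if __name__ == "__main__":
    # Print how the imitation weight changes over training.
    for step in (0, 1000, 3000, 5000, 8000):
        print(step, imitation_weight(step, 1000, 4000))
```

In a real training loop the two loss terms would be tensors from a policy-distillation objective and an RL objective. Scalars are used here only to keep the sketch self-contained.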