RoPECraft: Training-Free Motion Transfer with Trajectory-Guided RoPE Optimization on Diffusion Transformers

Ahmet Berke Gokmen, Yigit Ekin, Bahri Batuhan Bilecen, Aysegul Dundar

2025-05-23

Summary

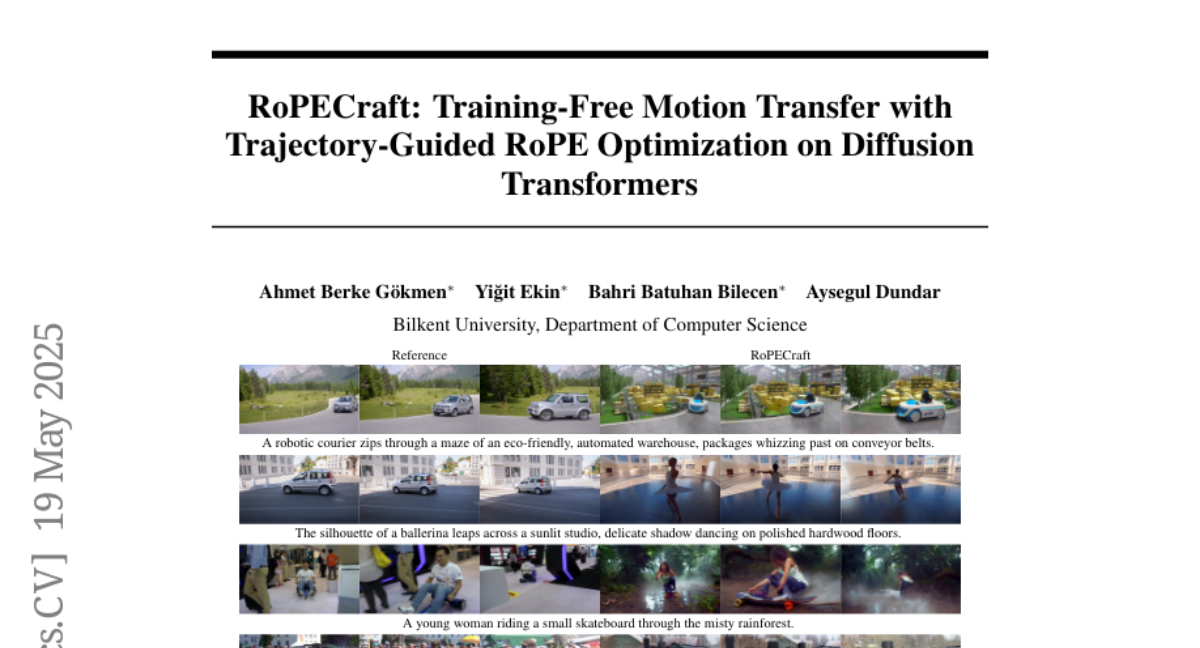

This paper introduces RoPECraft, a method that lets AI video models copy the motion from a reference video into a newly generated one without any extra training, making it easier to create videos from text instructions.

What's the problem?

When you want an AI to generate a video that follows a specific movement or action, it is hard to get the motion right and avoid visual glitches, especially without retraining the model.

What's the solution?

The researchers adjust the rotary positional embeddings (RoPE) inside diffusion transformers, the component that encodes where each token sits in the video. By guiding these adjustments with the motion trajectory of a reference video, RoPECraft transfers that movement to new videos, all without retraining the model.
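To make the idea of "adjusting positional embeddings" concrete, here is a minimal sketch of standard rotary positional embeddings in plain Python. It is not RoPECraft's optimization procedure; it only illustrates why changing the position fed into RoPE changes how two tokens attend to each other. The function names, the example vectors, and the shifted position `3.5` are hypothetical and chosen for illustration only.

```python
import math

def rope_rotate(vec, position, base=10000.0):
    """Apply standard RoPE: rotate each consecutive feature pair by an
    angle proportional to the token position (one frequency per pair)."""
    dim = len(vec)
    out = []
    for i in range(0, dim, 2):
        theta = position * base ** (-i / dim)
        c, s = math.cos(theta), math.sin(theta)
        a, b = vec[i], vec[i + 1]
        out += [a * c - b * s, a * s + b * c]
    return out

def dot(u, v):
    """Plain dot product, standing in for an attention score."""
    return sum(a * b for a, b in zip(u, v))

# Hypothetical query and key features for two tokens.
q = [1.0, 0.0, 0.5, -0.5]
k = [0.3, 0.7, -0.2, 0.1]

# The score depends only on the relative position (here, 2 in both cases).
score_a = dot(rope_rotate(q, 5.0), rope_rotate(k, 3.0))
score_b = dot(rope_rotate(q, 12.0), rope_rotate(k, 10.0))

# Nudging the key's position (an assumed stand-in for a motion-derived
# displacement) changes the score without touching any model weights.
score_c = dot(rope_rotate(q, 5.0), rope_rotate(k, 3.5))
```

Because the attention score depends on relative positions, shifting the positions passed to RoPE is one way to change which tokens attend to each other while leaving the trained weights untouched, which is consistent with the method being training-free.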

Why does it matter?

This matters because motion can be transferred without retraining the model, which makes text-guided video generation more controllable and reduces visual artifacts. That is useful for animation, entertainment, and other creative projects.

Abstract

RoPECraft is a training-free method that modifies rotary positional embeddings in diffusion transformers to transfer motion from reference videos, enhancing text-guided video generation and reducing artifacts.