S^2R: Teaching LLMs to Self-verify and Self-correct via Reinforcement Learning

Ruotian Ma, Peisong Wang, Cheng Liu, Xingyan Liu, Jiaqi Chen, Bang Zhang, Xin Zhou, Nan Du, Jia Li

2025-02-21

Summary

This paper talks about using artificial intelligence, specifically reinforcement learning (RL), to create better quantum error-correcting codes. These codes are crucial for making quantum computers work reliably by protecting them from errors.

What's the problem?

Quantum computers are very sensitive to errors, and we need special codes to protect them. Current codes often require complex measurements that are hard to implement and can introduce more errors. We need simpler, more efficient codes, especially for near-future quantum computers that aren't very large yet.

What's the solution?

The researchers developed a new way to use reinforcement learning to design quantum error-correcting codes. Their method focuses on reducing the 'weight' of the measurements needed, which makes the codes simpler and more practical. They were able to create new codes that perform much better than existing ones, especially for smaller quantum computers that we might build in the near future.

Why it matters?

This matters because it could speed up the development of practical, fault-tolerant quantum computers. The new codes they found could reduce the number of physical qubits needed by 10 to 100 times compared to current methods. This makes it much more feasible to build useful quantum computers sooner. It also shows that AI can be a powerful tool for solving complex problems in quantum computing, potentially leading to more breakthroughs in the future.

Abstract

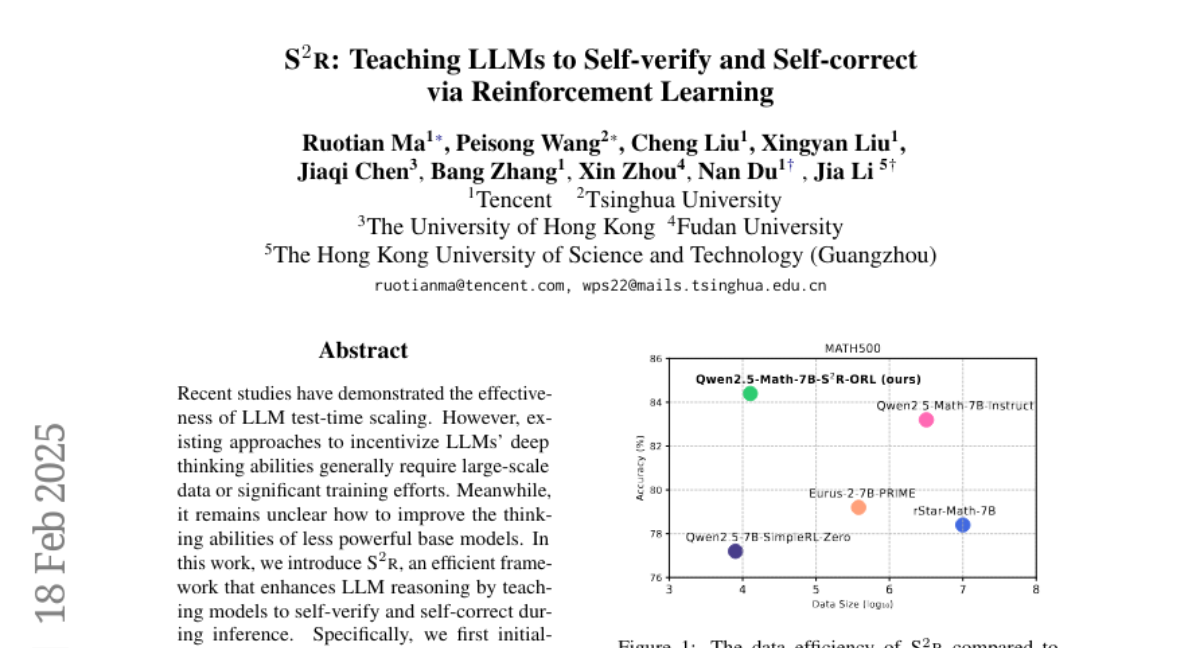

Recent studies have demonstrated the effectiveness of LLM test-time scaling. However, existing approaches to incentivize LLMs' deep thinking abilities generally require large-scale data or significant training efforts. Meanwhile, it remains unclear how to improve the thinking abilities of less powerful base models. In this work, we introduce S^2R, an efficient framework that enhances LLM reasoning by teaching models to self-verify and self-correct during inference. Specifically, we first initialize LLMs with iterative self-verification and self-correction behaviors through supervised fine-tuning on carefully curated data. The self-verification and self-correction skills are then further strengthened by both outcome-level and process-level reinforcement learning, with minimized resource requirements, enabling the model to adaptively refine its reasoning process during inference. Our results demonstrate that, with only 3.1k self-verifying and self-correcting behavior initialization samples, Qwen2.5-math-7B achieves an accuracy improvement from 51.0\% to 81.6\%, outperforming models trained on an equivalent amount of long-CoT distilled data. Extensive experiments and analysis based on three base models across both in-domain and out-of-domain benchmarks validate the effectiveness of S^2R. Our code and data are available at https://github.com/NineAbyss/S2R.