Sailing AI by the Stars: A Survey of Learning from Rewards in Post-Training and Test-Time Scaling of Large Language Models

Xiaobao Wu

2025-05-12

Summary

This paper talks about how large language models can get better at giving answers people like by learning from rewards, which means they get feedback and adjust their responses based on what works best.

What's the problem?

The problem is that even the smartest language models don't always give answers that match what people actually want or expect. Without a way to guide their responses, these models can be unhelpful or even make mistakes.

What's the solution?

The researchers reviewed different methods where language models are trained or adjusted using rewards, like reinforcement learning and special ways of picking answers based on feedback. These strategies help the models learn from experience and improve over time, making their answers more useful and aligned with human preferences.

Why it matters?

This matters because it helps create AI that is more helpful, trustworthy, and responsive to what people really need. By learning from rewards and feedback, language models can become better assistants in school, work, and everyday life.

Abstract

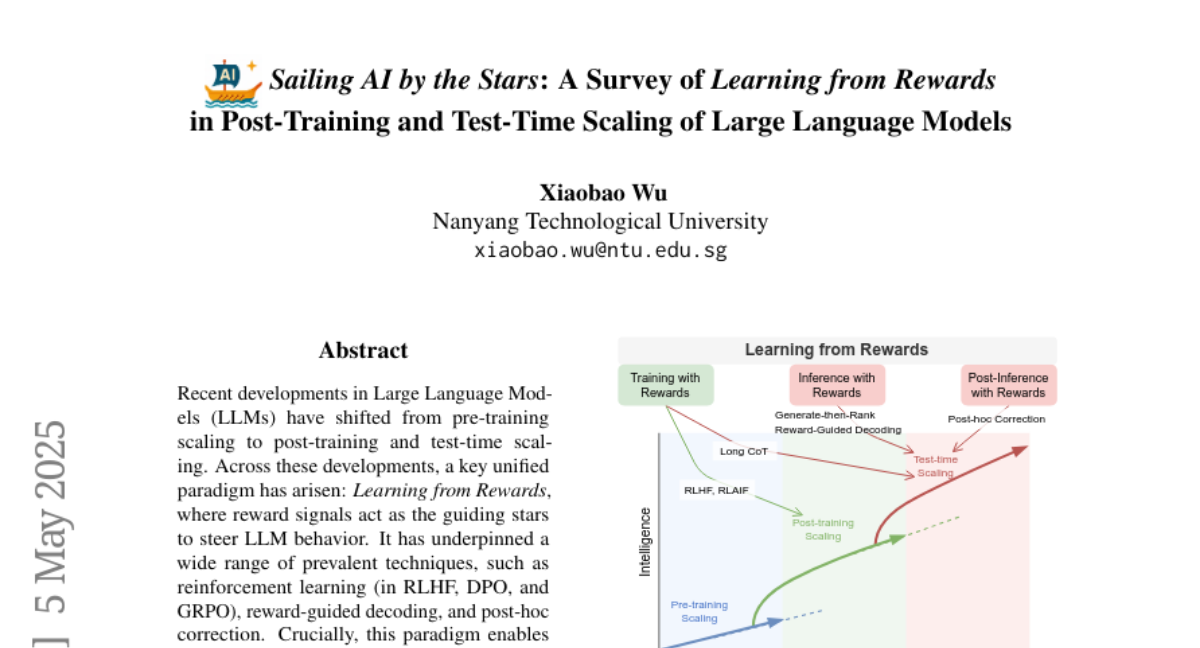

A survey of the Learning from Rewards paradigm in Large Language Models, encompassing reinforcement learning techniques and reward-guided decoding strategies, enabling dynamic feedback and aligned preferences.