SAM4D: Segment Anything in Camera and LiDAR Streams

Jianyun Xu, Song Wang, Ziqian Ni, Chunyong Hu, Sheng Yang, Jianke Zhu, Qiang Li

2025-06-27

Summary

This paper introduces SAM4D, an AI model that helps self-driving cars recognize and separate the objects around them by segmenting both camera images and LiDAR point clouds over time.

What's the problem?

Self-driving cars struggle to identify objects accurately because camera and LiDAR data are usually processed separately and one frame at a time. This makes it hard to keep object identities consistent as the scene moves.

What's the solution?

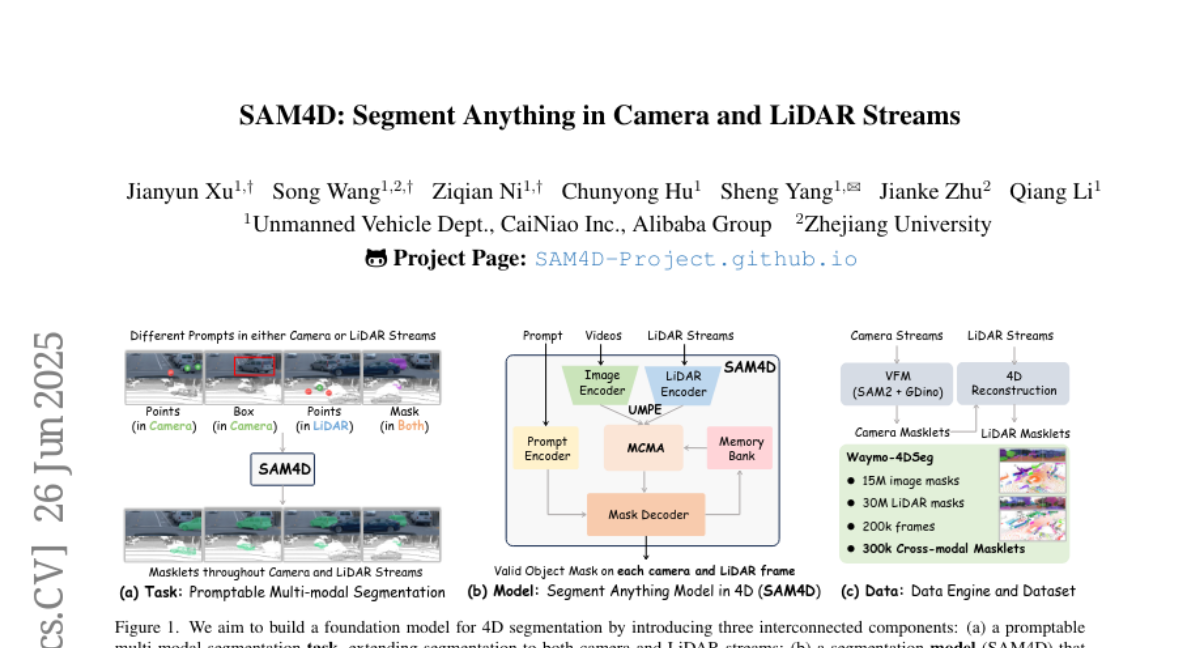

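The researchers developed SAM4D, which combines camera and LiDAR information in a single model. A Unified Multi-modal Positional Encoding aligns features from both sensors in a shared 3D space, and a Motion-aware Cross-modal Memory Attention keeps track of objects across frames. They also built an automated data engine that generates training labels (pseudo-labels) by combining video segmentation with 3D sensor data, which reduces the need for manual annotation and improves the model's learning.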
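The summary does not describe how the data engine combines video and 3D data. One common building block for this kind of cross-modal labeling is transferring 2D video masks onto LiDAR points by projecting each point into the camera image. The following is a simplified, hypothetical illustration of that single step, not the authors' engine: it assumes one calibrated pinhole camera with intrinsics `K`, a known LiDAR-to-camera transform `T_cam_from_lidar`, and an integer instance-id mask produced by some video segmenter. The function name `lift_mask_to_points` is introduced here for illustration.

```python
import numpy as np


def lift_mask_to_points(points_lidar, mask, K, T_cam_from_lidar):
    """Hypothetical helper: give each LiDAR point the id of the mask pixel it hits.

    points_lidar: (N, 3) points in the LiDAR frame.
    mask: (H, W) integer instance-id map from a video segmenter; 0 = background.
    K: (3, 3) pinhole camera intrinsics (assumed known).
    T_cam_from_lidar: (4, 4) rigid transform from the LiDAR to the camera frame.
    Returns an (N,) array of ids; points behind the camera or outside the
    image get -1. Occlusion is NOT handled in this sketch.
    """
    n = points_lidar.shape[0]
    homo = np.hstack([points_lidar, np.ones((n, 1))])   # (N, 4) homogeneous coords
    cam = (T_cam_from_lidar @ homo.T).T[:, :3]          # (N, 3) in camera frame
    labels = np.full(n, -1, dtype=np.int64)

    in_front = cam[:, 2] > 1e-6                         # keep points with positive depth
    uvw = (K @ cam[in_front].T).T                       # (M, 3) projected coords
    u = np.floor(uvw[:, 0] / uvw[:, 2]).astype(np.int64)  # pixel column
    v = np.floor(uvw[:, 1] / uvw[:, 2]).astype(np.int64)  # pixel row

    h, w = mask.shape
    inside = (u >= 0) & (u < w) & (v >= 0) & (v < h)    # drop points off the image
    idx = np.flatnonzero(in_front)[inside]              # map back to original indices
    labels[idx] = mask[v[inside], u[inside]]
    return labels


# Usage: a 4x4 image whose pixel (row 2, col 2) belongs to instance 7.
K = np.array([[100.0, 0.0, 2.0], [0.0, 100.0, 2.0], [0.0, 0.0, 1.0]])
mask = np.zeros((4, 4), dtype=np.int64)
mask[2, 2] = 7
pts = np.array([[0.0, 0.0, 10.0], [0.0, 0.0, -5.0], [1.0, 0.0, 1.0], [-0.1, -0.1, 10.0]])
print(lift_mask_to_points(pts, mask, K, np.eye(4)))  # [ 7 -1 -1  0]
```

Because this sketch ignores occlusion, a point hidden behind a foreground object would inherit that object's label; a practical engine would need depth checks, multi-view fusion, or temporal consistency to filter such errors.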

Why does it matter?

More accurate and temporally consistent segmentation across cameras and LiDAR helps self-driving cars understand their surroundings, which supports safer and more reliable autonomous driving in real-world conditions.

Abstract

SAM4D is a multi-modal and temporal foundation model for promptable segmentation across camera and LiDAR streams in autonomous driving. It introduces Unified Multi-modal Positional Encoding (UMPE) and Motion-aware Cross-modal Memory Attention (MCMA), and it is trained on pseudo-labels generated by a multi-modal automated data engine.
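The abstract names UMPE and MCMA without specifying their internals. As a hypothetical sketch of the two ideas they point to, the code below gives camera and LiDAR tokens the same sinusoidal encoding of their metric 3D positions, so both modalities share one coordinate frame, and it moves memory tokens from the previous frame into the current ego frame before attending to them. The function names, the sinusoidal encoding, the single-head dot-product attention, and the 4x4 transform `T_curr_from_prev` are all assumptions made for illustration, not the paper's design.

```python
import numpy as np


def sinusoidal_pe_3d(xyz, num_freqs=4):
    """Assumed encoding: map (N, 3) metric positions to (N, 6 * num_freqs) sin/cos features."""
    freqs = 2.0 ** np.arange(num_freqs)                     # (F,) frequencies 1, 2, 4, ...
    angles = xyz[:, :, None] * freqs[None, None, :]         # (N, 3, F)
    enc = np.concatenate([np.sin(angles), np.cos(angles)], axis=-1)  # (N, 3, 2F)
    return enc.reshape(xyz.shape[0], -1)


def compensate_ego_motion(xyz_prev, T_curr_from_prev):
    """Re-express previous-frame positions (N, 3) in the current ego frame."""
    homo = np.hstack([xyz_prev, np.ones((xyz_prev.shape[0], 1))])
    return (T_curr_from_prev @ homo.T).T[:, :3]


def memory_cross_attention(q_feat, q_xyz, m_feat, m_xyz_prev, T_curr_from_prev, num_freqs=4):
    """Hypothetical single-head attention from current tokens to memory tokens.

    Query tokens may come from camera or LiDAR; all positions are 3D points in
    a shared frame. Feature dim must equal 6 * num_freqs so the encoding can be added.
    """
    m_xyz = compensate_ego_motion(m_xyz_prev, T_curr_from_prev)  # align memory to now
    q = q_feat + sinusoidal_pe_3d(q_xyz, num_freqs)
    k = m_feat + sinusoidal_pe_3d(m_xyz, num_freqs)
    scores = q @ k.T / np.sqrt(q.shape[1])                  # (Nq, Nm) scaled dot products
    scores -= scores.max(axis=1, keepdims=True)             # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=1, keepdims=True)           # softmax over memory tokens
    return weights @ m_feat, weights                        # memory features as values


# Usage: the ego vehicle drove 2 m forward along x between frames, so a static
# point at x = 5 in the previous frame sits at x = 3 in the current frame.
T = np.eye(4)
T[0, 3] = -2.0
mem_xyz_prev = np.array([[5.0, 0.0, 0.0], [8.0, 0.0, 0.0]])
out, w = memory_cross_attention(np.zeros((1, 24)), np.array([[3.0, 0.0, 0.0]]),
                                np.zeros((2, 24)), mem_xyz_prev, T)
print(w)  # the first memory token, now at x = 3, receives the larger weight
```

Without the ego-motion step, a static object's memory token would keep its old coordinates after the car moves, and the positional term would steer attention away from the matching current token.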