Sentient Agent as a Judge: Evaluating Higher-Order Social Cognition in Large Language Models

Bang Zhang, Ruotian Ma, Qingxuan Jiang, Peisong Wang, Jiaqi Chen, Zheng Xie, Xingyu Chen, Yue Wang, Fanghua Ye, Jian Li, Yifan Yang, Zhaopeng Tu, Xiaolong Li

2025-05-09

Summary

This paper introduces SAGE (Sentient Agent as a Judge), an automated framework for testing how well large language models understand and respond to complex social situations, including a conversation partner's emotions and inner thoughts.

What's the problem?

AI models keep getting better at chatting and answering questions, but it is still unclear whether they can pick up on deeper social cues, such as showing empathy or reading between the lines, that real human-like conversation depends on.

What's the solution?

The researchers created SAGE, an automated framework that puts language models through simulated multi-turn conversations with a partner that has human-like emotions and inner thoughts. The framework then checks whether the model can handle these higher-order social skills as the dialogue unfolds.
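To make the idea of a simulated multi-turn evaluation concrete, here is a minimal toy sketch. It is not the SAGE implementation: the `SimulatedUser` class, the 0-100 emotion scale, the keyword rule, and the `warm` and `blunt` reply functions are all hypothetical stand-ins chosen for illustration. A real setup would presumably use a language model on both sides of the conversation.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class SimulatedUser:
    """Hypothetical simulated conversation partner (illustrative only)."""
    emotion: int = 50  # assumed 0-100 scale, not taken from the paper
    thoughts: List[str] = field(default_factory=list)

    def react(self, reply: str) -> None:
        # Toy rule: replies that acknowledge feelings raise the score,
        # all other replies lower it. The score is clamped to 0-100.
        text = reply.lower()
        empathetic = any(w in text for w in ("sorry", "understand", "hear you"))
        delta = 10 if empathetic else -10
        self.emotion = max(0, min(100, self.emotion + delta))
        self.thoughts.append("felt heard" if empathetic else "felt dismissed")


def run_dialogue(model: Callable[[str], str], user_turns: List[str]) -> SimulatedUser:
    """Feed each user turn to the model and let the simulated user react."""
    user = SimulatedUser()
    for turn in user_turns:
        user.react(model(turn))
    return user


# Two hypothetical "models" that always answer the same way.
def warm(turn: str) -> str:
    return "I'm sorry, I understand why that hurts."


def blunt(turn: str) -> str:
    return "Just move on."


turns = ["I failed my exam.", "I studied for weeks.", "I feel useless."]
warm_result = run_dialogue(warm, turns)
blunt_result = run_dialogue(blunt, turns)
print(warm_result.emotion, blunt_result.emotion)
```

The final emotion score plays the role of the evaluation signal here, and the recorded thoughts give a turn-by-turn trace of why the score moved.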

Why does it matter?

If AI can better understand emotions and social situations, it can become a more helpful and trustworthy assistant in areas like mental health, education, and customer support. Testing these abilities is a key step toward making AI more supportive in real-life interactions.

Abstract

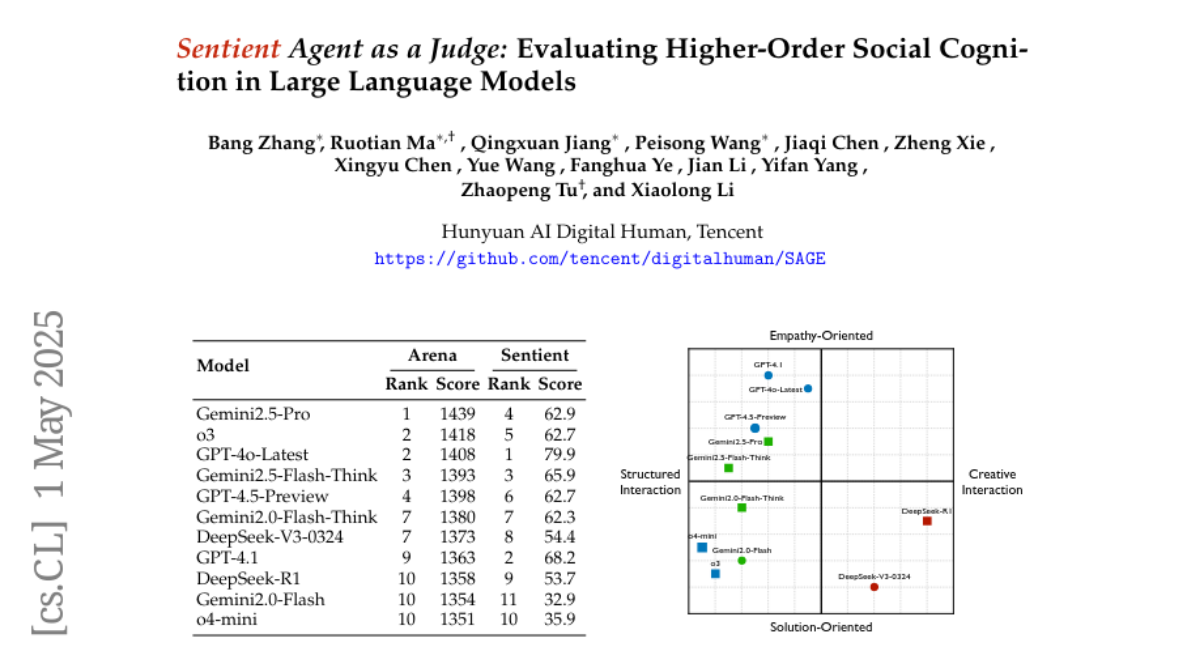

SAGE, an automated evaluation framework, assesses the higher-order social cognition and empathy of large language models through simulated human-like emotions and inner thoughts in multi-turn conversations.