SeqTex: Generate Mesh Textures in Video Sequence

Ze Yuan, Xin Yu, Yangtian Sun, Yuan-Chen Guo, Yan-Pei Cao, Ding Liang, Xiaojuan Qi

2025-07-08

Summary

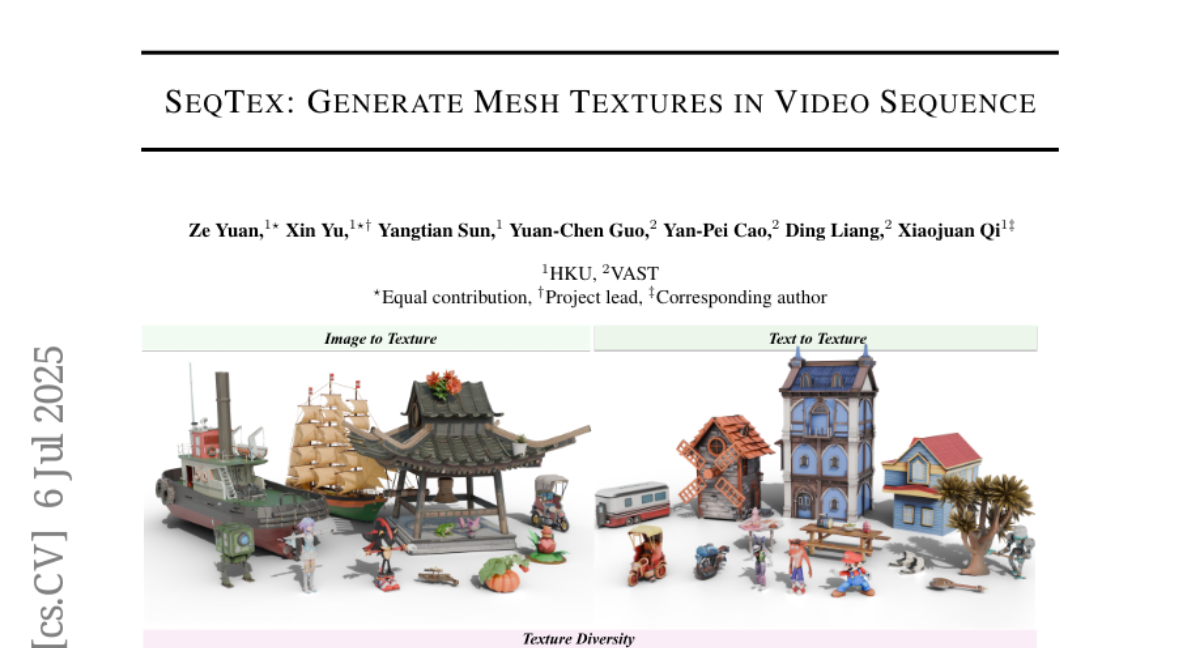

This paper introduces SeqTex, a framework that creates detailed textures for 3D models by treating texture generation as a video-sequence generation task. It uses pretrained video models to produce high-quality UV texture maps that fit well on 3D surfaces and stay consistent across viewpoints.

What's the problem?

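The problem is that generating good textures for 3D models is challenging: a texture must be accurate and look seamless from every viewing angle. Many existing methods first generate separate images of the object from several viewpoints and then project them onto the texture map in a post-processing step, and they struggle to keep the result both consistent and detailed.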
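To make the consistency problem concrete, here is a toy sketch (not taken from the paper) of a naive merge step. If each viewpoint is generated independently and the results are then combined into one shared texture, a texel seen by two disagreeing views ends up with a blended color that matches neither view. The function names, texel labels, and colors are hypothetical, and real pipelines use more elaborate projection and blending than a plain average.

```python
from statistics import mean

Color = tuple[int, int, int]  # (R, G, B)


def bake_by_averaging(per_view_colors: dict[str, list[Color]]) -> dict[str, Color]:
    """Toy baking step: merge every view's prediction for a texel by averaging."""
    baked = {}
    for texel, colors in per_view_colors.items():
        # Average each RGB channel over all views that see this texel.
        baked[texel] = tuple(round(mean(c[ch] for c in colors)) for ch in range(3))
    return baked


def max_view_disagreement(colors: list[Color]) -> int:
    """Largest per-channel gap between the views that predicted one texel."""
    return max(max(c[ch] for c in colors) - min(c[ch] for c in colors) for ch in range(3))


# Hypothetical predictions: texel_0 is seen by two views that disagree,
# texel_1 is seen by a single view.
predictions = {
    "texel_0": [(200, 50, 50), (180, 70, 40)],
    "texel_1": [(120, 120, 120)],
}
print(bake_by_averaging(predictions))
# {'texel_0': (190, 60, 45), 'texel_1': (120, 120, 120)}
print(max_view_disagreement(predictions["texel_0"]))  # 20
```

The merged color (190, 60, 45) belongs to neither view; repeated over many texels, such disagreements appear as blur or visible seams. Producing the texture directly in one step avoids having to reconcile independent views afterwards.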

What's the solution?

The researchers built SeqTex to generate UV texture maps directly, in a single end-to-end model rather than through a separate projection step. By leveraging powerful pretrained video foundation models, SeqTex produces textures that remain consistent across viewpoints and generalize well to a wide variety of objects.
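The title's phrase "in Video Sequence" suggests that the viewpoint images and the UV texture map are handled as frames of one sequence, so a video model can generate them jointly. The sketch below only illustrates that data layout under stated assumptions: the frame order (views first, UV map last), the shared resolution, and the names `Frame`, `build_texture_sequence`, and `extract_uv` are all hypothetical, and the actual SeqTex frame layout and conditioning are not described in this summary.

```python
from dataclasses import dataclass

# An image is stored as rows of (R, G, B) pixels.
Image = list[list[tuple[int, int, int]]]


@dataclass
class Frame:
    kind: str  # "view" = a rendered viewpoint, "uv" = the UV texture map
    pixels: Image


def blank_image(height: int, width: int) -> Image:
    """Create an all-black placeholder image of the given size."""
    return [[(0, 0, 0) for _ in range(width)] for _ in range(height)]


def build_texture_sequence(num_views: int, height: int, width: int) -> list[Frame]:
    """Lay out viewpoint frames followed by one UV-map frame as a single 'video'.

    Hypothetical layout: every frame shares one resolution so a video model
    can process them together, and the UV map is placed last.
    """
    if num_views < 1:
        raise ValueError("need at least one viewpoint frame")
    frames = [Frame("view", blank_image(height, width)) for _ in range(num_views)]
    frames.append(Frame("uv", blank_image(height, width)))
    return frames


def extract_uv(frames: list[Frame]) -> Image:
    """Read the texture straight from the UV frame; no separate baking step."""
    uv_frames = [f for f in frames if f.kind == "uv"]
    if len(uv_frames) != 1:
        raise ValueError("expected exactly one UV frame")
    return uv_frames[0].pixels


frames = build_texture_sequence(num_views=4, height=2, width=3)
print([f.kind for f in frames])  # ['view', 'view', 'view', 'view', 'uv']
```

Because the UV map is itself one of the generated frames, the final texture can be read out directly rather than assembled from separate views afterwards.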

Why it matters?

This matters because better texture generation improves the realism of 3D models used in gaming, movies, virtual reality, and other digital content, making virtual objects look more natural and appealing.

Abstract

SeqTex, an end-to-end framework, leverages pretrained video foundation models to generate high-fidelity UV texture maps directly, improving 3D consistency and generalization.