ShortV: Efficient Multimodal Large Language Models by Freezing Visual Tokens in Ineffective Layers

Qianhao Yuan, Qingyu Zhang, Yanjiang Liu, Jiawei Chen, Yaojie Lu, Hongyu Lin, Jia Zheng, Xianpei Han, Le Sun

2025-04-04

Summary

This paper is about making AI models that understand both images and text run faster by finding and freezing the parts that aren't doing much work when processing images.

What's the problem?

AI models that handle both images and text are really big and take a lot of computing power to run because they have to process a large number of visual tokens.

What's the solution?

The researchers found a way to identify the layers in the AI model that don't contribute much when processing images and then freeze those layers. This significantly reduces the amount of computation needed without sacrificing performance.

Why it matters?

This work matters because it can make these AI models more efficient, allowing them to be used on devices with less computing power or to process information faster.

Abstract

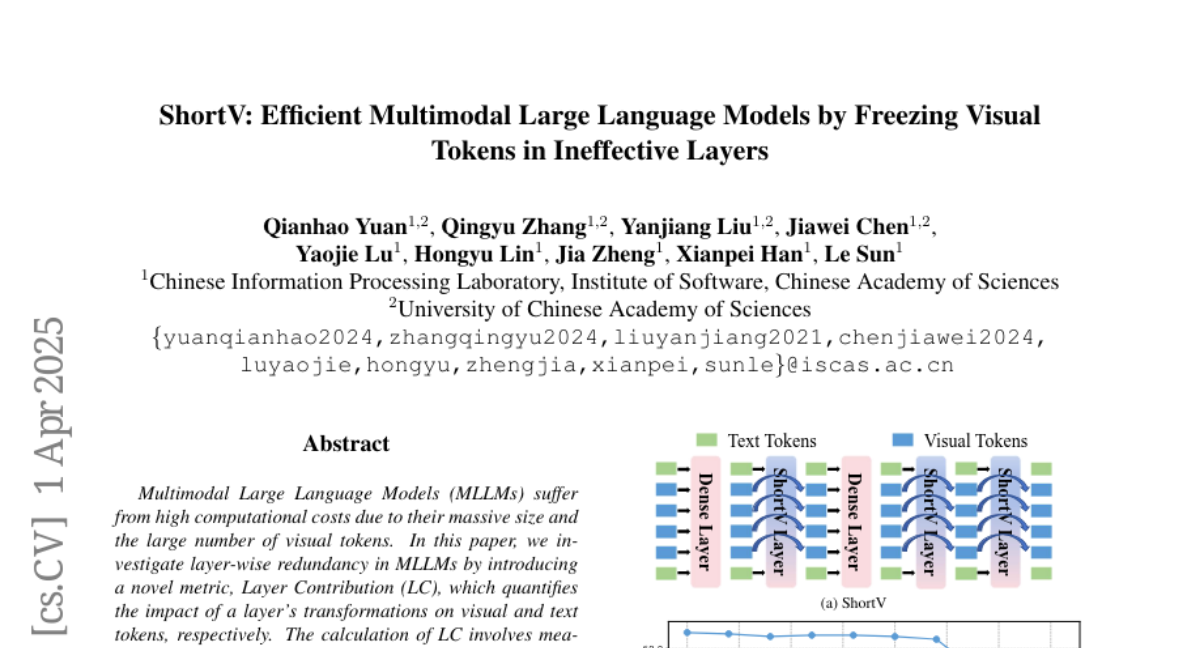

Multimodal Large Language Models (MLLMs) suffer from high computational costs due to their massive size and the large number of visual tokens. In this paper, we investigate layer-wise redundancy in MLLMs by introducing a novel metric, Layer Contribution (LC), which quantifies the impact of a layer's transformations on visual and text tokens, respectively. The calculation of LC involves measuring the divergence in model output that results from removing the layer's transformations on the specified tokens. Our pilot experiment reveals that many layers of MLLMs exhibit minimal contribution during the processing of visual tokens. Motivated by this observation, we propose ShortV, a training-free method that leverages LC to identify ineffective layers, and freezes visual token updates in these layers. Experiments show that ShortV can freeze visual token in approximately 60\% of the MLLM layers, thereby dramatically reducing computational costs related to updating visual tokens. For example, it achieves a 50\% reduction in FLOPs on LLaVA-NeXT-13B while maintaining superior performance. The code will be publicly available at https://github.com/icip-cas/ShortV