Soft Thinking: Unlocking the Reasoning Potential of LLMs in Continuous Concept Space

Zhen Zhang, Xuehai He, Weixiang Yan, Ao Shen, Chenyang Zhao, Shuohang Wang, Yelong Shen, Xin Eric Wang

2025-05-22

Summary

This paper introduces Soft Thinking, a training-free approach that helps large language models reason better by letting them work with flexible, abstract concepts instead of only fixed, discrete words or symbols.

What's the problem?

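AI models often struggle with complex reasoning, especially in math or coding, because standard step-by-step reasoning forces them to commit to a single discrete word (token) at every step. This makes it hard for them to keep abstract or in-between ideas in play while they work through a problem.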

What's the solution?

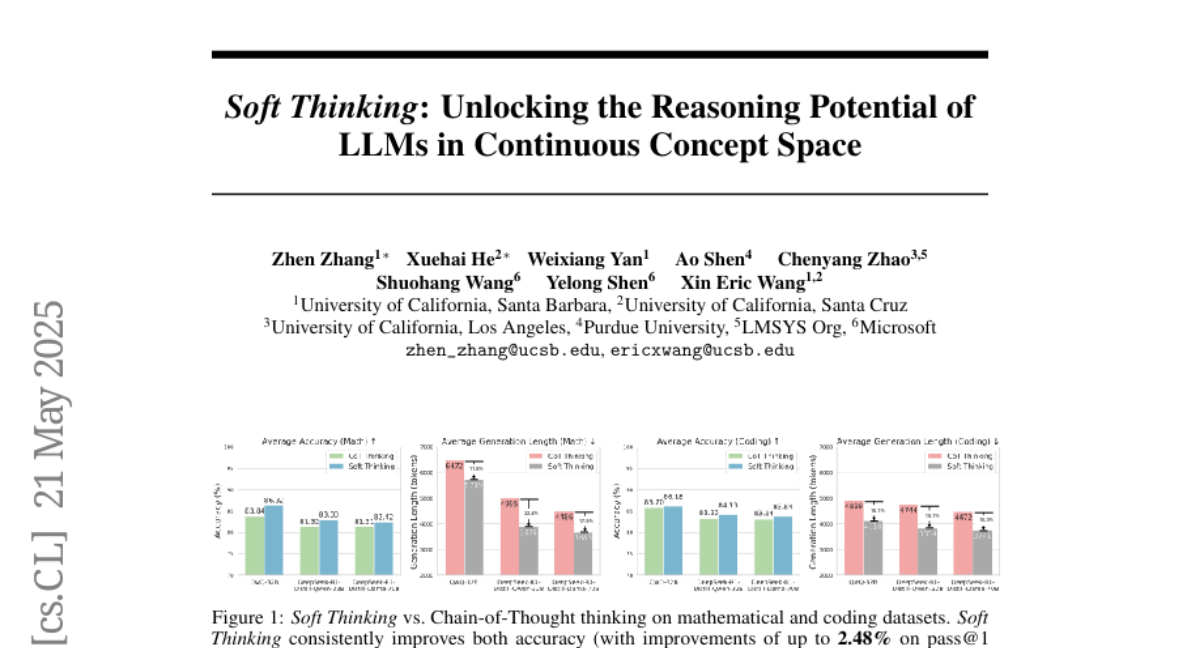

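The researchers introduced Soft Thinking, which lets the model reason with 'soft' concept tokens that live in a continuous space. Instead of committing to one word at each step, the model passes along a probability-weighted blend of the embeddings of all candidate tokens, so it can think in a more fluid way and keep several possibilities open at once. Because this needs no extra training, it can be applied to existing models, and it leads to better results on tough math and coding problems.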
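To make the idea of a "soft" concept token concrete, here is a minimal sketch in plain Python that contrasts ordinary greedy decoding (pick one token, use its embedding) with a concept token built as a probability-weighted mix of all token embeddings. It assumes a toy vocabulary with hand-written logits and embeddings; the function names `discrete_token_embedding` and `concept_token_embedding` are hypothetical, and details of the paper's actual method (such as any filtering of the probabilities before mixing) are not reproduced here.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Turn raw logits into a probability distribution over the vocabulary."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp((x - m) / temperature) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def discrete_token_embedding(logits: List[float],
                             embeddings: List[List[float]]) -> List[float]:
    """Standard greedy decoding: commit to the single most likely token."""
    best = max(range(len(logits)), key=lambda i: logits[i])
    return list(embeddings[best])


def concept_token_embedding(logits: List[float],
                            embeddings: List[List[float]],
                            temperature: float = 1.0) -> List[float]:
    """Soft step (hypothetical helper): weight every token embedding by its probability."""
    probs = softmax(logits, temperature)
    dim = len(embeddings[0])
    mixed = [0.0] * dim
    for p, vec in zip(probs, embeddings):
        for d in range(dim):
            mixed[d] += p * vec[d]
    return mixed


if __name__ == "__main__":
    # Toy vocabulary of 3 tokens, each with a 2-dimensional embedding.
    embeddings = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    # The model is torn between tokens 0 and 1; token 2 is very unlikely.
    logits = [2.0, 2.0, -10.0]

    hard = discrete_token_embedding(logits, embeddings)
    soft = concept_token_embedding(logits, embeddings)
    print(hard)                          # [1.0, 0.0]: only token 0 survives
    print([round(x, 3) for x in soft])   # [0.5, 0.5]: both candidates are kept
```

In a real model, `embeddings` would be the model's input embedding matrix, and the mixed vector would be fed back as the next input in place of a sampled token ID. When one token dominates the distribution, the concept token collapses to nearly that token's embedding, so ordinary decoding appears as a limiting case of the soft step.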

Why it matters?

This matters because it makes AI models more accurate and more efficient at solving complicated problems, helping students, programmers, and scientists get better answers faster.

Abstract

Soft Thinking, a training-free method, enhances reasoning by generating soft, abstract concept tokens in a continuous space, improving accuracy and efficiency on mathematical and coding benchmarks.