Sonata: Self-Supervised Learning of Reliable Point Representations

Xiaoyang Wu, Daniel DeTone, Duncan Frost, Tianwei Shen, Chris Xie, Nan Yang, Jakob Engel, Richard Newcombe, Hengshuang Zhao, Julian Straub

2025-03-21

Summary

This paper is about creating a better way for AI to understand 3D shapes, even with limited information.

What's the problem?

Current AI models that learn to recognize 3D shapes often focus on simple spatial features instead of truly understanding the shape.

What's the solution?

The researchers developed a method called Sonata that hides spatial information and emphasizes other features, forcing the AI to learn more robust representations.

Why it matters?

This work matters because it leads to AI that is better at understanding and processing 3D data, which is useful in robotics, computer vision, and other fields.

Abstract

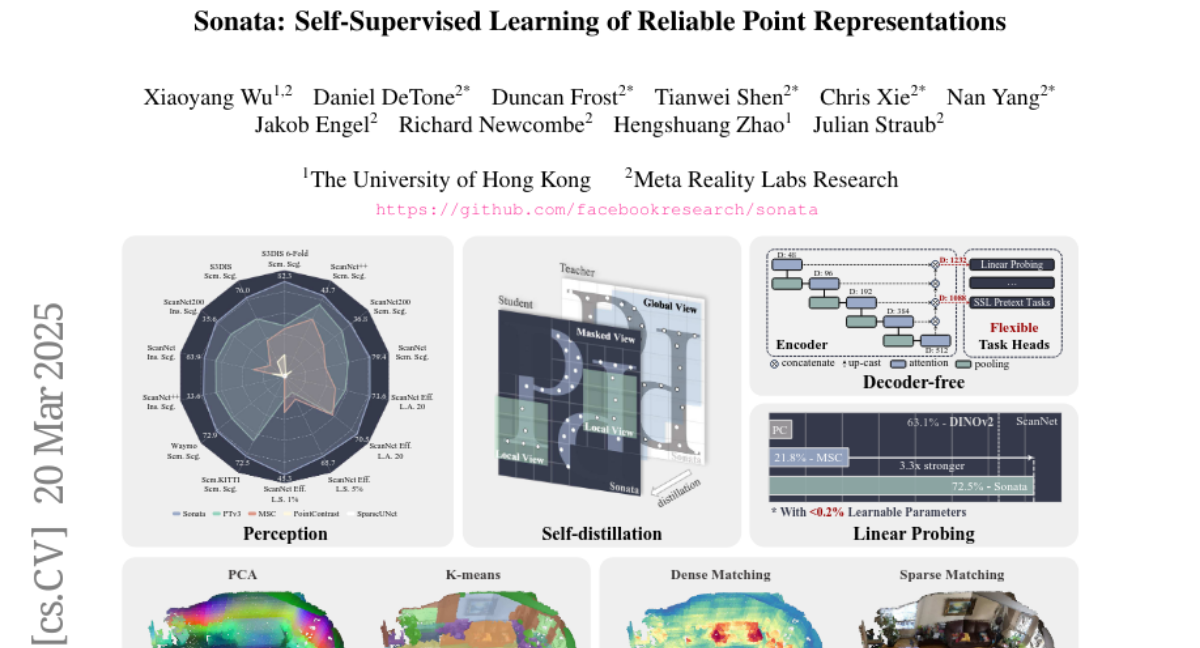

In this paper, we question whether we have a reliable self-supervised point cloud model that can be used for diverse 3D tasks via simple linear probing, even with limited data and minimal computation. We find that existing 3D self-supervised learning approaches fall short when evaluated on representation quality through linear probing. We hypothesize that this is due to what we term the "geometric shortcut", which causes representations to collapse to low-level spatial features. This challenge is unique to 3D and arises from the sparse nature of point cloud data. We address it through two key strategies: obscuring spatial information and enhancing the reliance on input features, ultimately composing a Sonata of 140k point clouds through self-distillation. Sonata is simple and intuitive, yet its learned representations are strong and reliable: zero-shot visualizations demonstrate semantic grouping, alongside strong spatial reasoning through nearest-neighbor relationships. Sonata demonstrates exceptional parameter and data efficiency, tripling linear probing accuracy (from 21.8% to 72.5%) on ScanNet and nearly doubling performance with only 1% of the data compared to previous approaches. Full fine-tuning further advances SOTA across both 3D indoor and outdoor perception tasks.